Turning a single product photo into a polished video ad used to require a motion designer, a timeline full of keyframes, and half a day you didn't have. Now, a growing number of AI tools can do it in minutes, but the quality gap between them is massive. If you're searching for the best AI image to video generator, the real challenge isn't finding options; it's figuring out which one actually produces results you'd run as a paid ad or post on a brand account.

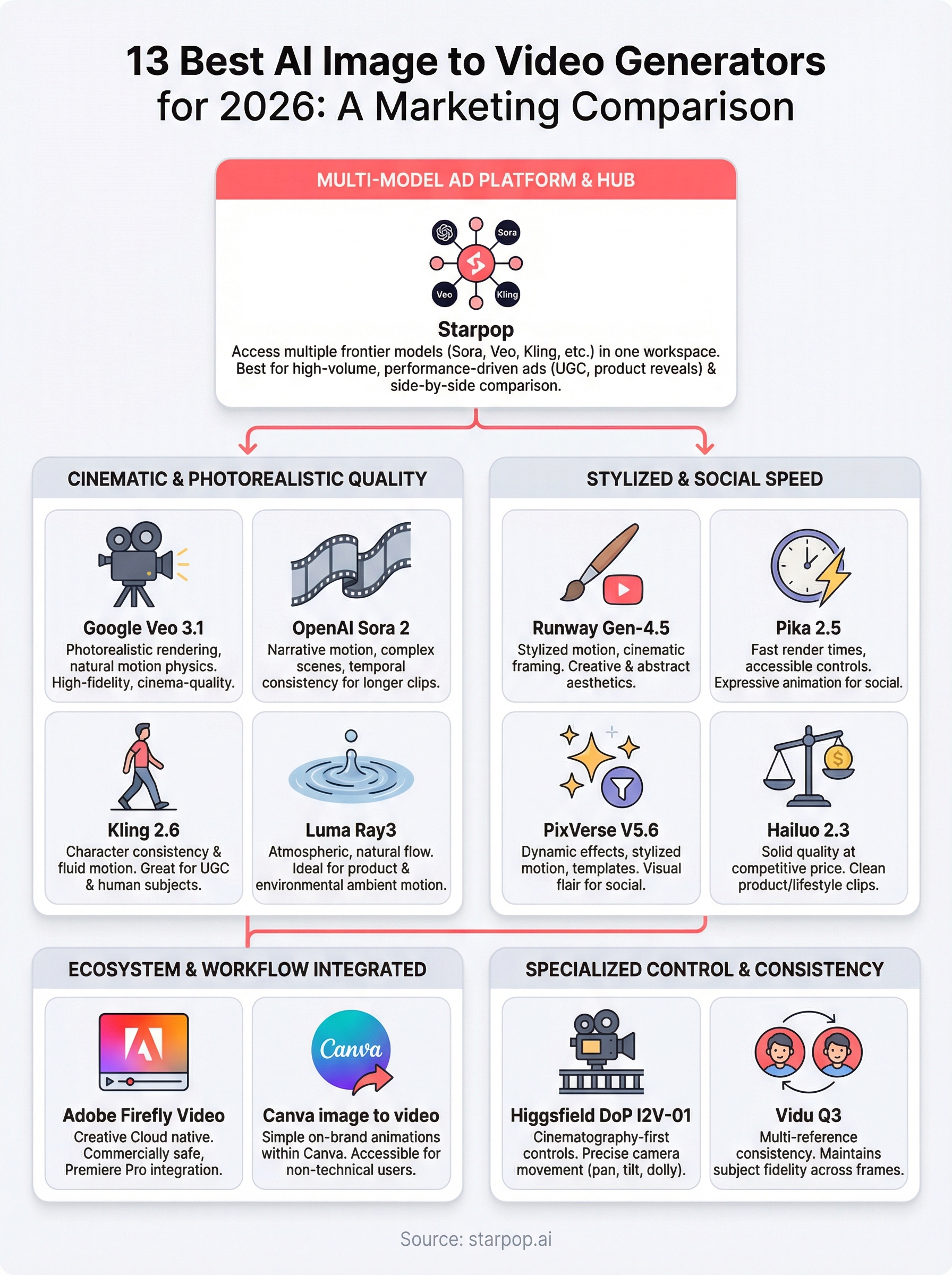

We built Starpop to solve exactly this problem, giving marketers and e-commerce teams access to multiple frontier AI video models (Sora, Veo, Kling, and more) through a single platform purpose-built for ad creation. That hands-on experience testing and integrating these models daily puts us in a strong position to break down what each tool does well, where it falls short, and who it's best suited for.

In this comparison, we evaluate 13 AI image-to-video generators across output quality, motion realism, pricing, and practical use cases for marketing. Whether you need scroll-stopping UGC clips or cinematic product reveals, this guide will help you pick the right tool without wasting credits on the wrong one.

1. Starpop

Starpop is built specifically for marketers and e-commerce teams who need high-volume, high-quality video content without juggling five different subscriptions. Unlike general-purpose AI creative tools, Starpop consolidates top frontier models into one workspace designed around the realities of ad production.

What Starpop is best at

Starpop excels at performance-driven content creation, particularly UGC-style talking-head ads, product reveal clips, and social content designed to convert. If you're running paid ads on TikTok or Instagram and need a steady pipeline of creative variations, Starpop gives you the infrastructure to produce and test them at scale. The platform is also the strongest option when you want to reverse-engineer a viral format: paste a TikTok or Instagram URL into the Video Analyzer tool, and Starpop pulls the scene-by-scene structure so you can rebuild it with your own product.

How Starpop handles image to video

Rather than relying on a single proprietary model, Starpop gives you direct access to multiple frontier video models including Google Veo, Kling, and OpenAI Sora through one interface. You upload your image, write a motion prompt, choose your model, and generate. This is exactly what makes it stand out when you're evaluating the best AI image to video generator options: different models produce different motion styles, and Starpop lets you compare outputs side by side without switching platforms or managing separate billing accounts.

Running the same image through multiple models in one session lets you pick the best output rather than settling for whatever a single tool produces.

What you can control in Starpop

You can adjust motion intensity, camera angle direction, and clip duration depending on the model you select. Starpop also supports batch processing, so you can queue up to 20 image-to-video jobs simultaneously instead of waiting on each render. For teams managing multiple client accounts, role-based access and shared credit pools keep projects organized without requiring separate logins or subscriptions per seat.
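The 20-job queue limit mentioned above suggests a simple way to plan larger runs: split the image list into groups no bigger than the limit. The sketch below is plain Python and does not call any Starpop API. The `chunk_jobs` helper, the file names, and the motion prompt are hypothetical and exist only for illustration.

```python
from typing import Iterator, List, Tuple

MAX_BATCH = 20  # queue limit stated in the article; adjust if your plan differs

# Hypothetical job shape: (image path, motion prompt)
Job = Tuple[str, str]


def chunk_jobs(jobs: List[Job], size: int = MAX_BATCH) -> Iterator[List[Job]]:
    """Yield consecutive groups of at most `size` jobs."""
    if size < 1:
        raise ValueError("size must be at least 1")
    for start in range(0, len(jobs), size):
        yield jobs[start:start + size]


# 45 placeholder jobs are split into batches of 20, 20, and 5
jobs = [(f"product_{i:02d}.png", "slow push in") for i in range(45)]
batches = list(chunk_jobs(jobs))
batch_sizes = [len(batch) for batch in batches]
print(batch_sizes)  # [20, 20, 5]
```

Planning batches this way keeps each submission inside the queue limit and makes it easy to estimate how many rounds a campaign needs before you spend credits.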

Best workflows for ads and social content

The most efficient workflow in Starpop starts with image generation using one of the 225-plus templates, then animates the result using your chosen video model, adds a cloned or generated voiceover, and exports a ready-to-upload clip. Product-holding characters, TED-style talking heads, and cinematic b-roll sequences all have dedicated templates that cut setup time significantly. You can also localize the same clip into multiple languages using the lip-sync feature without re-shooting or re-generating the video base.

Starpop pricing and plan fit

Starpop operates on a subscription model with pooled credits that cover usage across video, image, and audio generation. Because you're accessing multiple frontier models under one plan instead of paying for each separately, the cost-per-asset runs substantially lower than managing individual subscriptions to Sora, Veo, and Kling. Teams producing more than 50 assets per month will see the clearest cost advantage, though solo creators running paid ad campaigns also benefit from the batch processing and integrated workflow.

2. Google Veo 3.1

Google Veo 3.1 is one of the highest-fidelity video generation models available right now, and it shows in the output. It produces smooth, cinema-quality motion from both text and image inputs, making it a strong option for marketers who need visuals that look professionally produced rather than AI-generated.

What Veo is best at

Veo leads on photorealistic rendering and natural motion physics, especially with product shots, outdoor scenes, and anything requiring convincing lighting behavior. If your image contains reflective surfaces, fabrics, or complex backgrounds, Veo animates them with fewer visual artifacts than most competing models at this quality tier.

How Veo image to video works

You feed Veo a source image plus a motion prompt, and the model generates a short video clip, typically between 5 and 8 seconds, that extends the scene in a physically plausible way. The generation pipeline interprets spatial depth from the image and applies motion accordingly, so a product on a shelf will animate differently than the same product held in a hand.

Veo's depth-aware motion engine is one of the clearest technical advantages it holds over most consumer-grade image-to-video tools.

Control options and consistency tools

Veo gives you camera movement instructions such as push in, pan left, and orbit, along with motion intensity controls. Character and object consistency across frames is strong, but Veo performs best when the source image has a clear focal subject rather than a cluttered composition.

Where Veo fits in a marketing workflow

For high-stakes creative assets like hero videos, product launch clips, or brand content where visual quality is non-negotiable, Veo is hard to beat. Rapid iteration at scale is less practical since the per-generation cost runs higher than budget-tier models.

Veo pricing and access

Google offers Veo through Google AI Studio and the Gemini API, with usage billed per second of video generated. Accessing Veo inside Starpop means you use it alongside other frontier models without managing separate API credentials or billing accounts.
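Per-second billing makes budgeting a matter of simple multiplication: clips times seconds per clip times the rate. The sketch below illustrates the arithmetic only. The rate is a placeholder, not a real Veo price, and the `estimate_cost` helper is hypothetical; check the current pricing page before budgeting.

```python
def estimate_cost(clips: int, seconds_per_clip: float, rate_per_second: float) -> float:
    """Return the total cost for a run billed per second of generated video."""
    if clips < 0 or seconds_per_clip < 0 or rate_per_second < 0:
        raise ValueError("inputs must be non-negative")
    return round(clips * seconds_per_clip * rate_per_second, 2)


# PLACEHOLDER rate for illustration only; this is NOT an actual published price
PLACEHOLDER_RATE = 0.50

# Ten 8-second clips at the placeholder rate: 10 * 8 * 0.50
cost = estimate_cost(clips=10, seconds_per_clip=8, rate_per_second=PLACEHOLDER_RATE)
print(cost)  # 40.0
```

Because cost scales linearly with both clip count and clip length, trimming test renders to the shortest usable duration is the quickest way to stretch a budget during iteration.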

3. Kling 2.6

Kling 2.6 is a video generation model developed by Kuaishou, and it has earned a strong reputation for character consistency and fluid motion across short-form clips. For marketers who need to animate product images or lifestyle scenes, Kling delivers results that hold up well at social media resolutions and aspect ratios.

What Kling is best at

Kling performs best when you need human subjects and product characters to move naturally within a scene. The model handles facial expressions, hand movements, and body dynamics better than most tools at a comparable price point, making it a reliable choice for UGC-style content where unnatural movement would immediately undermine credibility.

How Kling image to video works

You upload a source image along with a text motion prompt, and Kling generates a 5-10 second clip that animates the subject according to your instructions. The model reads the composition of your image and distributes motion across foreground subjects, midground elements, and background layers independently, which helps avoid the stiff, uniform movement common in lower-tier tools.

Kling's ability to move foreground and background elements independently is one reason it consistently ranks among the best AI image to video generator options for lifestyle and character-driven content.

Control options and common limitations

Kling gives you camera motion controls and motion amplitude settings, but complex multi-subject scenes can produce inconsistent results. Objects with intricate geometry, such as product packaging with small text, sometimes distort during animation, so running a test render before committing to a full batch is worth the extra credit spend.

Where Kling fits in a marketing workflow

This model fits best in workflows where character-forward creatives are the priority, particularly lifestyle product shots, influencer-style clips, and story-driven social ads where the human element needs to feel authentic rather than synthetic.

Kling pricing and access

Kling offers tiered subscription plans with monthly credit allocations based on usage volume. You can also access Kling through Starpop alongside other frontier models, which removes the need to manage a separate account and billing cycle.

4. Runway Gen-4.5

Runway Gen-4.5 is a well-established model from Runway ML, and it brings a strong combination of motion quality and editing flexibility to the image-to-video space. It consistently delivers smooth, coherent clips that hold up well across a range of subject types.

What Runway is best at

Runway shines when you need stylized motion and cinematic framing rather than strict photorealism. It handles abstract and artistic imagery particularly well, making it a strong pick for brands that lean on visual identity and aesthetics over raw realism. Creative agencies and content studios that prioritize a distinct look over documentary-style accuracy tend to get the most out of it.

How Runway image to video works

You upload a source image and a motion prompt, and Runway generates a clip up to 10 seconds long. The model interprets the composition and applies motion based on your prompt, with a particular strength in translating creative language into visual results. Describing a mood or atmosphere in your prompt tends to produce more expressive output than purely mechanical motion instructions.

Runway's prompt responsiveness makes it one of the more creatively flexible options when you're searching for the best AI image to video generator for brand-forward content.

Control options for motion and editing

Gen-4.5 gives you camera direction controls and motion brush tools that let you isolate which parts of an image animate and which stay still. That control matters most for product shots where you want the background to shift while the product stays sharp and stable.

Where Runway fits in a marketing workflow

Runway works best for hero creative and brand content where visual distinctiveness matters more than generating 20 variations quickly. High-volume iteration is slower and more expensive compared to batch-capable platforms.

Runway pricing and access

Runway offers subscription tiers starting at a free plan, with paid plans unlocking longer clips and higher resolution exports. Credits carry over monthly on paid plans, which adds some flexibility to your budget.

5. OpenAI Sora 2

OpenAI Sora 2 generates video from text and images using a diffusion transformer architecture that treats video as a unified sequence rather than frame-by-frame output. The result is motion that feels more temporally coherent than many competing models, particularly for longer or more complex scenes.

What Sora is best at

Sora 2 performs best on highly descriptive prompts that require narrative motion, such as a product moving through an environment or a subject interacting with a space. It handles scene complexity well, meaning images with multiple elements tend to animate more holistically than they do in single-subject-optimized models. If your creative brief calls for immersive, story-driven video, Sora consistently delivers a cinematic quality that's difficult to match at this tier.

How Sora image to video behaves

You supply a source image and a detailed motion prompt, and Sora generates a clip that extends the scene according to your description. The model interprets spatial relationships in the image and applies motion with strong temporal consistency, keeping objects and subjects recognizable across the full duration of the clip rather than drifting or warping mid-sequence.

Sora's temporal consistency is one of its most practical advantages when you're evaluating the best AI image to video generator for content where visual stability matters across every frame.

Control options and consistency risks

Sora 2 gives you prompt-based motion direction as the primary control lever, but granular camera movement controls are less developed compared to tools like Runway or Veo. Complex product packaging and fine text details can distort during animation, so you should test renders on detail-heavy images before committing to a full batch.

Where Sora fits in a marketing workflow

Sora works best for premium brand content and hero creative where the visual narrative carries weight. High-volume variation testing is better handled through a platform like Starpop that batches multiple model outputs simultaneously.

Sora pricing and access

Sora 2 is available through ChatGPT Plus, Pro, and API access, with generation limits tied to your subscription tier. API users pay per second of video generated, which adds up quickly on longer clips.

6. Luma Ray3

Luma Ray3, developed by Luma AI, is the video model behind the company's Dream Machine platform, and it prioritizes smooth, physically grounded motion across a wide range of image types. It handles both photorealistic and stylized inputs with consistent results, making it a versatile option for teams that work across different visual styles without wanting to switch tools.

What Luma is best at

Luma Ray3 excels at generating fluid, natural motion from product and lifestyle images, particularly when the scene involves organic movement like flowing fabric, liquid, or ambient environmental elements. If your source image leans toward clean, high-contrast product photography, Luma tends to produce clips that feel deliberate rather than artificially animated.

How Luma image to video works

You upload a source image and a motion prompt, and Luma generates a clip by interpolating motion across the full scene rather than isolating individual subjects. This approach works well for wide-angle and environmental compositions where motion should feel atmospheric rather than mechanical. Clips typically run between 5 and 9 seconds depending on the scene.

Luma's scene-wide motion interpolation makes it one of the stronger picks among the best AI image to video generator options when your creative calls for ambient animation rather than tight subject-focused action.

Control options and output formats

Luma gives you camera direction inputs and motion strength settings, with export options covering standard social media resolutions including vertical, square, and widescreen formats. Fine-grained subject isolation is less developed compared to tools like Runway's motion brush, so complex multi-subject images may require cleaner compositions to get reliable output.

Where Luma fits in a marketing workflow

Luma works best for atmospheric product reveals and ambient brand content where the goal is mood and visual texture rather than precise character motion. High-volume iteration is less practical here, so it fits workflows that prioritize quality over output quantity.

Luma pricing and access

Luma AI offers a free tier with limited monthly generations, with paid plans unlocking higher resolution exports and faster render queues. Accessing Ray3 through Starpop lets you use it alongside other frontier models without managing a separate account or billing cycle.

7. Adobe Firefly Video

Adobe Firefly Video is Adobe's native AI video generation model, built directly into the Creative Cloud ecosystem. If your team already works inside Premiere Pro or After Effects, Firefly's integration removes the friction of moving files between platforms.

What Firefly is best at

Firefly performs best when you need commercially safe, brand-controlled video output. Adobe trains Firefly on licensed content, which means every clip you generate carries content credentials that reduce legal exposure for brands running paid campaigns. This is a meaningful differentiator if your compliance requirements are strict.

How Firefly image to video works

You supply a source image and a motion prompt, and Firefly animates the scene using Adobe's generative model. The output integrates directly into Premiere Pro's timeline, so you can trim, layer, and color grade the result without exporting to a separate editor. Clips typically run between 4 and 8 seconds depending on the scene complexity.

For teams that live inside Adobe's ecosystem, Firefly's native timeline integration is one of the most practical advantages you'll find when comparing the best AI image to video generator options.

Creative controls and style handling

Firefly gives you motion intensity controls and camera direction prompts, along with style reference inputs that help you maintain visual consistency across a campaign. It handles clean product photography and flat-lay compositions reliably, though highly complex multi-subject images can produce less predictable motion results.

Where Firefly fits in a marketing workflow

Firefly works best for brands and agencies that prioritize licensing clarity and Creative Cloud continuity. If your production pipeline already runs through Adobe tools, adding Firefly video generation to that workflow saves significant time on file management and handoffs.

Firefly pricing and access

Firefly Video is available through Adobe Creative Cloud plans, with generative credits included at each subscription tier and additional credits purchasable if you exceed your monthly allocation. Higher-tier plans unlock faster generation and longer clip durations.

8. Pika 2.5

Pika 2.5 is a consumer-friendly video generation model that has built a reputation for fast render times and accessible controls, making it a practical option for creators who need quick turnaround on short-form social content without a steep learning curve.

What Pika is best at

Pika performs best on stylized, expressive animations where the goal is eye-catching motion rather than strict photorealism. It handles cartoon-adjacent aesthetics, product close-ups, and graphic design-heavy images particularly well, giving you clips that feel dynamic without requiring highly detailed prompts.

How Pika image to video works

You upload a source image and a short motion prompt, and Pika generates a clip typically between 3 and 5 seconds. The model applies motion globally across the scene, prioritizing visual energy over precise physical accuracy. This approach works well for bold, fast-cutting social content but can produce less predictable results on detailed product photography with fine text or complex geometry.

Pika's strength is speed and style over technical precision, which makes it a better fit for high-frequency content testing than for premium brand hero videos.

Control options and speed considerations

Pika gives you motion seed controls and basic camera direction inputs, and render times are consistently faster than most frontier models in this comparison. If you need to cycle through multiple creative directions quickly, Pika's generation speed makes it easier to run informal A/B comparisons without spending a full afternoon waiting on renders.

Where Pika fits in a marketing workflow

Pika fits best in early-stage creative testing and high-frequency social posting where iteration speed matters more than cinematic output. If you're looking for the best AI image to video generator strictly for polished paid ads, other models in this list will serve you better.

Pika pricing and access

Pika offers a free tier with limited monthly generations, with paid plans starting at a low monthly rate that unlocks longer clips, watermark removal, and faster render queues for higher-volume users.

9. PixVerse V5.6

PixVerse V5.6 is a video generation model that emphasizes creative effects and stylized motion, positioning itself as a strong option for content creators who want more than basic animation from their static images. The platform has grown steadily in the content creation community thanks to its broad template library and accessible interface, which lower the barrier for teams without deep technical prompting experience.

What PixVerse is best at

PixVerse performs best on dynamic, effects-heavy content where visual flair takes priority over strict physical realism. It handles stylized product shots, bold graphic compositions, and short-form social clips particularly well, producing output that grabs attention in a feed without requiring complex prompt engineering.

How PixVerse image to video works

You upload a source image and a motion prompt, and PixVerse generates a clip between 4 and 8 seconds by applying motion and effects across the scene. The model blends subject animation with post-processing effects like speed ramping and visual overlays in a single pass, which reduces the need for additional editing after export.

If you're testing PixVerse as one of the best AI image to video generator options for social content, its built-in effects pipeline can noticeably cut your post-production time on high-frequency campaigns.

Control options, effects, and templates

PixVerse gives you motion style presets, camera direction inputs, and a library of visual effect templates that you can apply directly to your source image. This template system is genuinely useful for teams that need to maintain a consistent aesthetic across multiple ad variations without rebuilding settings from scratch on each generation.

Where PixVerse fits in a marketing workflow

PixVerse fits best in social-first workflows where visual impact and fast iteration matter more than cinematic realism. It suits brands running TikTok and Instagram content at volume rather than premium brand campaigns where photorealism is critical.

PixVerse pricing and access

PixVerse offers a free tier with daily generation limits, with paid plans unlocking higher resolution exports, watermark removal, and priority rendering for heavier production schedules.

10. Hailuo 2.3

Hailuo 2.3, developed by MiniMax, has gained traction among content creators looking for solid image-to-video quality at a competitive price point. It positions itself as a practical middle-ground option between budget tools and premium frontier models, offering output quality that works reliably for social-first content without the higher cost tier of models like Veo or Sora.

What Hailuo is best at

Hailuo performs best on clean, well-lit product photography and simple lifestyle scenes where subject motion is straightforward. It produces smooth animation on images with a clear focal point and minimal background complexity, making it a reasonable option for brands that need consistent, decent-quality clips at scale without prioritizing cinematic output.

How Hailuo image to video works

You supply a source image alongside a motion prompt, and Hailuo generates a short clip by applying motion across the scene, typically between 4 and 6 seconds. The model interprets your prompt and distributes animation across the primary subject and surrounding elements, though it works most predictably when the image composition is clean rather than layered.

Hailuo delivers the most reliable results when your source image has a single dominant subject against a simple or blurred background.

Control options and typical failure modes

Hailuo gives you basic camera direction inputs and motion intensity controls, but the control set is narrower than what you get from Runway or Kling. Complex scenes with multiple overlapping subjects or intricate product details like small text and fine edges tend to produce visible warping artifacts, so pre-testing on detail-heavy images is worth doing before a full production run.

Where Hailuo fits in a marketing workflow

Hailuo suits workflows where volume and speed outweigh premium visual quality, such as mid-funnel retargeting ads or organic social posts that need frequent refreshes. If you're comparing the best AI image to video generator options for high-stakes paid campaigns, stronger models in this list will serve you better.

Hailuo pricing and access

Hailuo offers a free tier with limited daily generations, with paid plans unlocking faster render speeds and higher resolution exports. The cost per generation stays lower than most frontier-tier models, which makes it worth testing if budget efficiency is a priority in your workflow.

11. Higgsfield DoP I2V-01

Higgsfield DoP I2V-01 takes a different approach to image-to-video generation by centering the experience around cinematography-first controls. Where most tools give you a basic motion prompt and a handful of camera direction presets, Higgsfield builds its interface around the logic of a director of photography, giving you granular control over how a shot moves, breathes, and evolves over its full duration.

What Higgsfield is best at

Higgsfield performs best when you need precise, intentional camera movement rather than AI-interpreted motion. If your source image calls for a specific dolly push, arc shot, or parallax shift that needs to land exactly as planned, this model gives you more deterministic control than most alternatives in this comparison. It suits cinematically minded content creators and brand teams who think in shot language rather than abstract motion prompts.

How Higgsfield image to video works

You upload a source image and select your desired camera movement from a structured set of shot types, rather than relying solely on open-ended text prompts. Higgsfield then generates a clip that executes that movement across the scene with strong spatial coherence, typically between 4 and 8 seconds depending on the shot type selected.

Higgsfield's shot-type selection system gives you more predictable camera results than prompt-only tools when precise movement is non-negotiable.

Camera and motion controls

Higgsfield gives you named camera movement options including push in, pull out, orbit, and tilt, alongside motion speed controls that let you dial in how aggressively the camera moves through the scene. Subject tracking is reliable on images with a single clear focal point, though wide compositions with competing subjects can reduce overall movement accuracy.

Where Higgsfield fits in a marketing workflow

This model fits best in workflows where cinematic camera work defines the creative brief, such as premium product reveals or high-production brand content where the shot design carries the visual story rather than heavy subject animation.

Higgsfield pricing and access

Higgsfield operates on a credit-based subscription model, with plans scaling based on monthly generation volume and output resolution. If you're evaluating the best AI image to video generator with a strong cinematography focus, Higgsfield is worth testing at its entry tier before committing to a higher plan.

12. Vidu Q3

Vidu Q3, developed by Shengshu Technology in partnership with Tsinghua University, is a video generation model built around multi-reference consistency and subject fidelity across the full clip duration. It has earned attention for keeping recognizable characters and objects stable across frames without the gradual drift that undermines longer clips in many competing tools.

What Vidu is best at

This model performs best when you need a specific character, product, or visual element to remain consistent throughout the entire generated clip. Its multi-reference input system lets you supply more than one source image, giving the model additional anchoring points to preserve identity across frames. That makes it particularly useful for recurring character-based ads where the subject needs to look the same from the first frame to the last.

How Vidu image to video works

You upload one or more reference images alongside a motion prompt, and Vidu generates a clip that animates the scene while holding the subject's visual identity stable. The model processes your reference inputs together, using them to reduce the visual drift that typically occurs when a single-frame model has to infer missing information mid-sequence.

Vidu's multi-reference system is one of the more practical solutions to character consistency in this comparison, especially for brands building recognizable visual assets across a campaign.

Frame control and reference options

Vidu gives you start-frame and end-frame reference inputs, which let you define where the clip begins and ends visually rather than leaving the motion trajectory entirely to the model. This helps when you need predictable transitions between two states of a product or scene.

Where Vidu fits in a marketing workflow

For marketing teams prioritizing character-driven continuity and brand consistency, Vidu is a strong candidate, particularly for recurring campaign assets where the same product or spokesperson appears across multiple ad variations. If you're evaluating the best AI image to video generator for maintaining visual identity across a series of creatives, Vidu is worth testing alongside multi-model platforms like Starpop.

Vidu pricing and access

Vidu operates on a credit-based subscription model with plans covering different monthly generation volumes and resolution tiers. Testing it at an entry-level plan before committing to higher volume is a practical way to gauge output quality against your specific content needs.

13. Canva image to video

Canva's image-to-video feature sits inside a design platform most marketers already use daily, which is both its biggest strength and its clearest limitation. It won't replace a dedicated video generation model, but for teams that live inside Canva's ecosystem, it removes the need to export assets just to add basic motion.

What Canva is best at

Canva performs best when you need simple, on-brand animations for organic social content without leaving your existing design workflow. If your team already builds graphics in Canva, the image-to-video capability lets you add motion to those assets directly rather than downloading a static file and re-uploading it somewhere else. It's designed for accessibility over output complexity, which suits non-technical users who need presentable results quickly.

How Canva image to video works

You select a static design or uploaded image inside the Canva editor, choose an animation style, and Canva applies motion across the elements. The process is guided rather than open-ended, meaning you're selecting from pre-defined animation behaviors rather than writing custom prompts. Clip lengths are short, typically between 3 and 5 seconds, and the motion engine prioritizes clean, simple transitions over physical realism.

Canva's animation system favors predictability over creative control, which is the right tradeoff for non-technical teams but a real ceiling for performance marketers.

Templates, formats, and export basics

Canva provides hundreds of animated templates across social formats including Stories, Reels, and square feed posts. Export options cover MP4 and GIF formats, with resolution limits tied to your plan tier. If you're evaluating the best AI image to video generator for sophisticated motion output, Canva's template-based system won't satisfy that need.

Where Canva fits in a marketing workflow

Canva fits best in organic social workflows where speed and brand consistency matter more than cinematic motion. It suits social media managers handling high-frequency posting rather than performance marketers running paid campaigns.

Canva pricing and access

Canva offers a free plan with basic animation features, with Canva Pro unlocking premium templates, higher export resolution, and background removal tools that improve the quality of your source images before animation.

What to use next

Choosing the best AI image to video generator comes down to what you actually need to produce. If your priority is cinematic quality on a single campaign asset, Veo or Sora will serve you better than a batch-capable tool. If you need character consistency across a series, Vidu or Kling are worth testing first. If speed and stylized motion matter more than realism, Pika and PixVerse cut turnaround time significantly.
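The decision rules in the paragraph above can be written down as a small lookup table, which is handy if you want to keep a team's model choices consistent. This is only a sketch: the priority keys and the `shortlist` helper are hypothetical names, and the model pairings simply restate the recommendations in this guide.

```python
from typing import Dict, List

# Priority -> models to test first, taken from the recommendations in this guide.
# The key names are hypothetical labels chosen for this example.
RECOMMENDATIONS: Dict[str, List[str]] = {
    "cinematic_quality": ["Veo", "Sora"],
    "character_consistency": ["Vidu", "Kling"],
    "speed_and_style": ["Pika", "PixVerse"],
}


def shortlist(priority: str) -> List[str]:
    """Return the models to test first for a given creative priority."""
    if priority not in RECOMMENDATIONS:
        known = ", ".join(sorted(RECOMMENDATIONS))
        raise ValueError(f"unknown priority {priority!r}; expected one of: {known}")
    return list(RECOMMENDATIONS[priority])


picks = shortlist("character_consistency")
print(picks)  # ['Vidu', 'Kling']
```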

For most performance marketers and e-commerce teams, the practical answer is to stop picking one model and start running several. Different models produce different results on the same source image, and the only way to find your best output is to compare them side by side without juggling five separate subscriptions.

That's exactly what Starpop is built for. Try Starpop's multi-model image-to-video platform and run your first batch without committing to a single tool upfront.