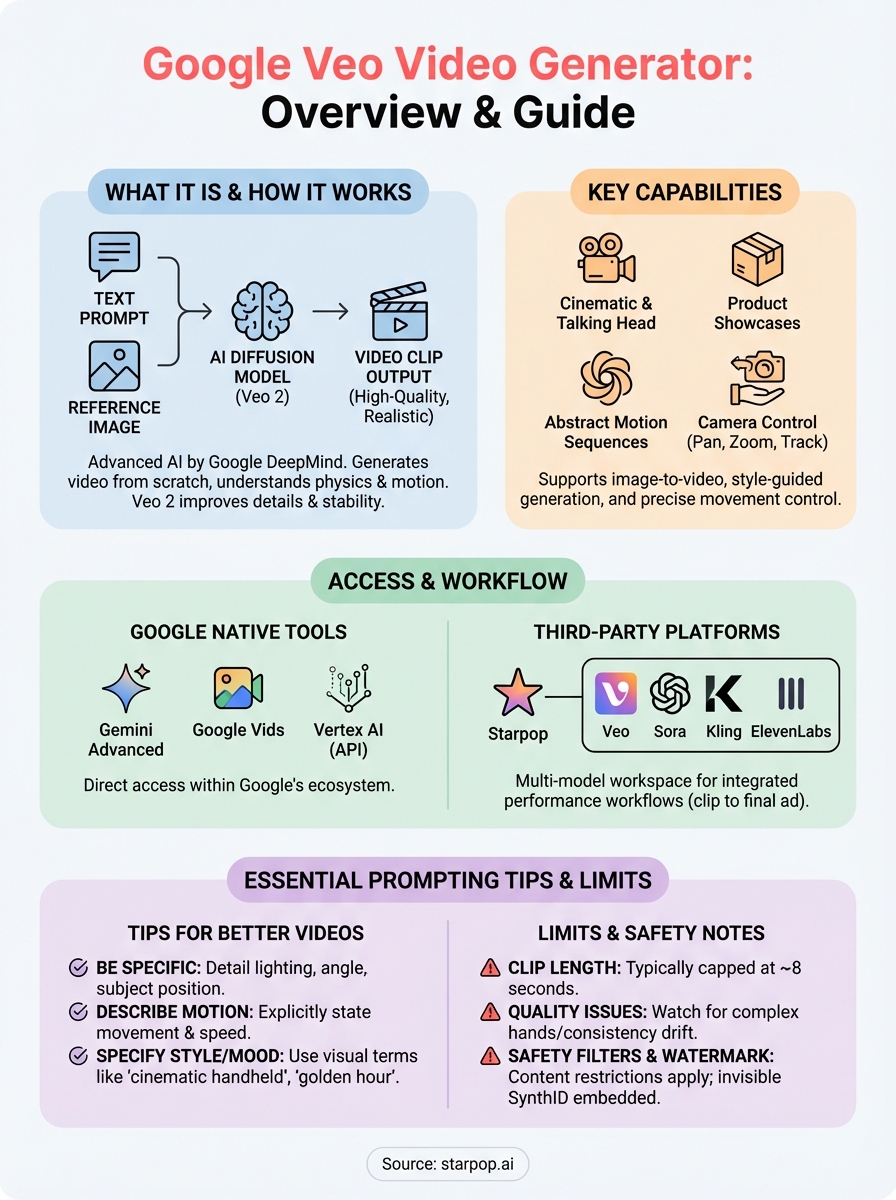

Google Veo is Google's most advanced video generation model, and it's changing how marketers and creators produce video content from scratch. Veo turns text prompts and reference images into high-quality video clips with no camera, no crew, and no editing timeline. For anyone running ads or building content at scale, that's a significant shift in what's possible without a production budget.

But accessing Veo directly through Google's own tools only tells part of the story. The model is available through platforms like Starpop, where it sits alongside other frontier AI models (Sora, Kling, ElevenLabs) in a single workspace built for performance marketing workflows, from generating a video clip to adding voiceover, lip-syncing, and exporting a finished ad.

This article breaks down what Google Veo actually is, how it works, where you can use it, and how to get the most out of it for creating marketing content. Whether you're evaluating Veo as a standalone tool or looking for the most practical way to integrate it into your content pipeline, you'll walk away with a clear picture of its capabilities and limitations.

What Google Veo is and what it can do

Google Veo is a video generation model built by Google DeepMind. It accepts text prompts, images, or a combination of both as inputs and produces video clips that match the described scene, style, or motion. Veo runs on the same research infrastructure that powers Google's other large-scale AI systems, giving it a strong baseline for realism and instruction-following.

Veo is not a video editor. It generates video from scratch based on what you describe, which means your prompt quality directly determines your output quality.

The core technology behind Veo

Veo uses a diffusion-based architecture trained on a large dataset of video and text pairs. That training lets it understand relationships between language and visual motion, so when you write "a product on a marble surface with soft studio lighting," the model interprets what that looks like in motion, not just as a static image.

Released in late 2024, Veo 2 added significant improvements in physics simulation and camera motion handling, along with better fine detail retention across longer clip durations. These upgrades make outputs noticeably more stable and realistic compared to the original version.

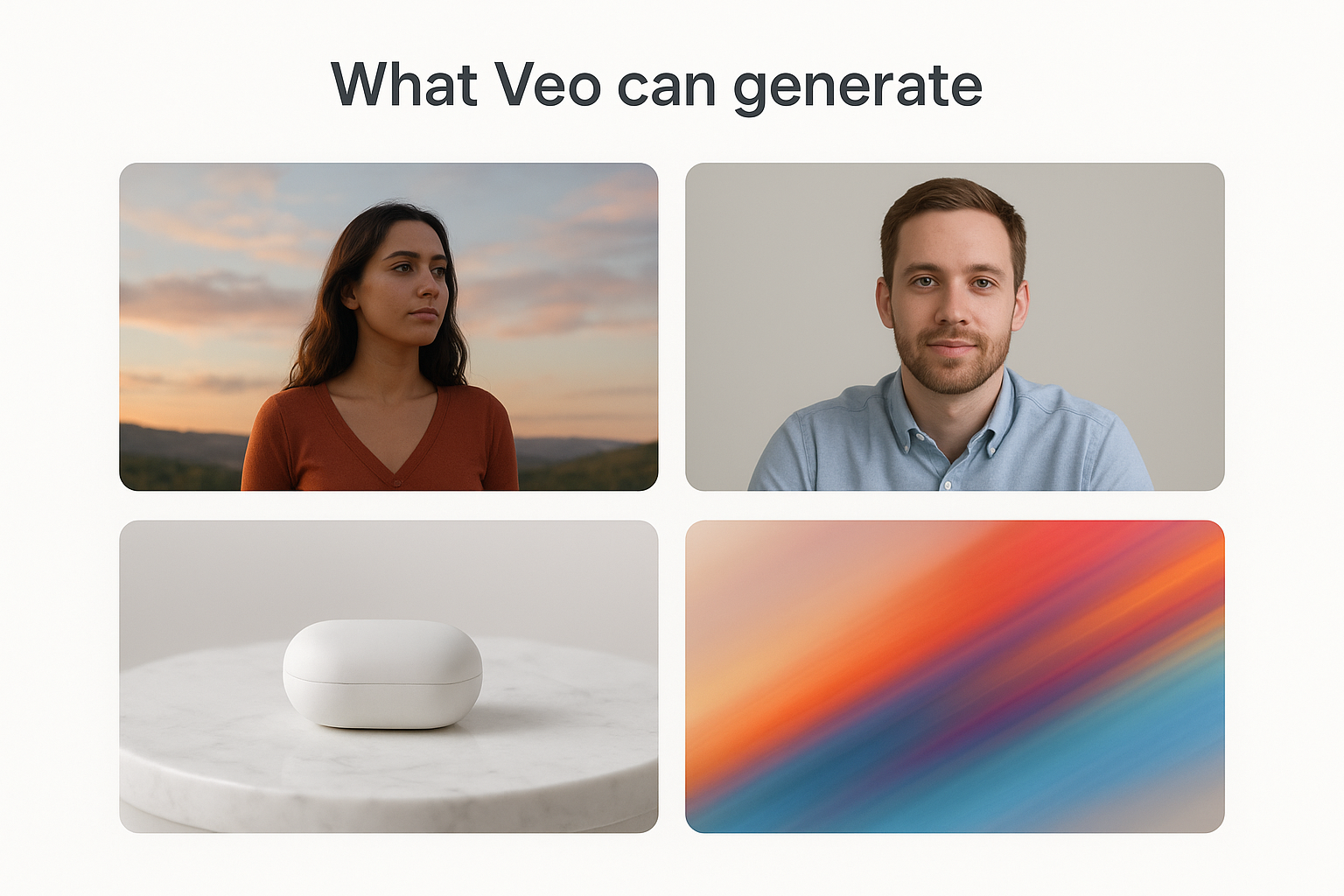

What Veo can generate

The model handles a wide range of output types depending on your prompt and access method. You can generate cinematic clips, talking-head footage, product showcases, and abstract motion sequences. Veo also supports reference image inputs, so you can start with a photo of your product and ask the model to animate it into a full scene.

Here are the main content types Veo supports:

- Text-to-video from descriptive prompts

- Image-to-video animation

- Camera motion control (pan, zoom, tracking shots)

- Style-guided generation (cinematic, documentary, stylized)

Where you can access Veo today

Google has rolled out Veo across several products, but your access depends on what you're building and which account type you hold. Some entry points are consumer-facing, while others are built for developers or enterprise teams.

Google's native tools

You can use Veo directly through Google Gemini (available at gemini.google.com), where Gemini Advanced subscribers get video generation powered by Veo 2. Google Vids, the AI-assisted video creation tool inside Google Workspace, also uses Veo to generate clips within presentation-style projects. For developers, Vertex AI on Google Cloud provides API-level access to Veo for custom integrations and larger-scale production pipelines.

If you need raw API access or want to control exactly how Veo fits into a larger build, Vertex AI gives you the most flexibility.

Third-party platforms

Several platforms pull Veo into a broader multi-model workspace alongside other frontier tools. Starpop gives you access to Veo within an environment that also includes Sora, Kling, and ElevenLabs, so you can switch models without managing separate subscriptions. That matters if your workflow runs from clip generation through voiceover, lip-sync, and final export all in one place.

How to use Google Veo to generate a video

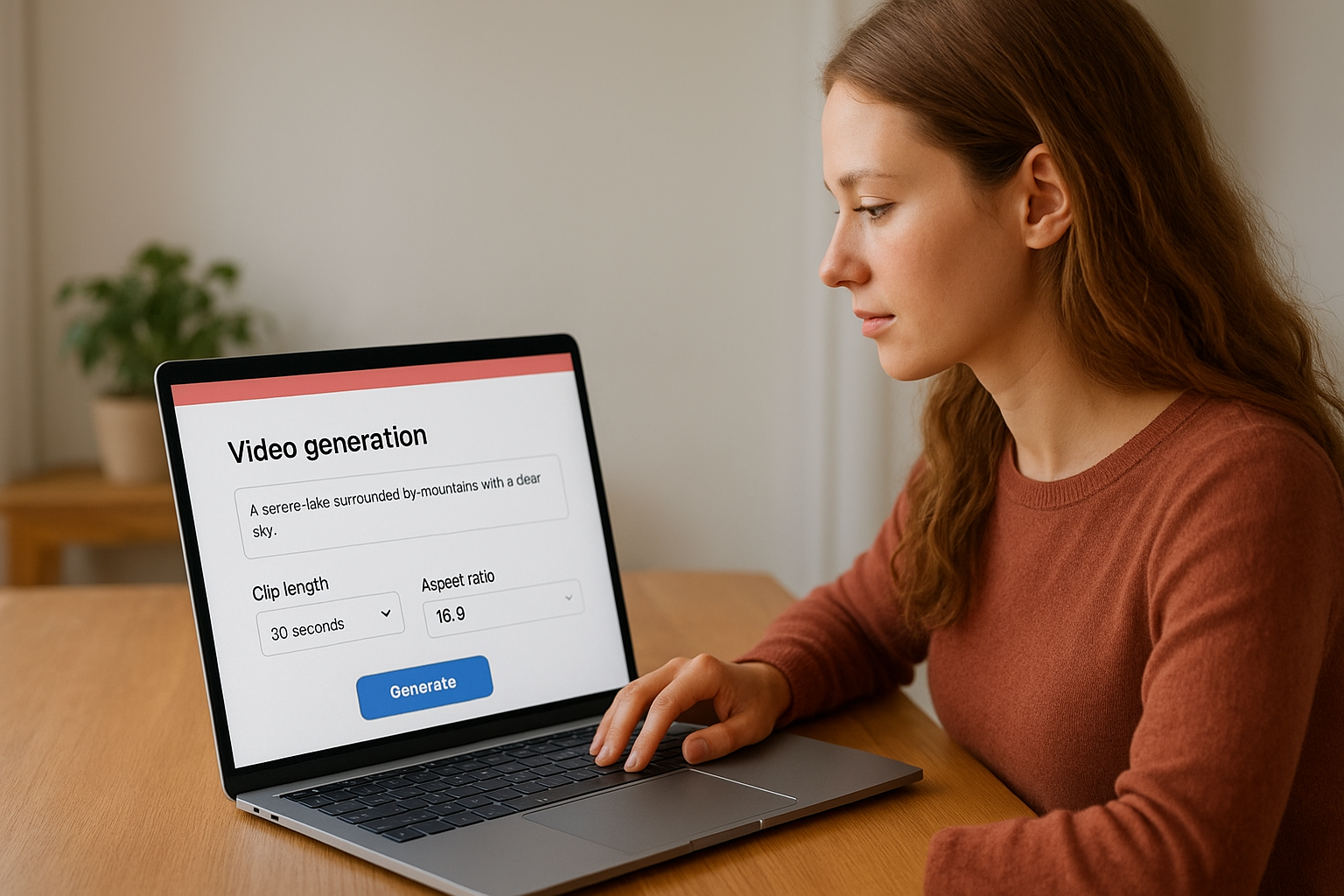

The process for using Veo is straightforward, but the steps vary slightly by platform. Most entry points share the same basic flow: write a prompt, configure your clip settings, and generate.

Your output quality ties directly to your prompt quality, so invest time in describing the scene clearly before you hit generate.

Using Veo through Gemini Advanced

Open gemini.google.com and confirm you have a Gemini Advanced subscription. Follow these steps:

- Select the video generation option from the prompt interface.

- Type a detailed description of the scene you want.

- Choose your clip length and aspect ratio where available.

- Click generate and wait for the rendered output to load.

Using Veo through a multi-model platform

On platforms like Starpop, you select Veo from the model menu, paste in your prompt or upload a reference image, and pick your output settings. The platform manages the API connection, so no separate technical setup is required.

Once your clip renders, you can immediately feed it into the voiceover or lip-sync tools in the same workspace, which cuts the time between a raw generation and a finished ad.

Prompting tips for better Veo videos

Veo responds directly to how you describe a scene, so vague inputs produce vague outputs. Before you generate anything, spend a few minutes writing out your scene with specific visual details: lighting conditions, camera angle, subject position, and background environment. That level of detail gives the model a clear target to work toward.

Describe motion and camera behavior

Veo handles camera movement and subject motion much better when you state them explicitly in your prompt. Instead of "a person walking," write "a person walking slowly toward the camera with soft natural light coming from the left." That one adjustment gives the model direction, speed, and environmental context, which is enough to produce a coherent, intentional shot rather than a random one.

The more your prompt reads like a cinematographer's brief, the closer your output will land to what you had in mind.

Use style and mood references

Naming a specific visual style or tone helps Veo anchor the aesthetic of your clip. Phrases like "cinematic handheld footage," "clean product photography style," or "warm golden hour lighting" guide the model toward a consistent look and feel across your output. Avoid vague emotional descriptors like "powerful" or "inspiring": Veo interprets visual information, not sentiment, so those words add nothing to the scene.
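The tips above can be turned into a reusable template. The following is a minimal sketch in Python, not part of Veo or any Google SDK: the `ShotBrief` class, its field names, and the `vague_terms` helper are all hypothetical names chosen for illustration. It assembles a prompt from the visual details discussed in this section and flags the sentiment words worth cutting.

```python
from dataclasses import dataclass


# Hypothetical helper for illustration; not part of Veo or any Google SDK.
@dataclass
class ShotBrief:
    subject: str          # who or what is on screen, including its motion
    camera: str = ""      # camera angle or movement
    lighting: str = ""    # lighting conditions
    background: str = ""  # environment behind the subject
    style: str = ""       # visual style reference

    def to_prompt(self) -> str:
        """Join the non-empty fields into one comma-separated prompt."""
        fields = [self.subject, self.camera, self.lighting,
                  self.background, self.style]
        return ", ".join(f.strip() for f in fields if f.strip())


# Sentiment words this article suggests leaving out; extend as needed.
VAGUE_WORDS = {"powerful", "inspiring"}


def vague_terms(prompt: str) -> list:
    """Return any flagged sentiment words found in the prompt, sorted."""
    words = {w.strip(".,!?\"'").lower() for w in prompt.split()}
    return sorted(words & VAGUE_WORDS)


brief = ShotBrief(
    subject="a person walking slowly toward the camera",
    lighting="soft natural light coming from the left",
    style="cinematic handheld footage",
)
prompt = brief.to_prompt()
print(prompt)
print(vague_terms("A powerful, inspiring product shot"))
```

Filling in the same fields for every shot is one way to keep a multi-clip campaign visually consistent, since each prompt then states lighting, camera, and style explicitly instead of leaving them to chance.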

Limits, quality issues, and safety notes

Veo has real strengths, but it comes with hard limits you should understand before building a workflow around it. Clip length is the most common friction point: most access points cap output at 8 seconds per generation, so longer scenes require planning your content in shorter segments and stitching the results together.

Short clip caps aren't a dealbreaker, but they do require you to storyboard your content in brief, self-contained shots from the start.
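To make that storyboarding concrete, here is a small Python sketch that splits a target ad length into equal shots that each fit under the cap. The `plan_segments` function is a hypothetical helper, the 8-second default simply mirrors the cap mentioned above, and it assumes your platform lets you choose a clip length; check the actual limit and options where you access Veo.

```python
import math

# Cap cited in this article; confirm the real limit on your platform.
MAX_CLIP_SECONDS = 8


def plan_segments(total_seconds, max_clip=MAX_CLIP_SECONDS):
    """Split a target duration into equal shots no longer than max_clip.

    Returns a list of shot lengths in seconds, rounded to two decimals.
    """
    if total_seconds <= 0 or max_clip <= 0:
        raise ValueError("durations must be positive")
    # Fewest shots that keeps every shot at or under the cap.
    count = math.ceil(total_seconds / max_clip)
    shot_length = round(total_seconds / count, 2)
    return [shot_length] * count


# A 30-second ad needs four shots of 7.5 seconds each.
for number, seconds in enumerate(plan_segments(30), start=1):
    print(f"Shot {number}: {seconds} s")
```

Equal-length shots are only a starting point. In practice you would adjust each shot to fit the beat it covers, as long as no single shot exceeds the cap.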

Quality issues to watch for

Even with strong prompts, Veo can struggle with complex hand and finger details in close-up shots. Subject consistency can also drift slightly across multi-clip sequences, which becomes visible when you cut clips together. For performance ad formats that rely on precise product close-ups or natural-looking human hands, plan to review every output before using it.

Text rendered directly inside a generated clip is also frequently distorted. Add on-screen text in post-production rather than prompting for it, and you'll avoid that issue entirely.

Safety restrictions and content policies

Google applies automated content filters at the generation level, so prompts referencing real people, recognizable brands, or restricted content categories will either fail or return a modified result. Every clip also carries a SynthID watermark embedded by Google, which is invisible to the naked eye but detectable by compatible tools.

Build your content plans around Google's published usage policies from the start, since there's no prompt-level workaround for the filters.

Wrap-up and what to do next

Google Veo is a capable video generation model that turns text and image inputs into polished clips, but your results depend on how well you understand its access points, prompt structure, and content limits. This article covered what Veo does, where to find it, how to use it step by step, and what to watch out for before you commit it to a production workflow.

Veo works best when it sits inside a larger workflow rather than operating as a standalone tool. If you want to generate a clip and immediately add voiceover, lip-sync it for another market, and export a finished ad in one place, try Starpop, which combines Veo with Sora, Kling, and ElevenLabs under a single subscription. That setup removes the friction of managing multiple tools and separate accounts across your content pipeline.