OpenAI Sora is a text-to-video AI model that turns written prompts into realistic video clips, and it's changed what's possible for anyone creating marketing content. Since its public launch, Sora has become one of the most talked-about tools in AI video generation, attracting everyone from indie creators to full-scale advertising teams.

But cutting through the hype to understand what Sora actually does, how it works under the hood, and how you can get your hands on it takes some digging. Pricing tiers, access restrictions, prompt limitations, and rapid feature updates make it a moving target. This guide breaks all of that down in plain terms so you can decide if Sora fits your workflow, and how to start using it if it does.

At Starpop, we give our users access to Sora alongside other frontier AI models like Google Veo, Kling, and ElevenLabs through a single platform built specifically for marketing content, so we spend a lot of time working with these tools and testing their limits. Below, you'll find everything we know about Sora: what it is, how it generates video, its current capabilities, and the exact steps to access it right now.

What OpenAI Sora is

OpenAI Sora is a generative AI model built specifically to create video from text prompts. You write a description, and Sora produces a video clip that matches your words, including camera movement, lighting conditions, subject behavior, and overall scene composition. OpenAI released Sora publicly in December 2024, bundling it with paid ChatGPT subscriptions rather than selling it as a separately priced product. Before that public release, the model existed only as a research preview that a limited group of artists, filmmakers, and red teamers could access to stress-test its outputs and report problems back to OpenAI.

The Technology Behind It

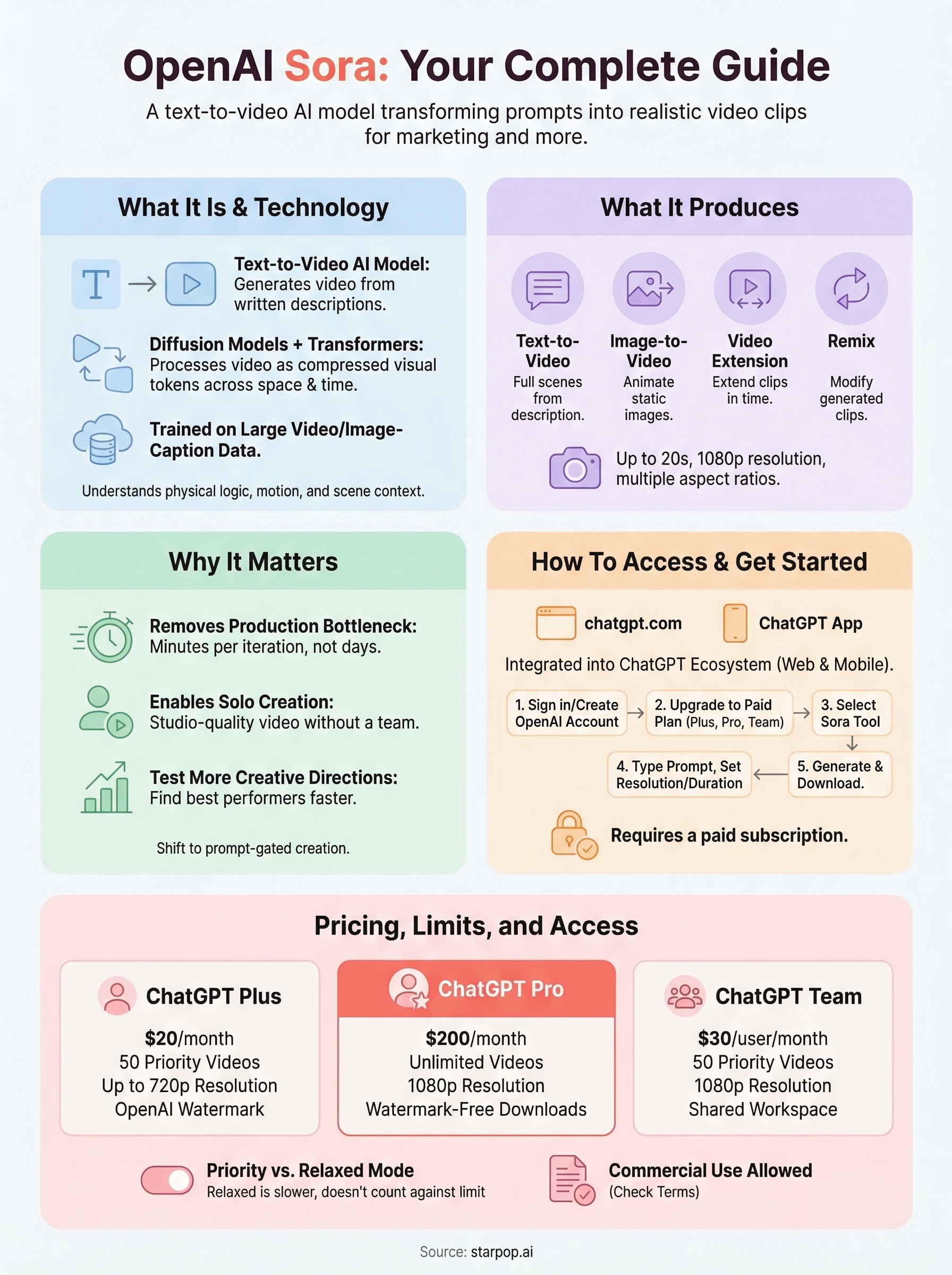

Sora is built on a combination of diffusion models and transformer architecture, which allows it to process video as compressed visual tokens spread across both space and time. This is conceptually similar to how large language models handle text, but applied to motion and visual data instead. The model trains on large volumes of video and image-caption pairs, learning to connect written descriptions with visual patterns that include motion, physics, texture, and realistic lighting behavior.

This architecture is what separates Sora from earlier text-to-video tools that struggled to keep subjects and environments visually consistent from one frame to the next.

Because Sora handles temporal relationships at the architecture level, it generates motion that follows physical logic more reliably than older frame-by-frame generation methods. When you prompt it with something like "a tracking shot of a woman walking through a busy market at dusk, handheld camera," it interprets the camera technique, models the handheld shake, and renders dusk lighting across the crowd with spatial consistency. That kind of scene-level understanding comes from the transformer component capturing long-range context across the full clip, rather than just processing neighboring frames in isolation. The result is video that holds together visually in a way that earlier models simply could not match.
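To make "compressed visual tokens spread across both space and time" more concrete, the sketch below counts how many spacetime patches a clip would break into. The patch sizes and the 24 fps frame rate are illustrative assumptions, not published Sora values, and the real model reportedly works on a compressed latent representation rather than raw pixels, so treat the number as an order-of-magnitude intuition only.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Clip:
    frames: int  # number of frames in the clip
    height: int  # frame height in pixels
    width: int   # frame width in pixels


def ceil_div(a: int, b: int) -> int:
    """Integer division rounded up, as if the clip were padded to fit."""
    return -(-a // b)


def count_spacetime_patches(clip: Clip, patch_t: int = 4,
                            patch_h: int = 16, patch_w: int = 16) -> int:
    """Count non-overlapping patches of patch_t frames x patch_h x patch_w pixels.

    The patch sizes are hypothetical defaults chosen for illustration.
    """
    return (ceil_div(clip.frames, patch_t)
            * ceil_div(clip.height, patch_h)
            * ceil_div(clip.width, patch_w))


# A 5-second 720p clip at an assumed 24 frames per second.
clip = Clip(frames=5 * 24, height=720, width=1280)
tokens = count_spacetime_patches(clip)
print(tokens)
```

Because each patch spans several frames, a single token already carries a short slice of motion, which is one way to picture why a transformer attending over all tokens can keep a scene consistent across the whole clip.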

What Sora Produces

OpenAI Sora currently generates videos up to 20 seconds long at resolutions up to 1080p, though exact output limits depend on your subscription tier. It supports multiple creation modes, so you don't always need to start from a blank text prompt. If you already have a product image or an existing scene, you can use that asset as a starting point and animate it forward with additional instructions.

Here's a breakdown of the main output types Sora supports:

- Text-to-video: Full scenes generated from a written description alone

- Image-to-video: A static image animated forward based on a behavior or motion prompt

- Video extension: An existing clip extended forward or backward in time

- Remix: Modifications applied to a generated clip using updated prompt instructions

Each mode gives you a different creative entry point depending on what assets you already have. Starting from an existing product image tends to be faster when you have brand photography on hand. Starting from a text prompt gives you complete control over every element of the scene from the ground up. Knowing which mode fits your specific situation will cut down your production time significantly and help you get usable outputs faster.

Why OpenAI Sora matters

The video production industry has been gatekept by budget for decades. Getting a professional 30-second ad produced meant hiring a director, a camera crew, actors, a location, and an editor. For most small businesses and independent creators, that cost put high-quality video out of reach. OpenAI Sora changes that equation directly by making studio-quality video generation accessible through a text prompt and a subscription.

It Removes the Production Bottleneck

Traditional video production is not just expensive; it is slow. A single shoot can take days to plan, hours to execute, and more hours to edit. Even simple UGC-style content requires coordination between brand, creator, and platform. Sora cuts that entire pipeline down to minutes per iteration, which means you can test more creative directions, find what works faster, and ship content at a pace that matches modern platform algorithms.

The shift from production-gated to prompt-gated content creation is one of the most significant structural changes in marketing video in years.

It Raises the Bar for What You Can Create Alone

Before models like Sora, generating a cinematic tracking shot or a complex environment required either a large budget or a highly skilled team. Now you can describe a scene with precise visual language and get a polished output without any filmmaking background. This matters especially if you're running a lean operation, managing multiple client accounts, or scaling content across several product lines at once.

Consider what this unlocks for performance marketers specifically:

- You can generate dozens of ad variations in a single session to find the best-performing creative

- You can test different visual hooks, settings, and subject styles before committing production budget to any single concept

- You can localize video content for different markets without reshooting anything

These are not marginal improvements. They represent a fundamental shift in how content teams operate, and Sora sits at the center of that shift as one of the most capable models available for video generation today.
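The variation testing described above can be organized with a few lines of code before you paste anything into Sora. This is a minimal sketch, assuming you track hooks, settings, and styles as plain lists; the function name and the prompt template are hypothetical and not part of any OpenAI tooling.

```python
from itertools import product


def build_variations(product_name, hooks, settings, styles):
    """Return one prompt per (hook, setting, style) combination."""
    return [
        f"{product_name}: {hook}, {setting}, {style}"
        for hook, setting, style in product(hooks, settings, styles)
    ]


hooks = ["close-up product reveal", "slow-motion pour", "unboxing on a desk"]
settings = ["in a sunlit kitchen", "on a city rooftop at night"]
styles = ["handheld UGC look", "cinematic tracking shot"]

variations = build_variations("cold brew bottle", hooks, settings, styles)
for prompt in variations:
    print(prompt)
```

Three hooks, two settings, and two styles yield twelve prompts, so it is worth checking the total against your plan's monthly generation limit before running the full grid.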

How Sora works and what it can create

When you submit a prompt to OpenAI Sora, the model doesn't build your video frame by frame the way older generation tools did. Instead, it works across a compressed representation of the entire clip, handling motion, depth, lighting, and scene continuity as interconnected elements rather than isolated images stitched together. That approach is what makes its outputs feel physically grounded, even in scenes that don't exist in the real world.

The Prompt-to-Video Pipeline

Your text prompt gets broken down into tokens that the model maps against patterns learned from massive volumes of video and image data. From there, Sora iteratively refines a noisy starting state into a coherent visual output, guided by your description at every step. The more specific and visual your language, the more control you have over the result. Prompts that include camera style, lighting conditions, subject behavior, and environment details consistently produce better outputs than vague one-line descriptions.

Writing prompts for Sora is closer to writing a shot list than a search query - the more production detail you include, the more the model has to work with.
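One way to keep prompts shot-list-shaped is to fill in a small template before writing the final sentence. The sketch below is an assumption about how you might structure that on your own side; the `ShotPrompt` class and its field order are hypothetical, and Sora itself simply receives the resulting text.

```python
from dataclasses import dataclass


@dataclass
class ShotPrompt:
    subject: str           # who or what is on screen, and what they do
    camera: str = ""       # camera style, e.g. "tracking shot, handheld camera"
    environment: str = ""  # where the scene takes place
    lighting: str = ""     # lighting conditions, e.g. "at dusk"

    def render(self) -> str:
        """Join the non-empty fields into a single prompt string."""
        parts = [self.camera, self.subject, self.environment, self.lighting]
        return ", ".join(p.strip() for p in parts if p.strip())


shot = ShotPrompt(
    subject="a woman walking through a busy market",
    camera="tracking shot, handheld camera",
    lighting="at dusk",
)
print(shot.render())
```

An empty field is a visible reminder that the prompt leaves that production detail for the model to decide.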

What Sora Actually Produces

Beyond standard text-to-video, OpenAI Sora supports several creation modes that make it genuinely useful across different workflow stages. You can animate a static product image, extend an existing clip in either direction, or apply a remix prompt to change the mood, setting, or style of something you already generated. For marketing use cases specifically, this means you can move from a product photo to a finished video ad without starting from scratch each time.

Here are the primary output modes available:

- Text-to-video: Full scene generation from a written description

- Image-to-video: Static image animated with a motion or behavior prompt

- Video extension: Clip lengthened forward or backward in time

- Remix: Style or content changes applied to a generated video

Each mode suits a different stage of content production, so knowing which one to reach for first will save you significant iteration time.
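The choice between modes can be written down as a simple decision rule. This is a hedged sketch: the four mode names come from the list above, but the priority order (remix first, then extension, then image-to-video, then text-to-video) is our own assumption, not an OpenAI recommendation.

```python
from enum import Enum


class Mode(Enum):
    TEXT_TO_VIDEO = "text-to-video"
    IMAGE_TO_VIDEO = "image-to-video"
    VIDEO_EXTENSION = "video extension"
    REMIX = "remix"


def choose_mode(has_generated_clip_to_restyle: bool = False,
                has_clip_to_lengthen: bool = False,
                has_still_image: bool = False) -> Mode:
    """Pick a starting mode from the assets already on hand (assumed ordering)."""
    if has_generated_clip_to_restyle:
        return Mode.REMIX
    if has_clip_to_lengthen:
        return Mode.VIDEO_EXTENSION
    if has_still_image:
        return Mode.IMAGE_TO_VIDEO
    return Mode.TEXT_TO_VIDEO


print(choose_mode(has_still_image=True).value)
```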

How to access Sora and the official app

OpenAI Sora is not a separate app you download from an app store. OpenAI built it directly into the ChatGPT ecosystem, which means your access path runs through your ChatGPT account rather than through any standalone product. This is an important distinction because many users search for a dedicated Sora application and end up on unofficial sites that have nothing to do with OpenAI.

Always go directly to openai.com, sora.com, or the official ChatGPT app to make sure you're using the real product and not a third-party imitation.

Where to Find Sora

You can reach OpenAI Sora through two official channels: sora.com in a web browser (which routes through OpenAI's platform) or the ChatGPT mobile app available on iOS and Android. On desktop, navigate to chatgpt.com, sign in with your OpenAI account, and look for the Sora option in the left sidebar or tool selector. On mobile, the same account credentials get you in through the ChatGPT app, where Sora appears as a dedicated video creation tool within the interface.

Steps to Get Started

Getting your first video generated takes only a few minutes once your account is set up. Knowing the sequence ahead of time saves you from hitting a subscription wall mid-session, which is a frustrating way to discover that free accounts don't include Sora access.

Here's the exact path to follow:

- Go to chatgpt.com and sign in or create a free OpenAI account

- Upgrade to a ChatGPT Plus, Pro, or Team subscription (Sora requires a paid plan)

- Select Sora from the tool menu in the left sidebar

- Type your prompt into the text field, then set your preferred resolution and video duration

- Click Generate and wait for your clip to render

Once your clip renders, you can download it directly, remix it with a new prompt, or extend it from the same interface. The whole workflow stays inside one browser tab with no external tools required.

Pricing, limits, and common questions

OpenAI Sora is available through three paid ChatGPT subscription tiers, each with different generation limits and output settings. Understanding which plan fits your volume before you subscribe will save you from hitting a wall during a busy production week.

Subscription Tiers and What They Include

The three plans that include Sora access are ChatGPT Plus, Pro, and Team. Plus gives you 50 priority videos per month at up to 720p resolution. Pro removes the monthly video cap and unlocks 1080p output with watermark-free downloads, making it the practical choice for anyone publishing content professionally. Team sits between the two and adds shared workspace features for collaborative environments.

| Plan | Monthly Price | Video Limit | Max Resolution |
|---|---|---|---|
| Plus | $20 | 50 priority videos | 720p |
| Pro | $200 | Unlimited | 1080p |
| Team | $30/user | 50 priority videos | 1080p |

If you're generating content for paid advertising, the watermark-free output on Pro is non-negotiable - Plus tier adds an OpenAI watermark to every clip.
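The plan comparison can be turned into a quick lookup. The sketch below copies the prices, limits, and resolutions from the table above and the watermark statements for Plus and Pro from the text. The article does not say whether Team output is watermark-free, so the code conservatively assumes it is not; it also assumes a single Team seat. Check OpenAI's current pricing before relying on any of these figures.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Plan:
    name: str
    monthly_usd: int
    priority_videos: Optional[int]  # None means no monthly cap is listed
    max_height: int                 # maximum vertical resolution, e.g. 720 for 720p
    watermark_free: bool


PLANS = [
    Plan("Plus", 20, 50, 720, False),
    Plan("Team", 30, 50, 1080, False),  # assumption: watermark status not stated
    Plan("Pro", 200, None, 1080, True),
]


def cheapest_plan(videos_per_month: int, min_height: int,
                  need_watermark_free: bool) -> Optional[Plan]:
    """Return the lowest-priced plan that meets all three requirements, or None."""
    candidates = [
        p for p in PLANS
        if (p.priority_videos is None or p.priority_videos >= videos_per_month)
        and p.max_height >= min_height
        and (p.watermark_free or not need_watermark_free)
    ]
    return min(candidates, key=lambda p: p.monthly_usd) if candidates else None


choice = cheapest_plan(videos_per_month=30, min_height=720, need_watermark_free=True)
print(choice.name if choice else "no plan fits")
```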

Common Questions About Limits and Usage

One of the most common points of confusion around OpenAI Sora is the difference between "priority" and "relaxed" generations. Priority generations render faster and pull from dedicated compute. Relaxed generations run during lower-traffic periods and take longer, but they don't count against your monthly priority limit on Plus. Switching to relaxed mode when you're not under deadline stretches your monthly allowance further.
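The priority-versus-relaxed trade-off is easy to plan on paper. Here is a minimal sketch, assuming you keep your own list of pending clips and know how many priority generations remain this month; the function and the job names are hypothetical, and Sora exposes this choice through its interface rather than through code like this.

```python
def assign_queues(jobs, priority_left):
    """Send urgent jobs to the priority queue until the allowance runs out.

    jobs: list of (name, urgent) tuples in the order you want them rendered.
    Returns the queue chosen for each job and the priority allowance left over.
    """
    plan = {}
    for name, urgent in jobs:
        if urgent and priority_left > 0:
            plan[name] = "priority"
            priority_left -= 1
        else:
            plan[name] = "relaxed"
    return plan, priority_left


jobs = [
    ("launch ad, due today", True),
    ("evergreen social clip", False),
    ("client revision, due today", True),
]
plan, remaining = assign_queues(jobs, priority_left=1)
print(plan, remaining)
```

With only one priority generation left, the second urgent job falls back to the relaxed queue, which is the situation to avoid by saving priority renders for real deadlines.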

Commercial use is another frequent question. OpenAI's current terms allow you to use Sora outputs in paid advertisements, as long as the content meets their usage policies. You keep ownership of what you generate, but OpenAI retains the right to use anonymized prompt and output data for model improvement. Review the full terms directly at openai.com before publishing anything to a client's paid campaign, since policy details can change with platform updates.

Next Steps

OpenAI Sora gives you a powerful starting point for AI video generation, but it works best when you understand exactly what it can and can't do. You now know how the model works, where to access it, which subscription tier fits your output volume, and what to expect from each creation mode. That knowledge puts you ahead of most people who jump in without reading the fine print and hit limits they didn't anticipate.

The next move is to put it into practice. Start with a specific use case, whether that's a product ad, a social clip, or a brand video, and test a handful of detailed prompts before scaling up. If you want to go further and combine Sora with other frontier AI models like Google Veo, Kling, and ElevenLabs inside a single workflow built for marketing content, try Starpop and see how much faster your production cycle gets.