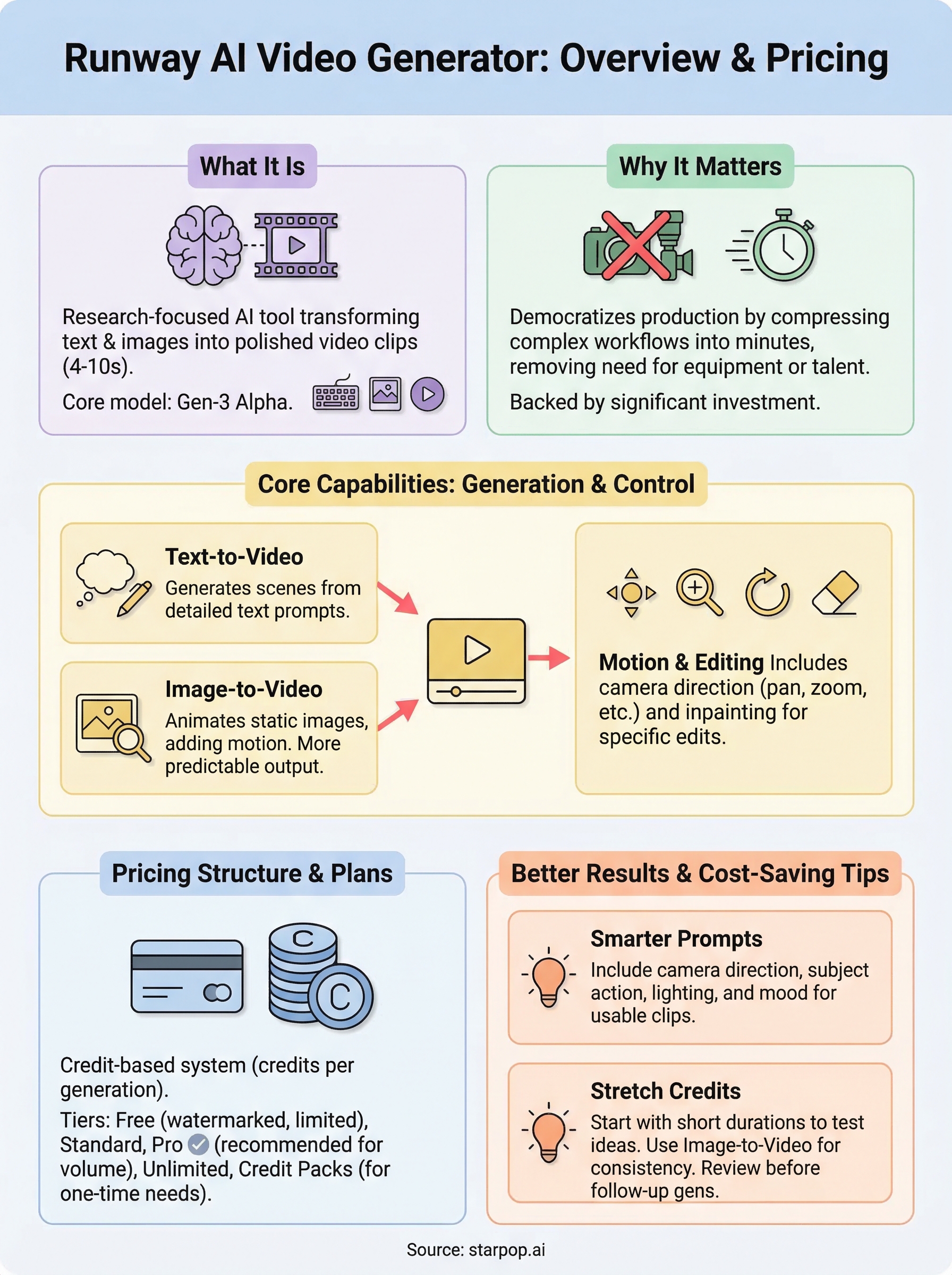

The Runway AI video generator has become one of the most talked-about tools for turning text prompts and still images into polished video clips. Whether you're a solo creator experimenting with AI for the first time or a marketing team exploring faster ways to produce ad creative, Runway (often referred to as Runway ML or RunwayML) offers a genuine reason to pay attention. Its Gen-3 Alpha model can generate footage that, even a year ago, would have required a full production setup to pull off.

But here's the part most overview articles skip: Runway is a research-focused platform, not a purpose-built marketing tool. That distinction matters if your goal is to create scroll-stopping ads, UGC-style videos, or localized content at scale. The features are impressive on their own, yet understanding where Runway fits, and where it doesn't, helps you make a smarter decision about which tools deserve your budget.

That's where context from platforms like Starpop becomes useful. Starpop bundles access to multiple frontier AI video models (including options like Sora, Veo, and Kling) inside a single workspace built specifically for performance marketing. So as you read through Runway's capabilities, pricing tiers, and free-use options below, you'll have a clear frame of reference for how it compares to an ad-focused AI workflow. Let's break it all down.

Why Runway matters for modern video creation

Runway launched in 2018 as an AI research company, but it became widely recognized in creative circles after releasing Gen-1, Gen-2, and eventually Gen-3 Alpha, each model producing noticeably better motion quality than the one before. The Runway AI video generator now sits at the center of a broader conversation about who gets to produce professional-looking video. That conversation matters because the answer has fundamentally changed.

How AI video shifted the production equation

Before tools like Runway existed, producing even a 15-second promotional clip required renting equipment, booking talent, and carving out editing time that could stretch across multiple days. Runway compressed that entire process into minutes. You type a prompt, upload a reference image if you have one, and the model renders a short clip without a camera operator or location scout in sight. For solo creators and lean marketing teams, that shift removed a real barrier.

The bigger change is not just speed. It is that small teams can now iterate on video creative the same way they iterate on ad copy or static visuals.

Runway also made this workflow accessible to people who have no background in video production, which expanded the pool of who can experiment with motion content in the first place.

Why the industry took it seriously

Runway raised $141 million in an extension of its Series C round in 2023 and has continued attracting investment since. That level of backing reflects real industry confidence rather than trend-chasing. Independent filmmakers and commercial studios have both used Runway footage in actual productions, which signals that the output quality clears a professional bar in targeted use cases. For agencies pitching AI-assisted creative to cautious clients, that production track record carries weight.

What Runway AI video generator can do

The Runway AI video generator gives you two primary input methods: text-to-video and image-to-video. You describe a scene in a prompt, or upload a still image, and the model builds motion around it. Most clips run between 4 and 10 seconds, which is short but often enough for ad intros, product reveals, or social content cutaways.

Text-to-video and image-to-video generation

With text-to-video, you write a detailed prompt and Gen-3 Alpha renders a scene from scratch. Image-to-video works differently: you supply a reference photo and Runway animates it, adding camera movement and life to something that was static. This second approach tends to produce more predictable results because the model has a visual anchor to work from.

Image-to-video is often the smarter starting point if you want consistent output rather than unpredictable generations.

Motion controls and editing tools

Beyond generation, Runway gives you camera controls such as pan, zoom, and rotation, letting you direct how the virtual camera moves through a scene. You also get access to built-in editing tools including inpainting, which lets you erase and replace specific regions of a frame without regenerating the entire clip from scratch.

Runway pricing and plans explained

Runway builds its pricing around a credit system: each video generation costs a set number of credits, depending on the model and clip duration you choose. Knowing this upfront prevents billing surprises at the end of the month.

Free tier access

The free plan gives you a starting credit allowance to test the Runway AI video generator without spending anything. However, watermarks appear on every export, and you will burn through the credits faster than you expect if you run more than a handful of generations.

Once your free credits run out, you either upgrade or stop generating, which makes the free tier useful for evaluation purposes but not for sustained production work.

Paid plan breakdown

Runway's Standard plan costs around $15 per month for 625 credits, while the Pro plan runs roughly $35 per month and unlocks 2,250 credits with higher-resolution exports and faster generation queues.

If you plan to produce video at any consistent volume, the Pro plan is the more practical starting point.

The Unlimited plan sits at approximately $95 per month and removes per-generation credit limits for heavy users. Enterprise teams can negotiate custom pricing directly with Runway for larger workloads.
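
To compare the two credit-based plans on equal terms, it can help to work out the effective price of a single credit. The sketch below uses only the approximate figures quoted above ($15 for 625 credits, $35 for 2,250 credits); the function and variable names are illustrative, and the actual rates may differ from what Runway currently charges.

```python
# Approximate monthly prices and credit allowances quoted in this article.
# These are the article's rounded figures, not values pulled from Runway.
PLANS = {
    "Standard": {"price_usd": 15, "credits": 625},
    "Pro": {"price_usd": 35, "credits": 2250},
}


def cost_per_credit(price_usd: float, credits: int) -> float:
    """Return the effective price of one credit in US dollars."""
    if credits <= 0:
        raise ValueError("credits must be positive")
    return price_usd / credits


for name, plan in PLANS.items():
    rate = cost_per_credit(plan["price_usd"], plan["credits"])
    print(f"{name}: ${rate:.4f} per credit")
# Standard: $0.0240 per credit
# Pro: $0.0156 per credit
```

On these numbers, a Pro credit costs roughly two-thirds of a Standard credit, which is the arithmetic behind recommending Pro for consistent volume.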

How to use Runway for free or low cost

Getting value from the Runway AI video generator without overspending comes down to how deliberately you use your credits. Before you generate a single clip, write out your prompt in full and review it carefully. Vague prompts waste credits on unusable outputs, while specific prompts tend to produce usable results in fewer attempts.

Stretch your free credits further

Your free credits go further when you treat each generation as a planned test rather than an exploratory draft. A few habits that help:

- Use image-to-video instead of text-to-video when you have a reference photo, since it produces more predictable output

- Start with shorter clip durations to preserve credits for testing multiple variations

- Download and review outputs before generating follow-up clips

One well-constructed prompt can save you three failed generations, which adds up quickly on a limited credit balance.

Consider credit packs over subscriptions

If your video production needs are occasional rather than ongoing, Runway lets you purchase one-time credit packs instead of committing to a monthly plan. This option suits freelancers or small teams running a single campaign rather than a continuous content schedule, and it keeps your costs tied directly to actual output.

How to get better results with Runway

Getting stronger output from the Runway AI video generator is less about luck and more about how you construct your inputs. The model responds well to specific, structured prompts that describe not just the subject but the lighting, camera angle, and movement you want to see in the final clip.

Write prompts with camera direction in mind

Most users describe only what should appear in the shot and skip the camera behavior entirely. Adding directional language, such as "slow push in" or "overhead tracking shot," gives the model a clearer instruction set and reduces the gap between what you picture and what renders.

Treating your prompt like a brief shot description rather than a caption produces noticeably more usable clips.

Here are the elements worth including in each prompt:

- Subject and action (what is happening and who or what is doing it)

- Lighting conditions (golden hour, studio lighting, overcast)

- Camera movement (static, pan left, zoom out)

- Mood or tone (cinematic, documentary, commercial)
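
One way to make sure none of the four elements above gets skipped is to assemble each prompt from named fields. The following is a minimal sketch, not a Runway feature: `ShotPrompt` is a hypothetical helper, and joining the parts with commas is an assumed convention rather than a documented prompt syntax. It only builds the text you would paste into the prompt box.

```python
from dataclasses import dataclass


@dataclass
class ShotPrompt:
    """Hypothetical container for the four prompt elements listed above."""

    subject_action: str
    lighting: str
    camera_movement: str
    mood: str

    def render(self) -> str:
        """Join the elements into one prompt string, rejecting empty fields."""
        parts = [
            self.subject_action,
            self.lighting,
            self.camera_movement,
            self.mood,
        ]
        cleaned = [part.strip() for part in parts]
        if not all(cleaned):
            raise ValueError("every prompt element must be filled in")
        return ", ".join(cleaned)


prompt = ShotPrompt(
    subject_action="A barista pours latte art into a ceramic cup",
    lighting="golden hour",
    camera_movement="slow push in",
    mood="cinematic",
)
print(prompt.render())
```

Because an empty field raises an error, a prompt that forgets the camera movement fails before it costs any credits.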

Test with short clips before scaling

Before you run a full-length generation, use a shorter clip duration to validate that your prompt produces the right look. This keeps your credit usage low during the testing phase and gives you a faster feedback loop before committing to longer, higher-cost outputs.

Final takeaways

The Runway AI video generator is a genuinely capable tool for producing short, cinematic clips from text prompts and reference images. Its credit system, free tier with watermarks, and tiered paid plans give you a clear entry point regardless of your budget. For experimental projects, one-time credit packs keep costs manageable. For consistent production work, the Pro or Unlimited plans make more financial sense.

That said, Runway is built around research and creative exploration, not performance marketing at scale. If your goal is to produce high-volume ad creative, test multiple UGC formats, or localize videos across languages, you will run into the platform's limits fairly quickly. A purpose-built workflow closes that gap.

Starpop gives you access to multiple frontier AI video models, including tools for talking-head ads, batch generation, and studio-grade lip-syncing, all inside one marketing-focused workspace designed to move faster than a single-model platform ever could.