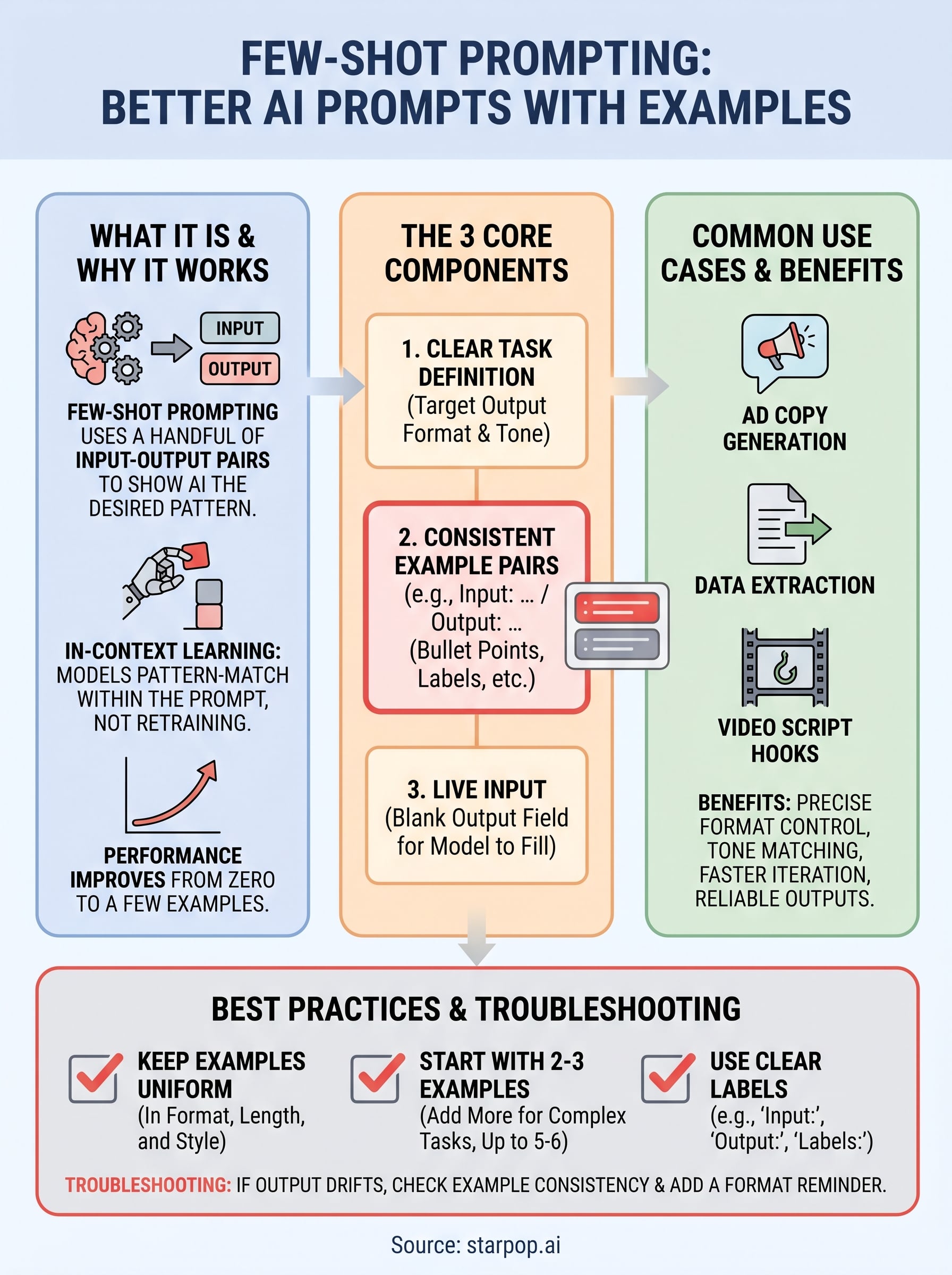

You give an AI model a prompt, and the output misses the mark. The format is wrong, the tone is off, or the response ignores half your instructions. Most of the time, the fix isn't a better model; it's a better prompt. Specifically, it's showing the model what you want through few-shot examples instead of just telling it. This technique feeds the AI a handful of input-output pairs before your actual request, so it can pattern-match its way to the right answer.

Few-shot prompting matters whether you're writing scripts, generating ad copy, or building workflows inside tools like Starpop, where AI models power everything from video generation to voice cloning. The more precise your prompts, the fewer rounds of iteration you burn through, and the faster you get to assets that actually convert.

This guide breaks down how few-shot prompting works, walks through ready-to-use templates across common use cases, and covers the best practices that separate sloppy prompts from reliable ones. By the end, you'll have a practical framework for getting consistent, formatted, on-target outputs from any major AI model you work with.

Why few-shot prompting works

Large language models don't follow rules the way traditional software does. Instead, they predict the most likely next token based on everything sitting in their context window. When you write a plain instruction like "write a product description," the model draws on its training data to guess what you want. That guess can go a dozen different directions, which is why outputs feel inconsistent. Few-shot prompting narrows that space by giving the model concrete patterns to match instead of abstract rules to interpret.

In-context learning: the mechanism behind the technique

The term "in-context learning" describes what happens when a model adjusts its behavior based on examples in the prompt rather than through retraining or fine-tuning. You're not changing the model's weights. You're giving it a temporary reference frame that shapes how it processes your request. This is fundamentally different from training a custom model, and it's what makes few-shot prompting practical: no data pipelines, no compute costs, no waiting. You structure your prompt correctly, and the model figures out the pattern.

The model isn't being retrained when you add examples. It's recognizing a pattern and extending it, which means your examples are doing the real instructing.

Research published alongside models like GPT-3 (OpenAI) and PaLM (Google) reports that performance on many benchmarks improves as you move from zero examples to one to a few. The gains tend to taper as you keep adding examples, but the jump from zero to even two or three is often substantial across many task types.

Why examples outperform written instructions alone

One of the clearest things you'll notice when you study few-shot prompts side by side is how strongly the format of your examples shapes the format of your output. If your examples use bullet points, the model tends to output bullet points. If they follow a label structure like "Input:" and "Output:", the model mirrors it. Tone, length, and structure all transfer from your examples to the response without you needing to describe any of them explicitly.

Writing format instructions in plain language is surprisingly hard to do precisely. Saying "keep responses concise" means different things to different models and different things depending on the topic. Showing a concise response removes that ambiguity entirely. The model sees the target length and matches it. The same logic applies to tone: a formal example produces a formal output, and a conversational example produces a conversational one. Your examples handle the instructing that written descriptions often fail to do on their own.

The role of consistency across your example set

Your examples don't just teach format individually. They establish a consistent pattern across the full set, and the model treats that consistency as a signal of what's expected. If three out of four examples follow one structure but one outlier breaks the pattern, the model may blend the competing patterns rather than lock onto the dominant one. Keeping your examples uniform in format, length, and style isn't just tidy organization; it's a direct input to output quality. Inconsistent examples produce inconsistent results, and that's one of the most common failure modes people hit when they first try this technique.
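One way to catch an outlier before it reaches the model is a quick structural check over your example outputs. The sketch below is only one possible definition of "structure" (line count, field labels, and bullet use), and `output_shape` and `find_outliers` are hypothetical helper names rather than part of any library.

```python
import re
from collections import Counter

# Matches field labels such as "Hook:" or "Shipping time:" (assumed label style).
LABEL_PATTERN = re.compile(r"([A-Z][A-Za-z]*(?: [a-z]+)*):")


def output_shape(text: str) -> tuple:
    """Reduce an example output to a rough structural signature."""
    lines = [ln.strip() for ln in text.strip().splitlines() if ln.strip()]
    labels = tuple(LABEL_PATTERN.findall(text))
    bulleted = bool(lines) and all(ln.startswith(("-", "*")) for ln in lines)
    return (len(lines), labels, bulleted)


def find_outliers(outputs: list[str]) -> list[int]:
    """Return the indexes of outputs whose shape differs from the most common shape."""
    if not outputs:
        return []
    shapes = [output_shape(o) for o in outputs]
    dominant, _count = Counter(shapes).most_common(1)[0]
    return [i for i, shape in enumerate(shapes) if shape != dominant]
```

Any index this returns points at an example worth rewriting or removing. The check is deliberately crude: it will not notice differences in tone or length, so it complements a manual read rather than replacing it.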

How to write a few-shot prompt step by step

Building a few-shot prompt is straightforward once you treat it as a structured process rather than guesswork. Every effective prompt has three moving parts: a clear task definition, a set of example input-output pairs, and a live input for the model to respond to. Getting those parts right, and in the right order, is what separates prompts that work consistently from ones that produce unpredictable results.

Step 1: Define your task and target output format

Before you write a single example, decide exactly what output you want and what it should look like. Ask yourself what format the response should follow, how long it should be, and what tone it needs to carry. Locking this down first means your examples can model the answer rather than accidentally teaching the model something you'll need to correct later. A one-sentence target description in your own words helps: "I want a three-line product hook in a direct, confident tone."

Step 2: Write your example pairs

Your examples are the core of any working few-shot prompt. Write at least two and ideally three to five input-output pairs that reflect real tasks you want the model to handle. Each pair should follow the same structure, whether that's a simple "Input: / Output:" label format or a more conversational pattern. Consistency across all pairs signals the pattern to the model, so resist the urge to vary length or structure between examples even slightly.

Your examples teach by demonstration, so every detail in them, including punctuation and spacing, becomes part of what the model learns to replicate.

Step 3: Order your examples and add the live input

Put your strongest and most representative example first so it sets the pattern. Example order can influence the output, so if results skew toward one example, try reordering the set. Follow the first example with any that cover edge cases in the input, keeping the output format identical across all of them. After the last example, add your live input using the exact same label or structure you used in your examples, but leave the output field blank. The model fills it in by extending the pattern you established, which is the whole mechanism at work.
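The three steps can be sketched as a small helper that assembles the task definition, the example pairs, and the live input into one prompt string. This is a minimal illustration, not a standard API: the function name `build_few_shot_prompt` and its parameters are assumptions made for this example.

```python
def build_few_shot_prompt(
    instruction: str,
    examples: list[tuple[str, str]],
    live_input: str,
    input_label: str = "Input",
    output_label: str = "Output",
) -> str:
    """Assemble instruction, example pairs, and a live input with a blank output field."""
    if len(examples) < 2:
        raise ValueError("use at least two example pairs")

    blocks = []
    if instruction.strip():
        blocks.append(instruction.strip())

    # Every pair uses the same labels so the pattern stays uniform.
    for example_input, example_output in examples:
        blocks.append(f"{input_label}: {example_input}\n{output_label}: {example_output}")

    # The live input reuses the labels and leaves the output empty for the model to fill.
    blocks.append(f"{input_label}: {live_input}\n{output_label}:")
    return "\n\n".join(blocks)
```

Because the labels are parameters, the same helper works for any pair format as long as every example and the live input share it.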

Few-shot prompting templates you can copy

The fastest way to apply few-shot prompting in your own work is to start with a tested template and swap in your actual content. Each template below uses a consistent "Input: / Output:" label structure that works across major models including GPT-4, Claude, and Gemini. Adjust the number of example pairs up or down depending on task complexity, but always include at least two before you add the live input.

Template 1: Tone and style matching

Use this template when you need the model to match a specific brand voice without writing out a detailed style guide. Two or three examples written in the voice you want are enough for the model to replicate it on demand across new inputs.

System: You are a copywriter. Match the tone and style of the examples below.

Input: Describe our new espresso machine.

Output: Small counter space, bold flavor. This machine pulls a proper shot in under 30 seconds with no learning curve.

Input: Describe our travel backpack.

Output: 40 liters, carry-on approved, and built to take a beating. Pack everything, check nothing.

Input: [Your product description task here]

Output:
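If you send this template through a chat-style API instead of pasting it as one block of text, a common approach is to express each pair as a user turn followed by an assistant turn. The sketch below assumes the widely used `role`/`content` message shape; the exact schema varies by provider, and `to_chat_messages` is a hypothetical helper, so check your provider's documentation before relying on it.

```python
def to_chat_messages(
    system: str,
    examples: list[tuple[str, str]],
    live_input: str,
) -> list[dict[str, str]]:
    """Turn a system line, example pairs, and a live input into role-tagged messages."""
    messages = [{"role": "system", "content": system}]
    for example_input, example_output in examples:
        # Each example becomes a user turn answered by an assistant turn.
        messages.append({"role": "user", "content": example_input})
        messages.append({"role": "assistant", "content": example_output})
    # The live input is the final user turn; the model's reply fills the "Output".
    messages.append({"role": "user", "content": live_input})
    return messages


messages = to_chat_messages(
    "You are a copywriter. Match the tone and style of the examples below.",
    [
        ("Describe our new espresso machine.",
         "Small counter space, bold flavor. This machine pulls a proper shot "
         "in under 30 seconds with no learning curve."),
        ("Describe our travel backpack.",
         "40 liters, carry-on approved, and built to take a beating. "
         "Pack everything, check nothing."),
    ],
    "Describe our insulated water bottle.",  # illustrative live input
)
```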

Template 2: Structured classification

This template works well for sorting inputs into fixed categories reliably, whether you're tagging customer feedback, labeling ad creative formats, or organizing product descriptions.

Adding a label key at the top of your classification template reduces misclassification, especially when category names could overlap in meaning.

Including a brief label definition anchors the model's understanding before it sees any examples. That small addition makes the output more consistent across varied or ambiguous inputs.

Labels: Positive, Negative, Neutral

Input: "Shipping was faster than expected."

Output: Positive

Input: "The color looked different from the photos."

Output: Negative

Input: "Package arrived on Tuesday."

Output: Neutral

Input: [Your text here]

Output:

Few-shot prompting examples for common tasks

These few-shot prompting examples show how the technique applies across real marketing and content tasks. Each one follows the same labeled structure from the templates above, so you can plug them directly into any model you're working with. The goal is to show you what the pattern looks like in practice, not just in the abstract.

Writing ad copy in a specific format

Ad copy is one of the highest-value places to use few-shot prompting because format matters as much as the words themselves. You need a specific structure like a hook, benefit line, and call to action, and writing that out in plain instructions rarely produces it reliably. Two well-chosen examples lock in the structure faster than a paragraph of directions ever could.

Input: Sell a portable blender to gym-goers.

Output: Hook: Your post-workout shake shouldn't wait. Benefit: Blends 20oz in 30 seconds, fits your gym bag. CTA: Grab yours before your next session.

Input: Sell a blue light filter to remote workers.

Output: Hook: Eight hours of screen time leaves a mark. Benefit: Cuts blue light by 90% without color distortion. CTA: Protect your eyes starting tonight.

Input: [Your product and audience here]

Output:

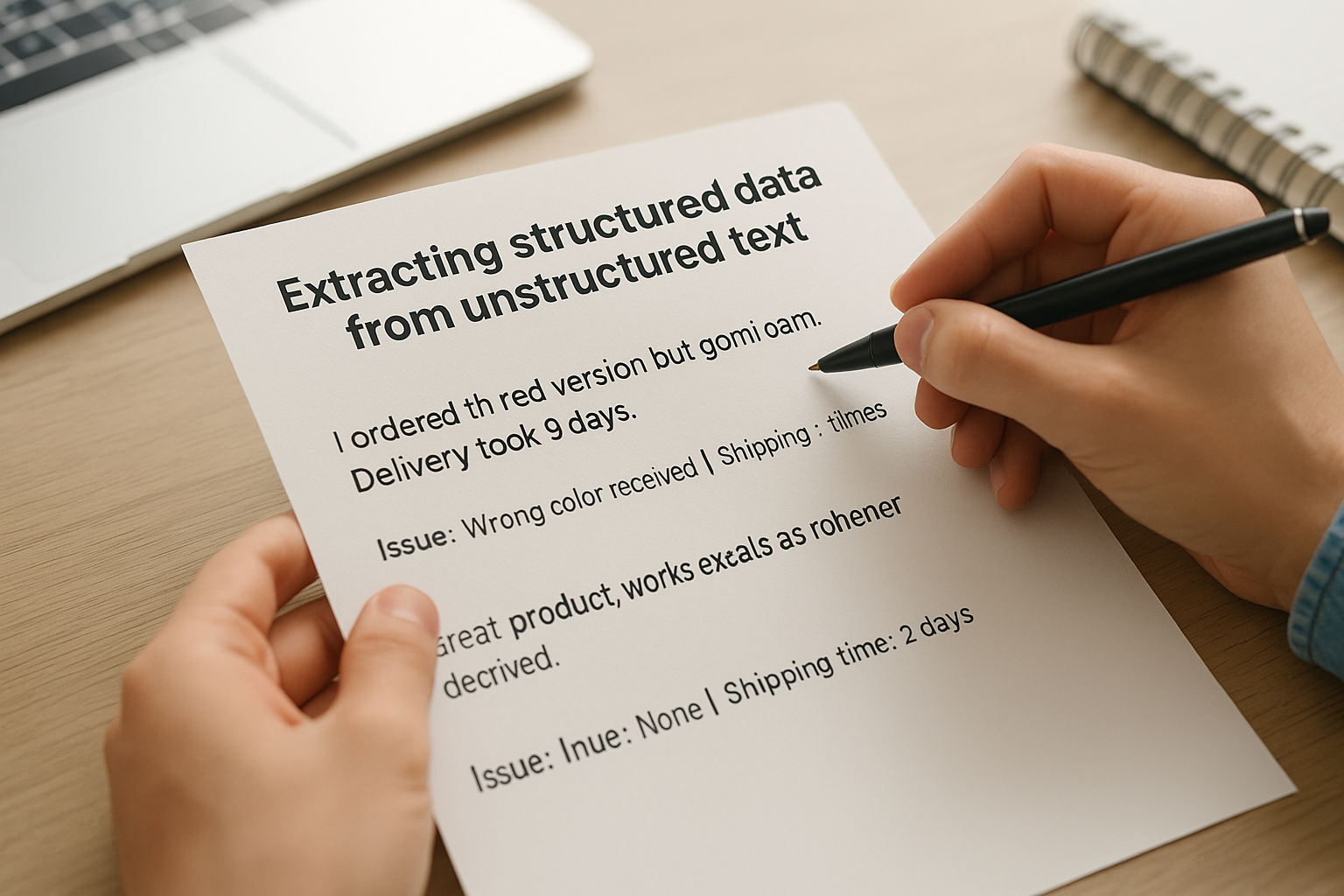

Extracting structured data from unstructured text

Pulling specific fields out of messy text is a task most marketers hit when working with customer reviews, form responses, or scraped content. A few examples teach the model exactly which fields to extract and how to format them without you needing to write a parsing script.

The cleaner your example outputs are, the more reliably the model replicates the structure across varied and ambiguous inputs.

Input: "I ordered the red version but got blue. Delivery took 9 days."

Output: Issue: Wrong color received | Shipping time: 9 days

Input: "Great product, works exactly as described. Arrived in two days."

Output: Issue: None | Shipping time: 2 days

Input: [Your review or response text here]

Output:
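Once the model returns output in this pipe-separated shape, turning it into structured data takes only a few lines. The sketch below assumes the exact `Field: value | Field: value` format used in the examples above; `parse_fields` is a hypothetical helper name.

```python
def parse_fields(output: str) -> dict[str, str]:
    """Split 'Field: value | Field: value' text into a dictionary."""
    fields = {}
    for part in output.split("|"):
        name, separator, value = part.partition(":")
        if not separator:
            # The model drifted from the format; fail loudly instead of guessing.
            raise ValueError(f"expected 'Field: value' but got {part.strip()!r}")
        fields[name.strip()] = value.strip()
    return fields


record = parse_fields("Issue: Wrong color received | Shipping time: 9 days")
# record == {"Issue": "Wrong color received", "Shipping time": "9 days"}
```

Raising an error on malformed output is a design choice: it surfaces format drift immediately rather than letting half-parsed records slip into a spreadsheet.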

Writing video script hooks

Short-form video hooks need a specific rhythm: one sharp line that creates tension or curiosity in under three seconds. Showing the model two or three strong hooks teaches it the pacing and sentence-level structure better than any written definition of "engaging" could.

Input: Product: posture corrector. Audience: office workers.

Output: You've been sitting wrong for years, and your back already knows it.

Input: Product: budget meal prep container. Audience: college students.

Output: You're spending $300 a month on food you don't even enjoy.

Input: [Your product and audience here]

Output:

Troubleshooting and best practices

Even well-structured few-shot prompts break down in predictable ways. Knowing where they fail and why lets you fix problems in minutes rather than rebuilding your prompt from scratch. Most issues trace back to one of three causes: inconsistent examples, too few examples for a complex task, or a mismatch between what your examples demonstrate and what your live input actually asks for.

When your outputs drift from the expected format

Format drift happens when the model stops following the structure your examples establish, usually after several turns in a conversation or when the live input looks significantly different from your examples. The fix is almost always to check your example consistency first before assuming the model is at fault. If any example in your set uses a different label, length, or punctuation pattern, remove or standardize it. After that, add a direct format reminder in a short system instruction at the top of the prompt, something like "Always follow the exact format shown in the examples below." That combination handles the majority of drift cases.

If your examples don't all look identical in structure, the model will treat the variation as intentional and average its output across the patterns rather than picking one.
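Drift can also be detected automatically when your outputs use field labels. The sketch below compares the label sequence in a model reply against a reference example and prepends the format reminder when they differ. The regular expression is an assumption about label style (a capitalized word, optionally followed by lowercase words, then a colon), and both function names are hypothetical.

```python
import re

FORMAT_REMINDER = "Always follow the exact format shown in the examples below."

# Assumed label style: "Hook:", "CTA:", "Shipping time:".
LABEL_PATTERN = re.compile(r"([A-Z][A-Za-z]*(?: [a-z]+)*):")


def has_drifted(example_output: str, model_output: str) -> bool:
    """True when the reply's field labels differ from the reference example's."""
    return LABEL_PATTERN.findall(model_output) != LABEL_PATTERN.findall(example_output)


def with_format_reminder(prompt: str) -> str:
    """Prepend the format reminder once, leaving already-reminded prompts unchanged."""
    if prompt.startswith(FORMAT_REMINDER):
        return prompt
    return f"{FORMAT_REMINDER}\n\n{prompt}"
```

A typical loop would call `has_drifted` on each reply and, on a failure, retry with `with_format_reminder(prompt)`. Note the limitation: a value that itself contains a capitalized word followed by a colon would be misread as a label.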

Picking the right number of examples

More examples are not always better. Two to three examples cover most straightforward tasks like tone matching, classification, or short-form copy. Complex tasks with multiple output fields or conditional logic benefit from four to six examples that cover distinct input scenarios. Going past six rarely improves results and eats into the context window you may need for a longer live input. Start with two and add one example at a time, testing after each addition, rather than building a large set upfront.
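The "start with two and add one at a time" routine can be sketched as a loop that stops as soon as an extra example no longer helps. How you score a prompt is up to you, so `score_with` is a hypothetical callback you would supply (for instance, one that runs the prompt on a few test inputs and returns the fraction of correctly formatted replies); nothing here calls a real model.

```python
from typing import Callable, Sequence

Pair = tuple[str, str]


def pick_example_count(
    examples: Sequence[Pair],
    score_with: Callable[[Sequence[Pair]], float],
    start: int = 2,
    max_examples: int = 6,
) -> int:
    """Grow the example set one pair at a time; stop when the score stops improving."""
    if len(examples) < start:
        raise ValueError(f"need at least {start} examples to begin")

    best_count = start
    best_score = score_with(examples[:start])

    for count in range(start + 1, min(len(examples), max_examples) + 1):
        score = score_with(examples[:count])
        if score <= best_score:
            break  # the extra example did not help, so keep the smaller set
        best_count, best_score = count, score

    return best_count
```

The default cap of six mirrors the guidance above. Stopping at the first non-improvement keeps the prompt short, at the cost of missing a later example that might have helped.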

Keeping your prompts maintainable

Prompts that work today need to keep working as your tasks evolve. Store your example sets in a shared document or workspace organized by task type so your team can update examples without rebuilding the full prompt. When you update a product line or shift brand voice, revise your examples first and treat them as living reference material rather than a one-time setup. That habit pays off at scale when you're running high-volume content workflows across multiple campaigns.
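One lightweight way to organize example sets by task type is a JSON document that maps each task name to its pairs, so examples can be edited without touching prompt-building code. The layout and the `load_examples` helper below are assumptions for illustration; in practice the JSON would live in a shared file rather than an inline string.

```python
import json

# Example store keyed by task type; the pairs are the video hook examples from this guide.
EXAMPLE_STORE = """
{
  "video_hook": [
    {"input": "Product: posture corrector. Audience: office workers.",
     "output": "You've been sitting wrong for years, and your back already knows it."},
    {"input": "Product: budget meal prep container. Audience: college students.",
     "output": "You're spending $300 a month on food you don't even enjoy."}
  ]
}
"""


def load_examples(store_json: str, task: str) -> list[tuple[str, str]]:
    """Return the (input, output) pairs stored for one task type."""
    store = json.loads(store_json)
    if task not in store:
        raise KeyError(f"no example set stored for task {task!r}")
    return [(item["input"], item["output"]) for item in store[task]]


hook_examples = load_examples(EXAMPLE_STORE, "video_hook")
```

When brand voice shifts, the team edits the stored pairs and every prompt built from them picks up the change.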

Next steps

You now have everything you need to build consistent, reliable prompts using the few-shot examples and templates in this guide. The core skill is simple: show the model what you want instead of describing it in the abstract, keep your examples consistent, and adjust the number of pairs based on how complex your task is. Applying this framework across ad copy, classification, data extraction, and script writing can cut your iteration time and give you more predictable outputs from the models you use.

Start by picking one task you repeat often and build a two-example prompt for it today. Test it, refine the examples based on what drifts, and save the final version for reuse. If you create high-volume marketing content across video, images, and audio, explore Starpop's AI content creation platform to see how sharper prompts translate directly into faster, better creative output at scale.