
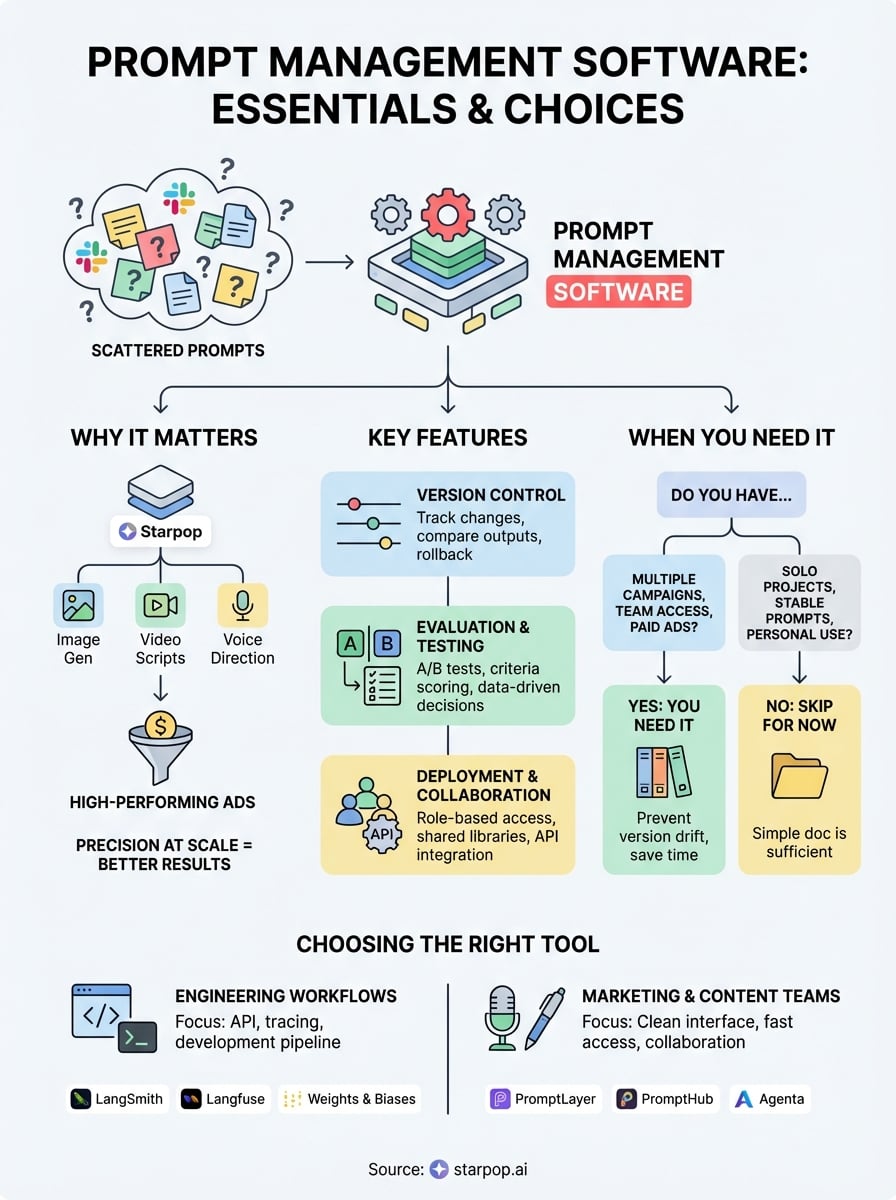

Every AI-powered workflow starts with a prompt. Once you move past casual experimentation into production (running ads, generating video scripts, localizing content across markets), those prompts multiply fast. You end up with dozens of variations scattered across documents, Slack threads, and sticky notes. Prompt management software solves this by giving teams a structured way to store, version, test, and share prompts across projects and models.

This matters especially if you're working with platforms like Starpop, where a single campaign might involve image generation prompts, video scene descriptions, voice direction, and localization instructions running through multiple AI models simultaneously. The difference between a high-performing ad and a forgettable one often comes down to prompt precision, and you can't maintain that precision at scale without a proper system to manage it.

So which tools actually help, and which ones just add another login to your stack? This guide breaks down the best prompt management software available right now, compares their core features, and walks you through what to look for based on your team size, use case, and budget. Whether you're a solo creator testing ad variations or an agency managing prompts across dozens of client campaigns, you'll find a clear path to picking the right tool.

What prompt management software does

A prompt is just text until it hits a model. But behind that text sits a set of decisions: which version of a phrase worked best, which model it was tuned for, who approved it, and whether it still performs the way it did last month. Prompt management software handles all of that infrastructure so you can focus on creating, not hunting through folders for the right file. Think of it as version control, testing lab, and team workspace rolled into one system built specifically around AI prompts.

Storing and organizing prompts at scale

When you first start using AI tools, a simple document works fine. You write a prompt, it works, you move on. But the moment you're running multiple campaigns across different models, that document becomes a liability. You lose track of which prompt was for which product, which variation outperformed the others, and what context was attached to each one. The folder system breaks down fast when you're generating video scripts, image descriptions, and voice direction simultaneously.

Prompt management tools solve this by giving every prompt a defined home in a structured, searchable library. Each entry gets a name, tags, metadata, and a full history. You can filter by project, model, date, or team member. If you're using a platform like Starpop to generate video assets for five different product categories, you can store each category's prompts separately, label them by creative format, and pull the right one instantly when a new campaign kicks off. Nothing falls through the cracks.
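
To make the "name, tags, metadata, and filters" idea concrete, here is a minimal sketch of a prompt library as plain Python data. The `PromptEntry` fields and the `find_prompts` helper are hypothetical and only illustrate the structure; real platforms expose their own schemas and search interfaces.

```python
from dataclasses import dataclass, field
from datetime import date


@dataclass
class PromptEntry:
    """One entry in a hypothetical prompt library (field names are illustrative)."""
    name: str
    text: str
    project: str
    model: str
    owner: str
    created: date
    tags: set = field(default_factory=set)


def find_prompts(library, project=None, model=None, tag=None, owner=None, created_after=None):
    """Return the entries that match every filter that was supplied."""
    matches = []
    for entry in library:
        if project is not None and entry.project != project:
            continue
        if model is not None and entry.model != model:
            continue
        if tag is not None and tag not in entry.tags:
            continue
        if owner is not None and entry.owner != owner:
            continue
        if created_after is not None and entry.created <= created_after:
            continue
        matches.append(entry)
    return matches
```

Calling `find_prompts(library, project="skincare", tag="video-script")` returns only the skincare video-script prompts, which is the "pull the right one instantly" step in code form.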

Versioning and testing across models

One of the most practical things prompt management software does is track changes over time. When you adjust a prompt, the system saves the previous version automatically. That means you can compare outputs side by side, roll back to an earlier version when something breaks, and identify exactly what changed between a prompt that underperformed and one that drove real results.
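
The save, compare, and roll back cycle can be sketched in a few lines. This is an illustrative model, not any vendor's API: `VersionedPrompt` is a hypothetical class that appends every save, uses the standard library's `difflib.unified_diff` to show what changed, and implements rollback by re-saving an old version so the history is never rewritten.

```python
import difflib
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class PromptVersion:
    number: int
    text: str
    author: str
    saved_at: datetime


class VersionedPrompt:
    """Keeps every saved revision of one prompt; nothing is overwritten."""

    def __init__(self, name):
        self.name = name
        self.versions = []

    def save(self, text, author, saved_at=None):
        version = PromptVersion(
            number=len(self.versions) + 1,
            text=text,
            author=author,
            saved_at=saved_at or datetime.now(timezone.utc),
        )
        self.versions.append(version)
        return version

    def get(self, number):
        if not 1 <= number <= len(self.versions):
            raise KeyError(f"{self.name} has no version {number}")
        return self.versions[number - 1]

    @property
    def current(self):
        return self.versions[-1]

    def diff(self, old, new):
        """Line-by-line unified diff between two version numbers."""
        return "\n".join(difflib.unified_diff(
            self.get(old).text.splitlines(),
            self.get(new).text.splitlines(),
            fromfile=f"{self.name}@v{old}",
            tofile=f"{self.name}@v{new}",
            lineterm="",
        ))

    def rollback(self, number, author):
        """Restore an old version by saving its text again as the newest version."""
        return self.save(self.get(number).text, author)
```

Rolling back by appending, rather than deleting later versions, keeps the full record of what was tried, which is what makes the comparison between an underperforming prompt and a successful one possible later.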

Versioning turns prompt iteration from guesswork into a repeatable process you can actually learn from.

Testing is the other half of this equation. Most platforms let you run A/B evaluations directly inside the tool, where you send two prompt variants to the same model or the same prompt to multiple models and compare the outputs. This matters especially when you're optimizing creative assets for paid performance, where small wording differences in a video script or product description can shift conversion rates in a measurable way. You are not guessing what works; you are running controlled comparisons.
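
Both comparison modes described above can be sketched as a small harness. The model is passed in as any callable that takes a prompt string and returns text; `fake_model` is a hypothetical stand-in so the sketch runs without an API key, and the templates use Python's `str.format` placeholders as an assumption about how variables are filled.

```python
def run_ab(variant_a, variant_b, inputs, model):
    """Fill both prompt variants with the same inputs and send them to one model.

    variant_a / variant_b: templates using str.format placeholders.
    inputs: list of dicts supplying the placeholder values.
    model: any callable that takes a prompt string and returns output text.
    """
    rows = []
    for values in inputs:
        rows.append({
            "input": values,
            "a": model(variant_a.format(**values)),
            "b": model(variant_b.format(**values)),
        })
    return rows


def run_across_models(template, inputs, models):
    """Send one prompt to several models; models maps a label to a callable."""
    return {
        label: [model(template.format(**values)) for values in inputs]
        for label, model in models.items()
    }


# Stand-in for a real model call, so the sketch runs without any API key.
def fake_model(prompt):
    return f"[{len(prompt.split())} words] {prompt}"
```

Holding the inputs fixed is the point of the design: any difference between the `a` and `b` columns comes from the prompt wording, not from a different product or brief.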

Beyond A/B testing, you can define evaluation criteria ahead of time, whether that is tone consistency, factual accuracy, output length, or a custom scoring rubric. The platform then grades each output against those criteria so your team makes decisions based on structured data rather than personal preference.
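
A scoring rubric defined ahead of time might look like the sketch below. The criteria are hypothetical rule-based checks; qualities like tone consistency or factual accuracy usually need a human reviewer or a grading model, so treat these simple checks as placeholders for whatever graders your platform supports.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Criterion:
    name: str
    weight: float
    check: Callable[[str], bool]  # returns True if the output passes


def score(output, rubric):
    """Weighted share of criteria the output passes, from 0.0 to 1.0."""
    total = sum(c.weight for c in rubric)
    passed = sum(c.weight for c in rubric if c.check(output))
    return passed / total


# Illustrative rubric for short ad copy about a skincare serum.
rubric = [
    Criterion("under 40 words", 2.0, lambda text: len(text.split()) <= 40),
    Criterion("mentions the product", 1.0, lambda text: "serum" in text.lower()),
    Criterion("no banned claims", 1.0, lambda text: "guaranteed" not in text.lower()),
]
```

Because the rubric exists before any output is generated, every variant is graded against the same weights, for example `score("Guaranteed results in one week.", rubric)` evaluates to 0.5.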

Deploying and collaborating across teams

At a certain scale, prompts stop being a personal tool and become a shared organizational asset. A performance marketer, a copywriter, and a video producer might all need access to the same base prompt but with different levels of permission. Some team members should edit; others should only read or run.

Role-based access controls and shared libraries handle this problem at the platform level. You can organize prompts by client or campaign, restrict who can modify each one, and leave inline comments for teammates reviewing creative direction. When a prompt gets updated, everyone working with it automatically pulls the latest version instead of passing files back and forth over email or Slack.
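
The edit, run, and read split can be modeled as a role-to-permission table. The roles, user names, and library names below are hypothetical; managed platforms configure this through their own admin settings rather than code.

```python
from enum import Enum


class Role(Enum):
    VIEWER = "viewer"  # can read prompts
    RUNNER = "runner"  # can read and run prompts
    EDITOR = "editor"  # can read, run, and edit prompts


PERMISSIONS = {
    Role.VIEWER: {"read"},
    Role.RUNNER: {"read", "run"},
    Role.EDITOR: {"read", "run", "edit"},
}

# Hypothetical per-library role assignments: (user, library) -> role.
ASSIGNMENTS = {
    ("ana", "client-acme"): Role.EDITOR,
    ("ben", "client-acme"): Role.RUNNER,
    ("cara", "client-acme"): Role.VIEWER,
}


def can(user, library, action):
    """True if the user's role in this library allows the action."""
    role = ASSIGNMENTS.get((user, library))
    return role is not None and action in PERMISSIONS[role]
```

Scoping assignments per library (here, per client) means a copywriter can edit one client's prompts while only reading another's, and anyone without an assignment gets no access by default.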

Many platforms also expose your stored prompts through an API connection, which means the prompt logic lives inside the management tool but gets called programmatically by your application or automation workflow. This keeps your creative infrastructure centralized and auditable even when your production system is firing thousands of model calls per day.

When you need it and when you do not

Not every team needs a dedicated tool for this. If you are writing one or two prompts a week for personal use, a shared document gets the job done without adding complexity. Prompt management software earns its place when your volume grows, your team expands, or the cost of a broken prompt becomes high enough to hurt a real campaign with real budget behind it.

Signs you actually need it

When your prompt library exceeds what a single document can organize clearly, you are ready for a dedicated tool. That threshold looks different for everyone, but a useful signal is this: if you spend more than a few minutes searching for a prompt you know you wrote, the system is already failing you. Other clear triggers include running paid ad campaigns where prompt quality directly affects spend efficiency, collaborating with more than two people on AI-generated content, or deploying outputs across multiple models where you need to track which version worked on which model.

If a broken prompt costs you real money or real time to diagnose, you need more structure than a shared doc can offer.

Teams working with multi-model workflows, like generating a video script in one model and then feeding scene descriptions into another, face version drift fast. When one team member updates a prompt without telling anyone and the campaign output suddenly shifts in tone or quality, you can lose hours tracking down the cause. A management tool does not stop people from editing, but it keeps a full change log that records who edited what and when, so the diagnosis takes seconds instead of a morning.

Signs you can skip it for now

You do not need a dedicated prompt management platform if you are working solo on a single project with a small, stable set of prompts that rarely change. Early-stage creators testing AI tools for the first time will find that most platforms add friction before they add value at that stage. A well-organized folder structure with clearly named files covers most needs when your output volume stays low and consistent.

The practical rule is this: add infrastructure when the pain of not having it becomes measurable. If you are losing time, missing prompt versions, or watching teammates overwrite each other's work, that pain is your signal to act. Until then, keep it simple and invest your attention in learning what makes your prompts produce reliable output rather than in managing the tools around them.

Features that matter: versioning, eval, deployment

Not every feature that prompt management platforms advertise actually changes how your team works. Three capabilities consistently separate tools worth paying for from ones that just add noise to your workflow: version control, structured evaluation, and deployment flexibility. Getting clear on each one before you commit saves you from a costly switch six months in.

Version control for prompts

Every meaningful prompt evolves. You tweak the wording, adjust the tone, swap a model, and suddenly the output shifts. Without version history, you have no way to trace what changed or why performance dropped. Good version control gives every saved prompt a timestamped record of each edit, along with who made it, so you can roll back instantly when something breaks without spending hours reconstructing what the previous version said.

Version control also creates a natural audit trail for team accountability. When a campaign underperforms, you can check the exact prompt version that was active during that run, compare it against previous iterations, and identify the change that caused the drop. That kind of precision turns troubleshooting from guesswork into a structured process.
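
Finding "the exact prompt version that was active during that run" is a lookup against a sorted publish log. This sketch assumes a hypothetical log of `(published_at, version_number)` pairs and uses the standard library's `bisect_right` to find the latest version published at or before the run started.

```python
from bisect import bisect_right
from datetime import datetime


def active_version(history, run_started):
    """Return the version number that was live when a run started.

    history: list of (published_at, version_number), sorted by published_at.
    Returns None if the run started before the first version was published.
    """
    times = [published_at for published_at, _ in history]
    index = bisect_right(times, run_started)
    if index == 0:
        return None
    return history[index - 1][1]


# Illustrative publish log for one prompt.
publish_log = [
    (datetime(2024, 5, 1, 9, 0), 1),
    (datetime(2024, 5, 8, 9, 0), 2),
    (datetime(2024, 5, 15, 9, 0), 3),
]
```

With this log, a campaign run that started on May 10 maps to version 2, which you can then diff against version 1 to see what changed before the drop.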

Evaluation and testing

Running structured evaluations inside the tool is what separates prompt management from simple storage. You want a platform that lets you test variants head to head against the same input and score them using criteria you define, whether that is tone, length, factual accuracy, or output format. This makes your creative decisions data-driven instead of based on gut feel.

When your evaluation criteria are set in advance, you eliminate the bias of judging outputs based on which one you wrote.

Scoring and logging results over time helps you build a clear record of what works for specific models and tasks. If a particular phrasing consistently scores higher for video scripts than for static ad copy, that pattern becomes visible in your evaluation data and informs how you build prompts going forward.
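
Surfacing that kind of pattern comes down to grouping logged scores by phrasing and task. The record shape below (`phrasing`, `task`, `score` on a 1 to 5 scale) is an assumption for illustration; most platforms let you export evaluation logs in a similar tabular form.

```python
from collections import defaultdict
from statistics import mean


def summarize(results):
    """Average score for each (phrasing, task) pair across all logged runs."""
    grouped = defaultdict(list)
    for row in results:
        grouped[(row["phrasing"], row["task"])].append(row["score"])
    return {key: mean(scores) for key, scores in grouped.items()}


def best_phrasing(summary, task):
    """Phrasing with the highest average score for one task (task must appear in summary)."""
    candidates = {phrasing: avg for (phrasing, t), avg in summary.items() if t == task}
    return max(candidates, key=candidates.get)
```

Grouping by task as well as phrasing is what reveals cases where the same wording wins for video scripts but loses for static ad copy.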

Deployment and integration

The best tools connect directly to your production workflows through an API rather than requiring manual copy-paste steps. Your stored, tested, and approved prompt gets called programmatically at runtime, keeping the logic centralized while your application fires thousands of model calls. You avoid version drift caused by someone pulling an outdated prompt from a personal document.

Look for platforms that support direct API access and webhook triggers, so your prompt library becomes a live part of your infrastructure rather than a reference document that teams pull from inconsistently.
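
On the application side, runtime resolution often looks like the sketch below: code asks for a prompt by name and deployment label, and a short cache avoids one network request per model call. `PromptClient`, the `"production"` label, and the injected `fetch` function are hypothetical; in practice `fetch` would wrap whatever API your platform provides, and a webhook handler could call `invalidate` when a prompt is approved.

```python
import time


class PromptClient:
    """Resolves prompts by name and deployment label at runtime, with a short cache.

    fetch: callable (name, label) -> prompt text. In production this would wrap an
    HTTP call to your prompt platform; here it is injected so the sketch is testable.
    """

    def __init__(self, fetch, ttl_seconds=60.0, clock=time.monotonic):
        self._fetch = fetch
        self._ttl = ttl_seconds
        self._clock = clock
        self._cache = {}  # (name, label) -> (fetched_at, text)

    def get(self, name, label="production"):
        key = (name, label)
        now = self._clock()
        cached = self._cache.get(key)
        if cached is not None and now - cached[0] < self._ttl:
            return cached[1]
        text = self._fetch(name, label)
        self._cache[key] = (now, text)
        return text

    def invalidate(self, name, label="production"):
        """Drop a cached prompt, e.g. when a webhook reports a new approved version."""
        self._cache.pop((name, label), None)
```

The TTL is the trade-off to tune: a longer cache means fewer requests to the prompt service, while a shorter one (or webhook-driven invalidation) means an approved update reaches production sooner.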

Best prompt management tools compared

The prompt management software market splits cleanly into two camps: tools built for developers shipping LLM applications and tools built for marketers and creative teams generating high-volume content. Knowing which camp your workflow sits in cuts your evaluation time significantly and prevents you from paying for infrastructure you will never use.

Tools built for engineering workflows

If your team builds and deploys LLM-powered applications, you want a tool that integrates deeply with your development pipeline and handles prompt versioning through an API call. LangSmith, built by the LangChain team, gives developers full tracing, evaluation, and prompt versioning inside one platform. Langfuse is an open-source alternative that covers similar ground and works well for teams that want to self-host their infrastructure for compliance reasons. Weights & Biases includes a prompt management layer alongside its broader model monitoring suite, making it a strong option if you already track experiments there.

These tools prioritize technical depth over ease of use, so non-technical teammates will hit friction fast if they need to access or edit prompts regularly.

Tools built for content and marketing teams

For creative and marketing teams, the priorities flip. You want clean organization, fast access, and collaboration features that do not require a developer to set up. PromptLayer focuses on logging and versioning prompts across model calls and works well for teams iterating on ad copy or video scripts at volume. PromptHub takes a more document-like approach, letting teams build a shared, searchable library with annotation and commenting built in, which suits agencies managing prompts across multiple client accounts.

Agenta sits in a middle position. It handles both the technical evaluation side and the collaborative editing experience well enough that marketers and developers can work inside the same environment without one group overwhelming the other's needs.

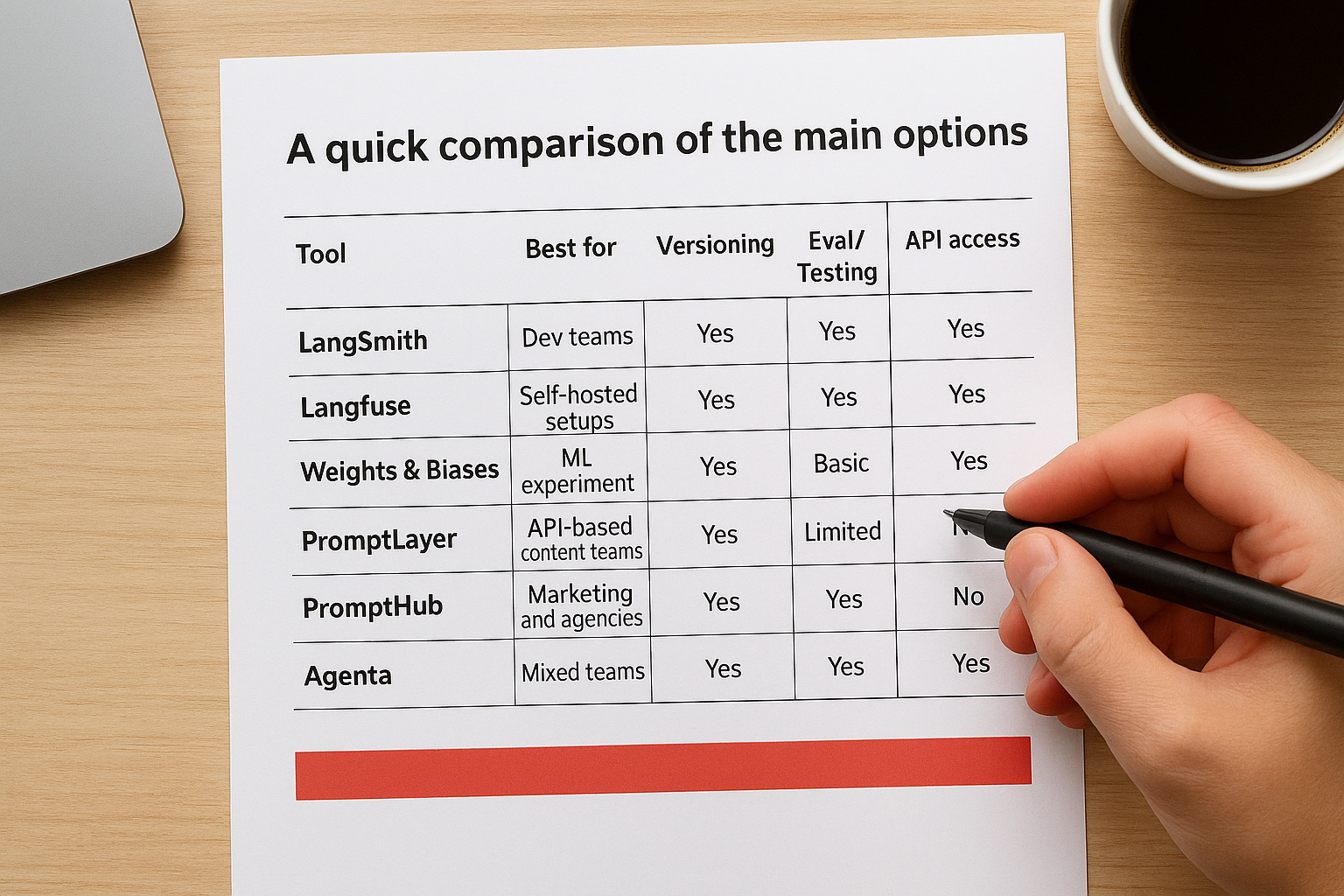

A quick comparison of the main options

The table below lays out the key differences so you can match each tool to your actual situation:

| Tool | Best for | Versioning | Eval/Testing | API access |
|---|---|---|---|---|
| LangSmith | Dev teams | Yes | Yes | Yes |
| Langfuse | Self-hosted setups | Yes | Yes | Yes |
| Weights & Biases | ML experiment tracking | Yes | Yes | Yes |
| PromptLayer | API-based content teams | Yes | Basic | Yes |
| PromptHub | Marketing and agencies | Yes | Limited | No |
| Agenta | Mixed teams | Yes | Yes | Yes |

None of these tools handles everything perfectly. Your choice comes down to whether your primary bottleneck is technical deployment or creative collaboration, and which platform your team will actually open every day once the setup is done.

How to choose and roll out a tool

Picking the right prompt management software comes down to two questions: who will actually use it, and what breaks in your current workflow without it. Every tool on the market solves a real problem, but not every tool solves your specific problem. Starting from your team's daily friction, rather than from feature marketing, puts you in a much better position to make the right call.

Match the tool to your team type

If your team is primarily technical, writing code that calls AI models programmatically, prioritize tools that offer deep API integration and tracing capabilities. LangSmith and Langfuse both connect directly to your development pipeline and log every model call, which gives your engineers the observability they need without forcing them to work outside their existing setup.

For marketing and content teams generating high volumes of creative assets, the right choice shifts toward tools with clean interfaces, easy commenting, and organized libraries. Your team members need to open the tool and find what they need in under a minute. If the interface requires developer training to navigate, adoption tends to drop off within a few weeks, regardless of how powerful the backend is.

A tool that nobody uses consistently is worse than no tool at all.

Roll it out without killing adoption

Start with a focused pilot rather than a full migration. Pick one active campaign or project, move all related prompts into the new tool, and run that single workflow through it for two to three weeks. This lets you identify friction points before they affect your entire operation and gives your team a clear, bounded scope to learn the system without feeling overwhelmed.

Once the pilot runs cleanly, migrate your most-used prompt libraries first and retire the old documents in stages. If your team relies on shared folders or documents today, keep those accessible during the transition period so no one scrambles for a prompt mid-campaign while the new system is still unfamiliar. Set a hard cutoff date for when the old system stops being updated, and communicate that date early so the team treats the new tool as the real source of truth.

Assign one person to own the library structure during rollout: naming conventions, folder organization, and tagging rules. Without that ownership, libraries fragment quickly and you end up recreating the same disorganization you were trying to escape in the first place.
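
One way the library owner can make naming and tagging rules stick is a small validation check run before prompts are saved or imported. The convention here (`client/campaign/format/slug`, lowercase and hyphenated) and the allowed tag list are hypothetical examples; substitute whatever rules your team agrees on.

```python
import re

# Hypothetical convention: client/campaign/format/slug, lowercase and hyphenated.
NAME_PATTERN = re.compile(r"[a-z0-9-]+/[a-z0-9-]+/(?:video|image|voice|copy)/[a-z0-9-]+")
ALLOWED_TAGS = {"hook", "cta", "localized", "draft", "approved"}


def validate(name, tags):
    """Return a list of problems; an empty list means the entry follows the rules."""
    problems = []
    if not NAME_PATTERN.fullmatch(name):
        problems.append(f"name {name!r} does not match client/campaign/format/slug")
    unknown = sorted(set(tags) - ALLOWED_TAGS)
    if unknown:
        problems.append(f"unknown tags: {', '.join(unknown)}")
    return problems
```

Returning a list of problems instead of raising on the first one lets the owner report every issue in a batch migration at once, which matters most in the early weeks of a rollout.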

Final takeaways

Prompt management software pays off the moment your prompts stop being a personal habit and start being a shared production asset. If you are running campaigns across multiple models, working with a team, or spending real budget on AI-generated creative, the cost of disorganized prompts shows up fast in wasted time and inconsistent output. The right tool gives you version history to trace what changed, structured evaluation to confirm what works, and a shared library that keeps everyone pulling from the same source of truth.

Your next step does not have to be a full migration. Pick one active project, move its prompts into a tool that fits your team type, and run it through a focused pilot. Build from there once the workflow clicks. If your work involves high-volume AI video and image creation for marketing campaigns, explore what Starpop can do for your creative production alongside the prompt system you put in place.