
Every AI-generated image, video, or voiceover starts with the same thing: an instruction. The quality of that instruction determines whether the output is usable or a waste of time. What is prompt engineering? It's the skill of writing those instructions so that AI models consistently produce the results you actually need. For anyone creating marketing content at scale (ads, UGC videos, product visuals), it's quickly becoming a non-negotiable skill to develop.

A well-crafted prompt can mean the difference between a generic stock-looking image and a scroll-stopping ad creative that matches your brand. A bad one burns through credits and produces assets nobody would run. This is something we see daily at Starpop, where users generate videos, images, and audio across multiple AI models from a single platform. The people who get the best results fastest are almost always the ones who understand how to prompt effectively.

This guide breaks down prompt engineering from the ground up: what it means, how it works, the core techniques behind it, and real examples you can start using right away. Whether you're generating your first AI image or trying to improve consistency across hundreds of ad variations, you'll walk away with a clear framework for writing prompts that perform.

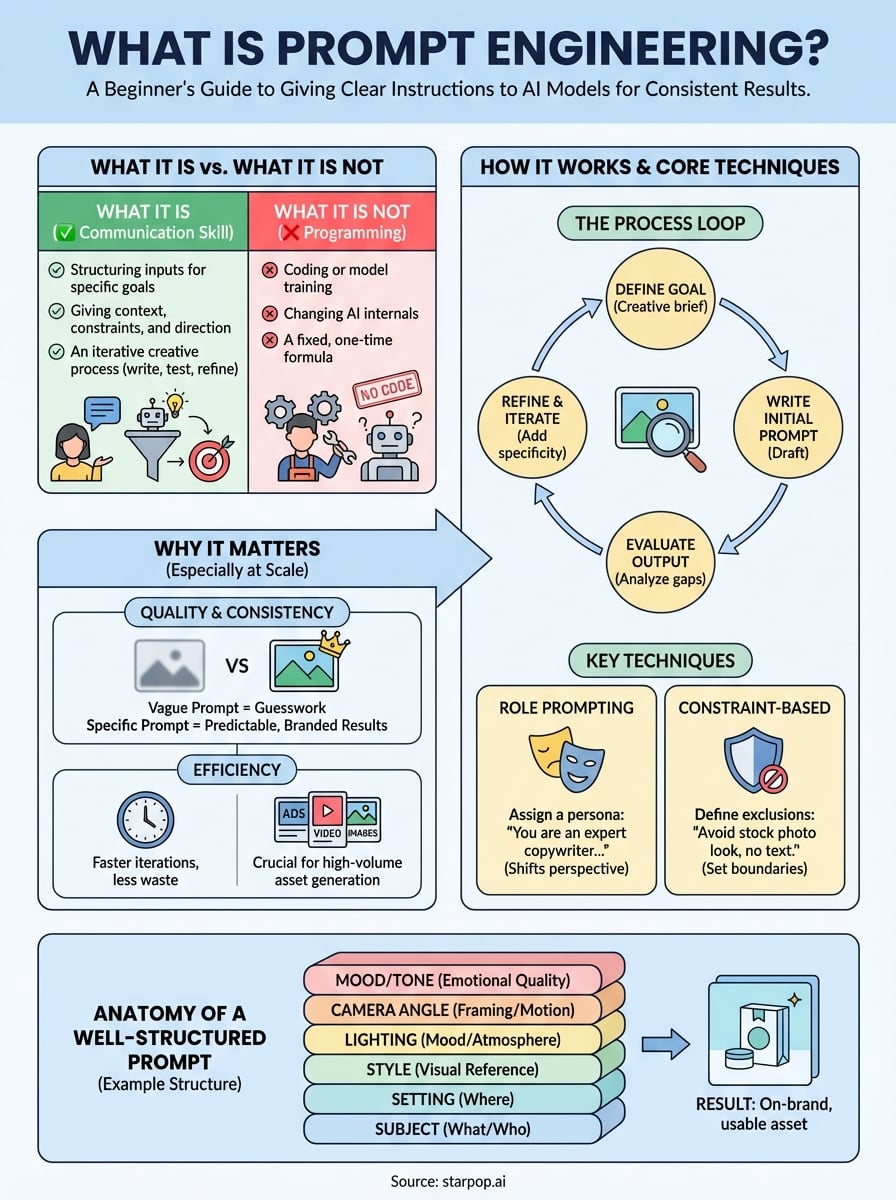

What prompt engineering is and is not

When people ask what prompt engineering is, they often expect a technical answer involving code or machine learning. The reality is simpler. Prompt engineering is the practice of structuring your inputs to an AI model in a way that reliably produces useful, high-quality outputs. It requires no programming background and no access to the model's internals. It only requires a clear understanding of what you want and the language to communicate that precisely.

What it actually is

At its core, prompt engineering is a communication skill applied to AI systems. You are giving a model context, constraints, and direction so it can generate what you need rather than guessing. A prompt can include the format you want, the tone, the subject, the style reference, and any restrictions. The more specific and structured your instructions are, the more predictable and repeatable your results become.

The difference between a vague prompt and a well-engineered one is the difference between asking a contractor to "build something nice" and handing them a detailed blueprint.

Think of it as writing a creative brief. If you were hiring a human designer, you would tell them the brand, the use case, the visual style, and the restrictions. You do the same thing with AI. Good prompts eliminate ambiguity and reduce the gap between what you imagined and what the model produces. This matters enormously when you are generating large volumes of ad assets and consistency is the goal.

What it is not

Prompt engineering is not a form of programming or model training. You are not changing how the AI works, writing code, or adjusting anything inside the system. Many beginners assume they need a technical background to do this well, but the skill is fundamentally about clarity and specificity in language, not software development. If you can write a clear instruction, you can engineer a prompt.

It is also not a fixed formula you memorize once and apply forever. Models change, platforms update their capabilities, and the same prompt can produce different results across different tools. Prompt engineering is an iterative process: you write, test, evaluate the output, and refine based on what you get back. Treating it as a one-time setup rather than an ongoing creative practice is one of the most common mistakes new users make.

Finally, prompt engineering is not the same as prompt hacking or trying to bypass a model's safety guidelines. Those are entirely separate concerns. What you are doing here is purposeful and constructive: learning to work with a model's strengths to produce outputs that serve a real business or creative goal. The focus is always on better results, not on circumventing the system.

Why prompt engineering matters for gen AI results

Defining prompt engineering is one thing; understanding why it directly impacts your results is another. Generative AI models are probabilistic: they produce output based on what is statistically likely given your input and their training data. If your input is vague, the model fills the gaps with its own assumptions, and those assumptions rarely match what you had in mind. The quality of your prompt is the single biggest variable you control, which makes it the most important lever you have for getting better outputs.

The output gap between vague and specific prompts

Every model, whether it generates video, images, or copy, responds to the specificity of your instructions. A prompt like "show a woman drinking coffee" can produce hundreds of different results depending on lighting, setting, mood, and style. A prompt that specifies a warm-lit kitchen, morning light through a window, candid handheld camera style, and a 30-something woman in casual clothing narrows that range dramatically. The gap between those two prompts is not a technical difference; it is a communication difference.

The more clearly you define the output, the less creative liberty the model takes, and the more useful and predictable your results become.

Why this becomes critical at scale

When you are generating a single image, a mediocre prompt costs you a few seconds and a retry. When you are producing 20 ad variations, multiple video formats, and localized versions across different markets, a weak prompting approach multiplies those inefficiencies fast. Brands and agencies using AI content tools at volume cannot afford to iterate blindly on every asset. You need prompts that produce reliable outputs on the first or second attempt, not the seventh.

Consistent prompt quality also protects your brand's visual identity. Random outputs across a campaign create fragmented creative that dilutes recognition. A structured prompt engineering approach keeps your assets visually coherent, tonally consistent, and aligned with the audience you are targeting, regardless of how many assets you generate.

How prompt engineering works in practice

Understanding prompt engineering conceptually is useful, but watching it work in an actual workflow makes it click. In practice, prompt engineering follows a predictable cycle: you define your goal, write an initial prompt, evaluate what the model returns, and refine until the output matches your intent. Every step in that cycle depends on your ability to translate a visual or creative idea into specific, unambiguous language.

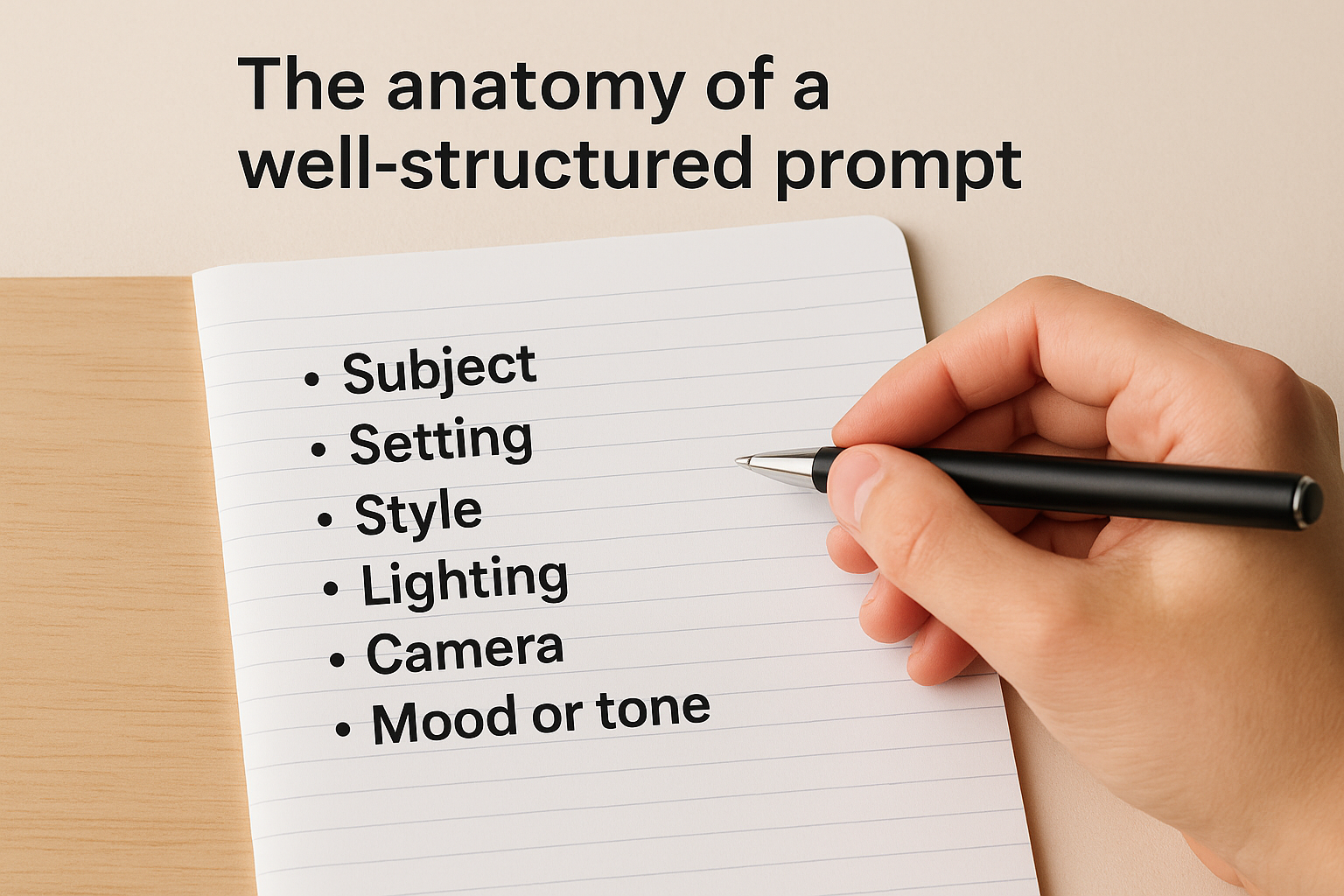

The anatomy of a well-structured prompt

A strong prompt is not a single sentence thrown at a model. It is a layered instruction that covers the key variables a model needs to reduce guesswork. For image and video generation, those layers typically include the subject, setting, style, camera angle, lighting, and mood. For copy or voiceover scripts, they include tone, audience, format, and length. Leaving any of these blank hands the decision back to the model.

The more variables you define upfront, the fewer surprises you get in the output.

Here is how those layers look in a practical structure:

- Subject: What or who is in the scene

- Setting: Where the scene takes place and the environment around the subject

- Style: Visual references, aesthetic direction, or genre (cinematic, candid, flat lay)

- Lighting: Natural, studio, golden hour, soft diffused

- Camera: Angle, distance, motion (overhead shot, close-up, slow push-in)

- Mood or tone: The emotional quality you want the output to carry
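None of this requires code, but if your team assembles prompts in a script, the six layers map naturally onto a small data structure. The minimal Python sketch below is illustrative only: `ScenePrompt` is a hypothetical name, not part of any platform's API, and the result is just a prompt string you would paste into whatever tool you use. It also reports which layers you left blank, since each blank layer is a decision handed back to the model.

```python
from dataclasses import dataclass, fields


@dataclass
class ScenePrompt:
    """Hypothetical helper: one field per prompt layer; an empty string means 'not specified'."""
    subject: str = ""
    setting: str = ""
    style: str = ""
    lighting: str = ""
    camera: str = ""
    mood: str = ""

    def missing_layers(self) -> list[str]:
        # Every blank layer is left for the model to guess.
        return [f.name for f in fields(self) if not getattr(self, f.name).strip()]

    def to_prompt(self) -> str:
        # Join only the filled-in layers, each labeled so the prompt stays readable.
        parts = []
        for f in fields(self):
            value = getattr(self, f.name).strip()
            if value:
                parts.append(f"{f.name.capitalize()}: {value}")
        return ". ".join(parts) + "." if parts else ""


prompt = ScenePrompt(
    subject="30-something woman in casual clothing drinking coffee",
    setting="warm-lit kitchen",
    style="candid",
    lighting="morning light through a window",
    camera="handheld",
)
print(prompt.to_prompt())
print("Unspecified:", prompt.missing_layers())  # Unspecified: ['mood']
```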

The iteration loop

Writing a prompt once and expecting a perfect result is unrealistic. Most experienced users treat the first output as a rough draft and use it as a reference point for tightening their instructions. You look at what the model emphasized, what it missed, and where it defaulted to generic choices, then you add specificity to address each gap.

Each iteration sharpens your prompt vocabulary and builds a personal library of language that works reliably for your specific use cases.
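One lightweight way to build that library is to log every version of a prompt next to a short note on what the output got wrong. The Python sketch below assumes nothing beyond the standard library; `PromptHistory` and its methods are hypothetical names for illustration, and the evaluation notes are yours to write after looking at each output.

```python
from dataclasses import dataclass, field


@dataclass
class PromptHistory:
    """Hypothetical log: each entry pairs a prompt you ran with your note on its output."""
    use_case: str
    versions: list[tuple[str, str]] = field(default_factory=list)

    def record(self, prompt: str, note: str = "") -> None:
        # Append the prompt and what you observed (what the model missed or defaulted to).
        self.versions.append((prompt, note))

    def latest(self) -> str:
        # The most recent prompt is your current best draft.
        return self.versions[-1][0] if self.versions else ""


history = PromptHistory(use_case="coffee ad still")
history.record("woman drinking coffee", "generic stock look, random setting")
history.record(
    "woman drinking coffee in a warm-lit kitchen, morning window light",
    "setting fixed; camera still looks too polished",
)
history.record(
    "woman drinking coffee in a warm-lit kitchen, morning window light, "
    "candid handheld camera style",
    "usable",
)
print(len(history.versions), "iterations; current prompt:", history.latest())
```

Over time, the notes show which phrases reliably fixed which problems for a given use case.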

Prompt engineering techniques you can use today

Once you understand prompt engineering at a conceptual level, the next step is applying specific techniques that produce noticeable improvements. These are not abstract theories; they are practical methods you can apply to your next prompt. Each one reduces ambiguity and increases the model's ability to produce exactly what you need, without extra retry cycles.

Role prompting

Role prompting means instructing the model to respond from a specific perspective before it generates anything. You open the prompt by assigning a role: "You are a direct-response copywriter specializing in e-commerce ads." This single addition shifts the model's default behavior toward outputs that match a professional standard rather than a generic one.

The role you assign acts as a filter for every decision the model makes throughout the rest of the prompt.

Combining a clear role with your actual instruction removes much of the stylistic guesswork from the model's output. The model applies that lens consistently, which is especially useful when you need copy, scripts, or descriptions that carry a specific voice across multiple assets without manual cleanup afterward.
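If you generate copy for several assets at once, keeping the role in one place guarantees every prompt opens with the same perspective. This is a minimal Python sketch, not any tool's API: `with_role` is a hypothetical helper, and the water bottle tasks are placeholder examples.

```python
def with_role(role: str, instruction: str) -> str:
    """Hypothetical helper: put the role line first so the model adopts it before the task."""
    return f"You are {role}.\n\n{instruction}"


# One shared role keeps the voice consistent across every asset.
ROLE = "a direct-response copywriter specializing in e-commerce ads"

tasks = [
    "Write three headline options for a reusable water bottle.",
    "Write a 15-second voiceover script for the same water bottle.",
]

for prompt in (with_role(ROLE, task) for task in tasks):
    print(prompt, end="\n---\n")
```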

Constraint-based prompting

Constraint-based prompting means telling the model what to exclude alongside what you want. Constraints matter as much as instructions because they prevent the model from defaulting to filler choices you never asked for. Specifying "no stock photography aesthetic, no text overlays, no props other than the product" gives a visual model a defined boundary to work within, which produces tighter outputs from the start.

You apply constraints by adding a short "avoid" section at the end of your prompt. List the visual or tonal elements that would make the output unusable for your use case: specific styles, color palettes, compositions, or tones that conflict with your brand. This single habit cuts your iteration cycles and keeps generated assets on-brand without requiring manual correction after every generation.
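Because the avoid list rarely changes within a brand, it can live in one place and be appended to every prompt automatically. The Python sketch below is an assumption about how you might organize this, not a feature of any specific platform; `add_avoid_section` and `BRAND_AVOID` are hypothetical names.

```python
def add_avoid_section(prompt: str, avoid: list[str]) -> str:
    """Hypothetical helper: append a short 'Avoid' line listing what would make the output unusable."""
    if not avoid:
        return prompt
    return f"{prompt.rstrip()}\nAvoid: {', '.join(avoid)}."


# Brand-level constraints, defined once and reused for every generation.
BRAND_AVOID = ["stock photography aesthetic", "text overlays", "props other than the product"]

base = "Product shot of a ceramic mug on a wooden table, soft natural light."
print(add_avoid_section(base, BRAND_AVOID))
```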

Examples and ready-to-adapt prompt patterns

Reading about prompt engineering only gets you so far. Seeing real prompt structures you can modify and use immediately is where things start to click. The two patterns below cover the most common use cases for performance marketers: a UGC-style video ad and a product image. Use them as starting points, swap in your own product details, and adjust each variable until the output matches your creative direction.

A UGC-style video ad prompt

This format works well for direct-response ads on TikTok and Instagram. The goal is a candid, authentic feel that does not look produced, so the prompt needs to signal that style explicitly rather than letting the model default to polished commercial aesthetics.

The fastest way to improve a UGC prompt is to describe the creator, not just the product.

Here is a pattern you can adapt:

- Creator: 28-year-old woman, natural makeup, casual clothing, speaking directly to camera

- Setting: Home kitchen, natural window light, slightly cluttered countertop in background

- Action: Holding product, explaining one specific benefit in a conversational tone

- Camera style: Handheld, slight movement, close-medium framing

- Avoid: Studio lighting, text overlays, stock footage aesthetic, branded backgrounds

A product image prompt

Product image prompts need precise visual control because small differences in lighting or angle change how a product reads to a buyer. Build your prompt around the exact composition you want rather than describing the product alone.

Use this structure as your base:

- Subject: [Product name], [color], [material finish]

- Setting: Minimal white surface, soft diffused studio light from upper left

- Framing: Three-quarter angle, slight elevation, product centered with negative space on right

- Mood: Clean, modern, high-end but approachable

- Avoid: Shadows that obscure product detail, props that distract, over-saturated colors
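To apply this structure across a product line, fill the bracketed placeholders per product and keep every other line fixed, so only the subject changes between images. This Python sketch uses the standard `str.format` method; `PRODUCT_TEMPLATE` is a hypothetical name, and the tumbler entries are placeholder products.

```python
# The [Product name], [color], [material finish] placeholders become format fields.
PRODUCT_TEMPLATE = (
    "Subject: {product}, {color}, {finish}\n"
    "Setting: Minimal white surface, soft diffused studio light from upper left\n"
    "Framing: Three-quarter angle, slight elevation, product centered with negative space on right\n"
    "Mood: Clean, modern, high-end but approachable\n"
    "Avoid: Shadows that obscure product detail, props that distract, over-saturated colors"
)

# Placeholder product line: only the subject details differ.
product_line = [
    {"product": "Insulated tumbler", "color": "matte black", "finish": "powder-coated steel"},
    {"product": "Insulated tumbler", "color": "sage green", "finish": "powder-coated steel"},
]

prompts = [PRODUCT_TEMPLATE.format(**item) for item in product_line]
for prompt in prompts:
    print(prompt, end="\n\n")
```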

Adjusting one variable at a time between generations lets you isolate what is working and build a reliable prompt you can reuse across an entire product line.
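The one-variable rule is easy to enforce mechanically: start from a baseline and produce each variant by changing exactly one field. The Python sketch below is a hypothetical helper (`one_change_variants` is not from any library), and the lighting and framing options are examples only.

```python
def one_change_variants(
    base: dict[str, str], options: dict[str, list[str]]
) -> list[tuple[str, str, dict[str, str]]]:
    """Hypothetical helper: copy the baseline and change only one field per variant."""
    variants = []
    for field_name, values in options.items():
        for value in values:
            if value == base.get(field_name):
                continue  # identical to the baseline, so it would isolate nothing
            variant = dict(base)  # copy so the baseline stays untouched
            variant[field_name] = value
            variants.append((field_name, value, variant))
    return variants


baseline = {
    "Lighting": "soft diffused studio light from upper left",
    "Framing": "three-quarter angle, slight elevation",
    "Mood": "clean, modern, high-end but approachable",
}
options = {
    "Lighting": ["soft diffused studio light from upper left", "hard directional light from the right"],
    "Framing": ["overhead flat lay"],
}

for changed, value, variant in one_change_variants(baseline, options):
    print(f"[{changed} -> {value}]", "; ".join(variant.values()))
```

Each printed label tells you exactly which change produced the difference you see in the output.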

Next steps

Now that you have a clear picture of what prompt engineering is and the techniques behind it, the most productive thing you can do is start applying them immediately. Pick one prompt pattern from the examples above, swap in your product details, and run a few generations using the layered structure. Do not try to perfect every variable at once; focus on one element per iteration and pay attention to how each change shifts the output. That hands-on practice builds intuition faster than any amount of reading.

If you want to put these techniques to work across video, image, and audio generation without jumping between multiple tools, Starpop gives you access to the top AI models in a single workspace built specifically for marketing content. You can generate batch assets, analyze viral ad formats, and apply everything you have learned here directly to campaigns that need to perform.