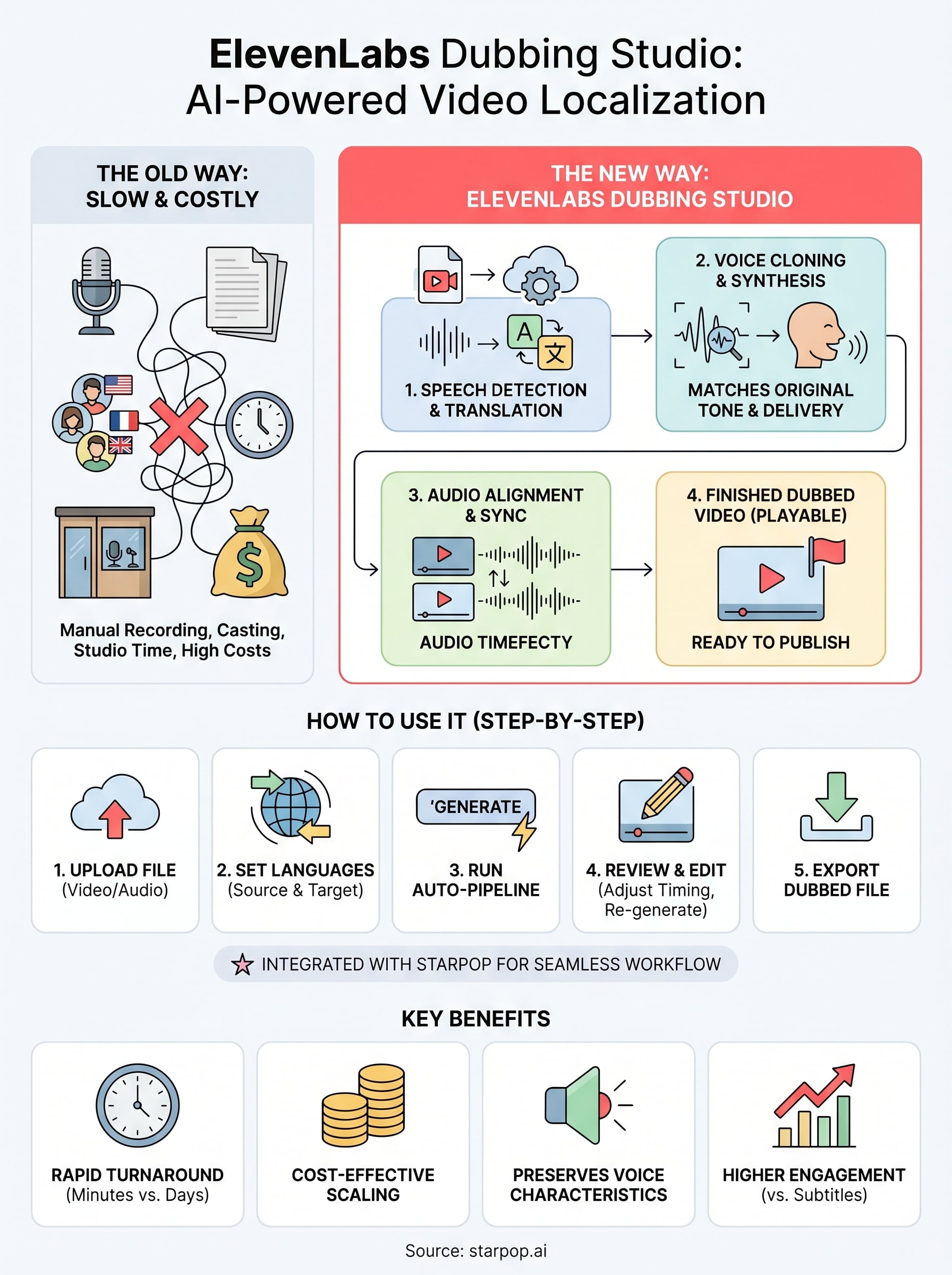

Translating video content used to mean re-recording every line with a new voice actor for each language. The ElevenLabs Dubbing Studio changed that by using AI to automatically translate, voice-match, and time-align dubbed audio across dozens of languages, all while keeping the original speaker's tone and delivery intact.

For e-commerce brands and agencies running ads across international markets, this is a big deal. Instead of producing separate video assets per region, you can dub a single ad into dozens of languages in minutes. No casting. No re-shoots. No bloated localization budgets.

At Starpop, we integrate ElevenLabs directly into our platform, so you can go from generating an AI video ad to dubbing it for global audiences without switching tools. It's one of the core reasons our users can scale campaigns internationally without the typical production overhead.

This article breaks down exactly how the ElevenLabs Dubbing Studio works, what it can (and can't) do, and how to start using it, whether standalone or as part of a broader AI content workflow.

What ElevenLabs Dubbing Studio is

The ElevenLabs Dubbing Studio is an AI-powered localization tool that takes a video or audio file and automatically produces a dubbed version in a target language, using a voice that matches the original speaker's characteristics. That means the system preserves tone, pacing, and vocal texture from the source recording. You don't get a generic text-to-speech replacement. Instead, the tool clones the speaker's voice from the uploaded content and regenerates the audio in the new language, keeping the original delivery intact as closely as the technology allows.

The end result is a dubbed video where the new audio sounds like the original speaker actually recorded it in that language, not a robotic substitute.

How it differs from standard translation tools

Standard translation tools give you a transcript or subtitles. The ElevenLabs Dubbing Studio goes further by generating new audio, synchronizing it with the video timeline, and outputting a finished dubbed file ready to publish. You get a playable video with the translated speech already embedded, not a text file you still have to hand off to a voice actor. The tool handles speech detection, translation, voice synthesis, and timing alignment as a connected pipeline rather than four separate manual steps.

For ad content at scale, this matters. Rebuilding each video asset from scratch for every target language is a production bottleneck most teams cannot sustain without significant budget and time.

What the studio interface gives you

Beyond the automated pipeline, the studio interface lets you edit the output manually. You can review the translated script segment by segment, adjust timing on individual lines, and re-generate specific phrases if the output is off. This gives you direct editorial control over the final dubbed file rather than forcing you to accept whatever the automation produces on the first pass.

Why AI dubbing matters for video localization

Running ads in only one language caps your addressable market from day one. If you want real international reach, you need localized video content, and the traditional dubbing process is neither fast nor cheap.

Professional dubbing for a single 30-second ad across five languages can cost several thousand dollars once you account for voice casting, studio time, and audio sync work.

The real cost of skipping localization

Manual dubbing requires hiring native voice actors for each language, coordinating recording sessions, and re-syncing the new audio to match the original video timeline frame by frame. For agencies managing multiple clients and ad variations, that workflow does not scale without a significant budget increase. Most teams default to subtitles instead, even though dubbed content often outperforms subtitled video on completion rates and paid social engagement.

Skipping proper localization also means your ad creative cannot adapt to regional audience preferences, which can hurt conversion rates in non-English markets. The ElevenLabs Dubbing Studio removes that barrier by automating the voice-matching and translation pipeline, so you can localize an ad in minutes rather than waiting days for a production team to turn around each language version.

How ElevenLabs Dubbing Studio works

The ElevenLabs Dubbing Studio runs on a four-stage automated pipeline: speech detection, translation, voice synthesis, and audio alignment. When you upload a video file, the system isolates the speech track from background audio, transcribes it, and routes that transcript through its translation engine. From there, it synthesizes the translated dialogue using a voice clone built from the original speaker, then maps the new audio back to the original video timeline.

The system handles every stage automatically, so you receive a finished dubbed file without manually managing any individual step.
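To make the four stages concrete, here is a minimal sketch of the pipeline as a chain of functions. It is a toy model, not ElevenLabs' implementation: the `Segment` type and every stage function are hypothetical stand-ins that only pass labeled strings along, so you can see what each stage consumes and produces.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Segment:
    start: float      # seconds into the source video
    end: float
    text: str         # transcript, later replaced by the translation
    audio: str = ""   # placeholder for the synthesized audio


def detect_speech(source: str) -> List[Segment]:
    # Hypothetical stand-in: pretend the source contains two spoken lines.
    return [Segment(2.5, 5.0, "Try it today"), Segment(0.0, 2.0, "Hello there")]


def translate(segments: List[Segment], target_lang: str) -> List[Segment]:
    # Stand-in for a translation engine: tag the text with the target language.
    return [Segment(s.start, s.end, f"[{target_lang}] {s.text}") for s in segments]


def synthesize(segments: List[Segment]) -> List[Segment]:
    # Stand-in for voice synthesis with a cloned voice.
    return [Segment(s.start, s.end, s.text, audio=f"voice({s.text})") for s in segments]


def align(segments: List[Segment]) -> List[Segment]:
    # Put segments back in timeline order so audio maps onto the original video.
    return sorted(segments, key=lambda s: s.start)


def dub(source: str, target_lang: str) -> List[Segment]:
    """Run detection, translation, synthesis, and alignment in order."""
    return align(synthesize(translate(detect_speech(source), target_lang)))
```

Calling `dub("ad.mp4", "es")` returns the two segments in timeline order, each carrying translated text and a placeholder audio label while keeping its original start and end times.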

How voice cloning fits into the process

Voice cloning is what separates AI dubbing from a basic text-to-speech swap. The studio samples your uploaded audio to extract the speaker's pitch, cadence, and tone. It then uses those captured characteristics to generate the translated speech in a matching vocal style, not a flat synthetic substitute. If you upload an ad with a high-energy presenter, the dubbed version reflects that energy in the target language rather than defaulting to a monotone output.

Each language version also gets its own timing track, since translated speech rarely runs the same length as the source. The studio adjusts phrase boundaries automatically to keep dubbed audio aligned with the video without requiring manual timeline edits from you.
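The article does not describe how the studio adjusts timing internally, so the following is only an illustration of the underlying problem. It assumes a simple strategy: speed a translated line up by a capped factor and report how much still spills past the original slot. The function name `fit_to_slot` and the 1.2x cap are hypothetical choices.

```python
def fit_to_slot(slot_seconds: float, speech_seconds: float, max_speedup: float = 1.2) -> dict:
    """Estimate how a translated line could be fit into its original time slot."""
    if slot_seconds <= 0 or speech_seconds <= 0:
        raise ValueError("durations must be positive")
    needed = speech_seconds / slot_seconds       # above 1 means the line runs long
    rate = min(max(needed, 1.0), max_speedup)    # never slow down, cap the speed-up
    overflow = max(0.0, speech_seconds / rate - slot_seconds)
    return {"rate": round(rate, 3), "overflow_seconds": round(overflow, 3)}
```

Under these assumptions, a 3.0-second translation in a 2.0-second slot hits the speed-up cap and still overflows by about half a second, which is the kind of line you would shorten or re-generate in the editor.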

How to use ElevenLabs Dubbing Studio step by step

Getting started with the ElevenLabs Dubbing Studio is straightforward once you know the input requirements and where to find the controls. You need a video or audio file, a source language, and a target language. That is the full setup.

The core workflow

Follow these steps to produce your first dubbed video from any uploaded source file:

- Upload your file: Go to the Dubbing Studio in your ElevenLabs account and upload your video or audio file. The system accepts most common formats.

- Set your languages: Select the source language of your original content and the target language you want to dub into.

- Run the dub: Hit generate. The pipeline handles transcription, translation, voice cloning, and audio alignment automatically.

- Review in the editor: Open the studio editor to check each dubbed segment, adjust timing, and re-generate any line that sounds off.

- Export: Download the finished dubbed video file, ready to publish or hand off to your media buyer.

You can dub into multiple target languages from a single upload, so one source file becomes a full set of localized assets without re-uploading.
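If you would rather script this workflow than click through it, the steps above map onto one request per target language. The sketch below only builds request descriptions and sends nothing. The endpoint URL, the `xi-api-key` header, and the `source_lang` and `target_lang` field names are assumptions based on ElevenLabs' public API reference, so check them there before relying on them. `build_dub_requests` is a hypothetical helper, not part of any SDK.

```python
from typing import Dict, List

# Assumption: endpoint path, header name, and field names should be verified
# against the official ElevenLabs API reference before use.
DUBBING_URL = "https://api.elevenlabs.io/v1/dubbing"


def build_dub_requests(api_key: str, source_lang: str, target_langs: List[str]) -> List[Dict]:
    """Describe one dubbing request per target language without sending anything."""
    if not target_langs:
        raise ValueError("at least one target language is required")
    planned = []
    for lang in dict.fromkeys(target_langs):  # drop duplicates, keep order
        if lang == source_lang:
            continue  # dubbing into the source language would be a no-op
        planned.append({
            "method": "POST",
            "url": DUBBING_URL,
            "headers": {"xi-api-key": api_key},
            "data": {"source_lang": source_lang, "target_lang": lang},
        })
    return planned
```

To submit a job you would attach the media file as a multipart upload using an HTTP client of your choice, then poll for completion and download the result. Those calls are left out here because they depend on API details the article does not cover.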

Quality, legal, and workflow best practices

The ElevenLabs Dubbing Studio produces solid output automatically, but a few practices will consistently improve the quality of your final dubbed assets. Starting with clean source audio is the most important factor, since background noise and overlapping speech reduce the accuracy of both transcription and voice cloning.

Review every translated segment before publishing

Always open the studio editor after your dub completes. Automated translation handles most content accurately, but technical product terms, brand names, and region-specific phrasing sometimes need manual corrections. Re-generating individual lines takes seconds and prevents errors from reaching your live ads.
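Part of this review can be automated before you listen to anything. The sketch below assumes you can get the source and translated text for each segment as plain strings (how you export them is up to your setup) and flags segments where a brand name or product term appears in the source but is missing from the translation. The function name and the segment dictionary shape are hypothetical.

```python
from typing import Dict, List


def find_missing_terms(segments: List[Dict[str, str]], protected_terms: List[str]) -> List[Dict]:
    """Flag segments where a protected term is in the source but not the translation."""
    flagged = []
    for index, seg in enumerate(segments):
        source = seg["source"].lower()
        translated = seg["translated"].lower()
        missing = [
            term for term in protected_terms
            if term.lower() in source and term.lower() not in translated
        ]
        if missing:
            flagged.append({"segment": index, "missing": missing})
    return flagged
```

A check like this only catches terms that were dropped or translated literally. It does not judge tone or regional phrasing, so it complements a manual pass rather than replacing it.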

Understand the legal requirements for AI-generated voices

Before dubbing content featuring real speakers, confirm you have explicit consent from every person whose voice gets cloned. Many jurisdictions protect a person's voice and likeness, and publishing AI-dubbed content without that consent carries real legal exposure.

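For AI-generated presenters with no real person behind the voice, the consent question does not arise in the same way. Your rights to publish the output still depend on the license terms of the tool that generated it, so check those before running the ad in a new market.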

Batch your localization work for efficiency

Rather than dubbing one language at a time, run multiple target languages from a single upload in one session. This approach saves both time and credits, which matters when you are managing ad sets across several international markets simultaneously.

What to do next

You now have a complete picture of how the ElevenLabs Dubbing Studio works, what it outputs, and how to use it without making avoidable mistakes. The next step is to actually run a dub on a real piece of content. Take any video ad you are already running in English, upload it, pick two or three target markets, and compare the dubbed versions against your subtitled fallbacks. The performance difference across paid social campaigns will show you quickly whether localized audio is worth the extra step for your specific audience.

If you want to skip the back-and-forth between separate tools, Starpop brings ElevenLabs directly into your content workflow, so you can generate an AI video ad and dub it for global markets inside one platform. No extra subscriptions or manual file transfers required. Start creating and dubbing AI video ads with Starpop and see how fast your localization pipeline can actually move.