
ElevenLabs dubbing is one of the most practical AI tools to emerge for anyone producing multilingual video content. It takes a source video, translates the speech, and generates a dubbed version that preserves the original speaker's voice, emotion, and timing: no voice actors, no recording studios, and no weeks of back-and-forth with localization vendors. For e-commerce brands and agencies running ads across multiple markets, that's a serious unlock.

But how does it actually work under the hood? What are the real limitations? And when does it make sense to use it versus other approaches? Those are the questions worth answering before you commit credits or budget to any dubbing workflow. We've spent considerable time working with ElevenLabs' models inside Starpop, our AI content creation platform, where dubbing and voice tools are part of the integrated pipeline our users rely on to produce and localize marketing assets at scale.

This article breaks down exactly how ElevenLabs dubbing functions in 2026, what it's best suited for, where it falls short, and how to get the most out of it for real production work.

What ElevenLabs dubbing does

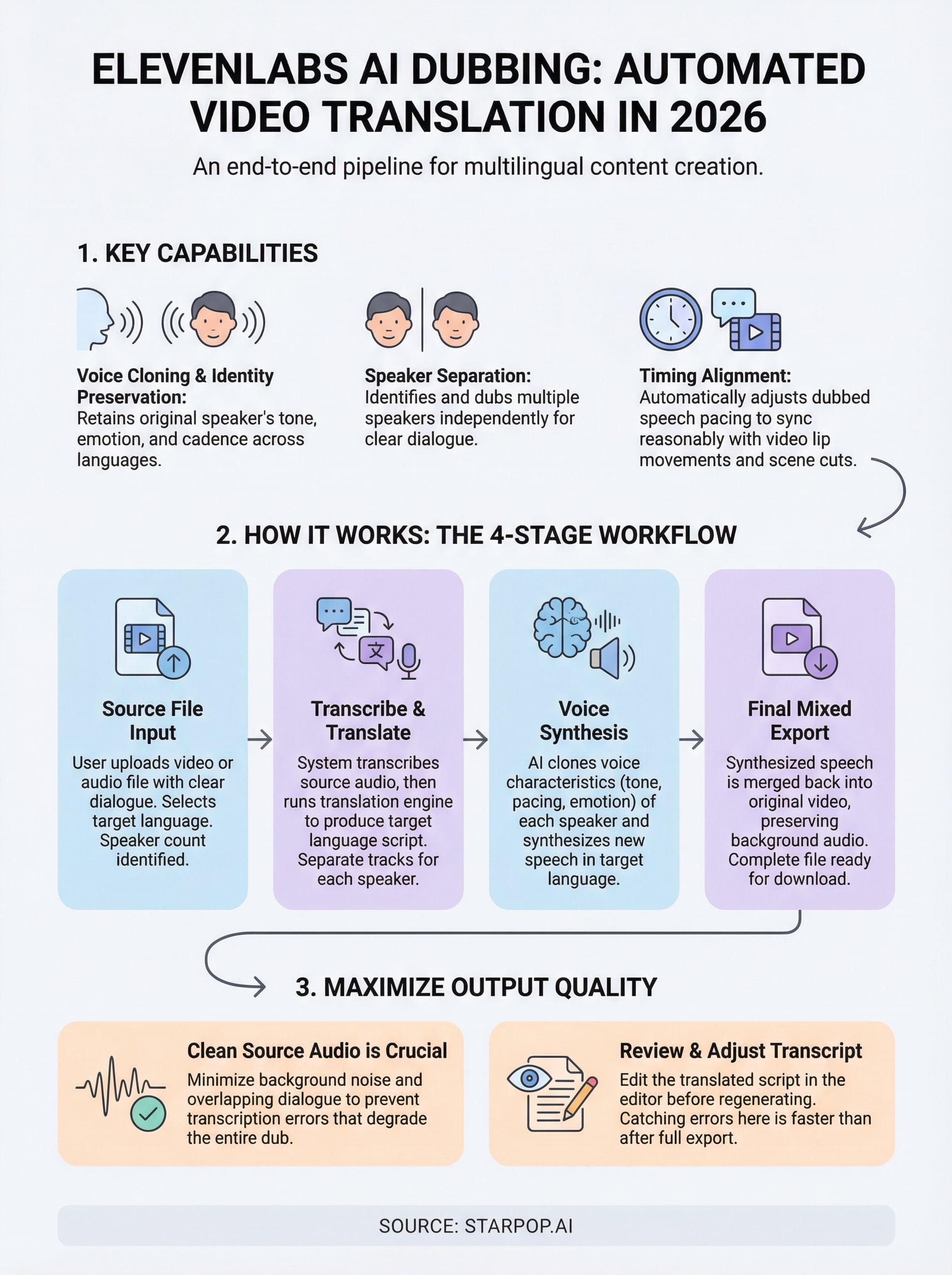

ElevenLabs dubbing is a fully automated translation and voice synthesis pipeline built into ElevenLabs' platform. You feed it a source video or audio file, select a target language, and it returns a dubbed version where the translated speech sounds like the original speaker, not a generic text-to-speech voice. The system handles transcription, translation, voice cloning, and audio mixing in one workflow rather than requiring you to stitch those steps together manually.

Voice cloning and speaker identity

The most important thing ElevenLabs dubbing does is preserve speaker identity across languages. When the system processes your video, it captures the voice characteristics of whoever is speaking, including tone, cadence, and emotional inflection. It then applies those characteristics to the translated speech output. If your original speaker sounds enthusiastic and fast-paced in English, the dubbed Spanish version carries the same energy.

This means your audience in a different market hears content that feels authentic rather than clearly synthetic.

Speaker separation and timing alignment

When a video contains multiple speakers, ElevenLabs dubbing identifies and separates each voice individually. It dubs each speaker independently, so you don't end up with one blended voice covering dialogue between two people. The system also handles timing alignment, adjusting the pacing of dubbed speech so it stays reasonably close to the lip movements and scene cuts in your original video. Alignment is a non-trivial technical problem, and while the results aren't perfect, they hold up well enough for most short-form ad content.
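ElevenLabs doesn't publish how its speaker separation works internally, so what follows is only a minimal sketch of the idea, assuming separation has already produced labeled, timestamped segments. The `Segment` type, the `split_by_speaker` helper, and labels like `speaker_0` are hypothetical: the point is that each speaker's lines end up on their own time-ordered track, which can then be dubbed independently.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class Segment:
    # Hypothetical structure for illustration; not ElevenLabs' internal format.
    speaker: str   # label assigned by speaker separation, e.g. "speaker_0"
    start: float   # seconds from the start of the video
    end: float
    text: str


def split_by_speaker(segments: list[Segment]) -> dict[str, list[Segment]]:
    """Group labeled segments into one time-ordered track per speaker."""
    tracks: dict[str, list[Segment]] = defaultdict(list)
    # Sort by start time so every speaker's track stays in playback order.
    for seg in sorted(segments, key=lambda s: s.start):
        tracks[seg.speaker].append(seg)
    return dict(tracks)
```

Keeping one track per speaker is what lets a pipeline clone and synthesize each voice separately instead of blending two people into one output voice.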

Your final output is a complete video file with the dubbed audio already mixed in, ready for review and export. You don't need to handle audio layering separately in a video editor, which removes a step that would otherwise add friction to a high-volume production workflow.

Why creators use AI dubbing

Traditional dubbing was always an option reserved for large production budgets. Hiring voice actors, booking studios, coordinating with translators, and syncing everything together took weeks and cost thousands per language per video. Most brands running performance ads simply didn't have that runway, so they skipped localization entirely and left money on the table in markets where their product had real demand.

AI dubbing changes the math entirely by collapsing what used to be a multi-week workflow into a process measured in minutes.

The cost of traditional localization

When you factor in translation, voice recording, and post-production audio work, dubbing a single two-minute video into five languages through a traditional vendor can easily run $10,000 or more. That number makes rapid iteration impossible. You can't A/B test localized creatives at that price point.
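Breaking the $10,000 figure above into unit costs shows why iteration stalls. The sketch below simply divides the article's example quote; the helper names are hypothetical, and it assumes cost scales linearly with languages, minutes, and creative variants, which real vendor pricing may not.

```python
def per_unit_costs(total_usd: float, languages: int, minutes: float) -> tuple[float, float]:
    """Split a quoted localization total into per-language and per-language-minute cost."""
    per_language = total_usd / languages
    per_language_minute = per_language / minutes
    return per_language, per_language_minute


def ab_test_cost(per_language: float, languages: int, variants: int) -> float:
    """Cost of localizing several creative variants into every target language.

    Assumes (hypothetically) that each variant is priced like a separate video.
    """
    return per_language * languages * variants
```

With the article's numbers, `per_unit_costs(10_000, 5, 2)` gives $2,000 per language and $1,000 per language-minute, and localizing three two-minute ad variants into the same five languages comes to $30,000 under the linear assumption.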

Speed and scale advantages

ElevenLabs dubbing gives you the ability to test localized ads in a new market the same day you decide to enter it. For e-commerce brands running paid social campaigns, that speed directly affects your cost per acquisition because you're not waiting on a vendor pipeline before you can start learning what resonates with a new audience. Faster iteration means faster optimization.

How ElevenLabs dubbing works

The process runs through several discrete stages automatically once you submit a file. Understanding what happens at each step helps you predict output quality and catch potential issues before you spend time reviewing a finished dub.

From transcript to translated script

ElevenLabs dubbing starts by transcribing the source audio using its built-in speech recognition layer. Once it has an accurate transcript, it runs that text through a translation engine to produce the target language script. The system also identifies how many speakers are present and assigns separate tracks to each one. Clean audio input matters significantly here because transcription errors carry forward into the translation and degrade the entire output.

- Noisy source audio increases transcription errors

- Multiple overlapping speakers reduce speaker separation accuracy

- Short clips under 30 seconds tend to produce cleaner results
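The three checks above can be encoded as a pre-upload checklist. This is a hypothetical helper, not part of ElevenLabs: you would measure the speech-to-noise ratio and the overlap yourself, and the 20 dB default is a placeholder assumption rather than a documented threshold. Only the 30-second guideline comes from the list above.

```python
from dataclasses import dataclass


@dataclass
class SourceClip:
    duration_s: float  # total clip length in seconds
    snr_db: float      # estimated speech-to-noise ratio, measured with your own tooling
    overlap_s: float   # seconds where two or more people speak at once


def preflight_warnings(clip: SourceClip, min_snr_db: float = 20.0) -> list[str]:
    """Return human-readable warnings for a clip before it is submitted for dubbing."""
    warnings = []
    if clip.snr_db < min_snr_db:  # placeholder threshold, tune for your material
        warnings.append("noisy audio: expect transcription errors")
    if clip.overlap_s > 0:
        warnings.append("overlapping speakers: speaker separation may be less accurate")
    if clip.duration_s >= 30:
        warnings.append("clip is 30 s or longer: consider splitting into shorter clips")
    return warnings
```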

Voice synthesis and final export

After translation, the system clones the voice characteristics of each identified speaker and synthesizes new speech in the target language using those parameters. This is where tone, pacing, and emotional delivery transfer from the original performance into the dubbed version. The final stage merges that synthesized speech back with the original video, preserving background audio while swapping out the spoken track. You receive a complete mixed file ready for download.
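ElevenLabs doesn't document its mixing stage, so this is a generic sketch of the underlying operation under stated assumptions: the background and the new speech already exist as separate stems, both are mono float samples in [-1.0, 1.0] at the same sample rate, and mixing means sample-wise addition. The `mix_tracks` name and `speech_gain` parameter are hypothetical.

```python
def mix_tracks(background: list[float], speech: list[float], speech_gain: float = 1.0) -> list[float]:
    """Sum a background stem and a dubbed speech stem sample by sample.

    The shorter stem is padded with silence, and the sum is hard-clipped
    to [-1.0, 1.0] so the output stays in range.
    """
    length = max(len(background), len(speech))
    bg = background + [0.0] * (length - len(background))
    sp = speech + [0.0] * (length - len(speech))
    return [max(-1.0, min(1.0, b + speech_gain * s)) for b, s in zip(bg, sp)]
```

Production mixers normally apply loudness normalization and a limiter instead of the hard clipping used here; clipping just keeps the sketch short.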

This end-to-end automation is what makes ElevenLabs dubbing viable for high-volume ad production rather than just one-off projects.

How to dub a video step by step

Running ElevenLabs dubbing through its native interface is straightforward, but your output quality depends heavily on the decisions you make before you hit submit. Following a consistent process keeps your results predictable across projects.

Prepare and upload your source file

Your first priority is clean source material. Before uploading, check that your video has clear audio with minimal background noise, no overlapping dialogue, and a consistent volume level throughout. Once you're satisfied with the file, upload it directly to ElevenLabs, then select your target language from the available options.

Spending two minutes reviewing your source audio before upload saves you from reviewing a flawed dub after the fact.

You'll also want to confirm the speaker count setting matches your actual video so the system separates voices correctly.
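If you submit jobs through the API instead of the web interface, the same choices (target language, speaker count) become request fields. The sketch below only assembles the request. The endpoint, the `xi-api-key` header, and the `target_lang`, `source_lang`, and `num_speakers` fields reflect ElevenLabs' public dubbing API reference as we understand it, where `num_speakers=0` means auto-detect; treat them as assumptions and check the current reference before relying on them. `build_dubbing_request` is a hypothetical helper.

```python
# Assumed endpoint; verify against the current ElevenLabs API reference.
DUBBING_URL = "https://api.elevenlabs.io/v1/dubbing"


def build_dubbing_request(api_key: str, target_lang: str, num_speakers: int = 0,
                          source_lang: str = "auto") -> tuple[str, dict[str, str], dict[str, str]]:
    """Return (url, headers, form fields) for a dubbing job; the video is attached separately."""
    if num_speakers < 0:
        raise ValueError("num_speakers must be 0 (auto-detect) or a positive count")
    headers = {"xi-api-key": api_key}
    fields = {
        "target_lang": target_lang,          # e.g. "es"
        "source_lang": source_lang,          # "auto" asks the service to detect it
        "num_speakers": str(num_speakers),   # match the speakers actually in the video
    }
    return DUBBING_URL, headers, fields
```

To send it, attach the video as a multipart `file` field with an HTTP client of your choice, for example `requests.post(url, headers=headers, data=fields, files={"file": f})` with `f` opened in binary mode.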

Review, adjust, and export

After the system processes your file, you receive a draft dub with an editable transcript alongside it. Read through the translated script before you listen, because catching obvious translation errors at this stage is faster than spotting them during playback. Make any text corrections directly in the editor, regenerate the affected segments, then export your final mixed video file once you're satisfied with the result.
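Regenerating only the segments you changed is what keeps corrections cheap. Assuming the editor exposes the translated script as a list of segments aligned one-to-one with the original (an assumption; `segments_to_regenerate` is a hypothetical helper), finding those segments is a simple comparison:

```python
def segments_to_regenerate(original: list[str], edited: list[str]) -> list[int]:
    """Return indices of translated segments whose text changed in the editor."""
    if len(original) != len(edited):
        raise ValueError("segment lists must align one-to-one")
    # Ignore leading/trailing whitespace so stray spaces don't trigger a regeneration.
    return [i for i, (before, after) in enumerate(zip(original, edited))
            if before.strip() != after.strip()]
```

For example, editing only the second of three segments returns `[1]`, so only that line needs to be resynthesized.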

Common issues and how to fix them

Even with a polished workflow, ElevenLabs dubbing produces imperfect results in specific situations. Knowing the most common failure points lets you fix them quickly instead of reprocessing entire files from scratch.

Transcription errors in the source

Noisy audio is the single most frequent cause of a degraded dub. If your source recording includes background music, ambient noise, or compression artifacts, the transcription layer makes errors that carry directly into your translated script and synthesized voice output. Fix this by running your source audio through a noise reduction tool before uploading, then re-check the transcript once the dub completes and correct any remaining errors in the editor before regenerating the affected segments.

Fixing a three-word transcription error takes seconds; catching it after full export costs you a full reprocessing cycle.
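Dedicated noise reduction tools typically work on the frequency spectrum, which is beyond a short example. As a much cruder illustration of cleaning audio before upload, here is a frame-based noise gate, a different and simpler technique: it mutes frames whose RMS level falls below a threshold. The function name, frame size, and threshold are hypothetical, and samples are assumed to be mono floats in [-1.0, 1.0].

```python
import math


def noise_gate(samples: list[float], frame_size: int, threshold_rms: float) -> list[float]:
    """Mute every frame whose RMS level falls below threshold_rms.

    A crude frame-based gate, not spectral noise reduction: it silences quiet
    gaps between phrases but leaves noise that overlaps speech untouched.
    """
    out: list[float] = []
    for start in range(0, len(samples), frame_size):
        frame = samples[start:start + frame_size]
        rms = math.sqrt(sum(x * x for x in frame) / len(frame))
        # Keep loud frames as-is; replace quiet frames with silence of equal length.
        out.extend(frame if rms >= threshold_rms else [0.0] * len(frame))
    return out
```

Because a gate only silences the gaps between phrases, noise under the speech itself still reaches the transcription layer, which is why a proper noise reduction tool is the better fix.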

Timing drift on longer videos

Pacing mismatches between the dubbed speech and the original lip movements become more noticeable as video length increases. Translated text often runs longer than the source language, which pushes the audio out of sync with on-screen action. You can reduce this by keeping your source scripts concise and trimming any padding from your original video before you submit it for dubbing.
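A simple model shows why drift grows with video length. Assuming every dubbed segment runs a fixed factor longer than its source segment and nothing compresses it back (in practice ElevenLabs adjusts pacing, so this is a worst case), the offset accumulates segment by segment. The `cumulative_drift` helper and the 1.2 expansion factor are hypothetical illustrations, not measured figures.

```python
def cumulative_drift(source_durations: list[float], expansion: float) -> list[float]:
    """Running offset in seconds between dubbed and source audio after each segment.

    Assumes each dubbed segment lasts `expansion` times its source segment
    and that no time-compression is applied afterwards.
    """
    drift = 0.0
    offsets = []
    for duration in source_durations:
        drift += duration * (expansion - 1.0)  # overrun added by this segment
        offsets.append(drift)
    return offsets
```

Ten 3-second segments at a 1.2 factor end roughly 6 seconds out of sync, while a single 3-second segment is off by about 0.6 seconds, which is why trimming padding and keeping scripts concise helps.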

Next steps

ElevenLabs dubbing gives you a real path to localized video content without the production overhead that made multilingual marketing impractical for most brands. You now understand how the pipeline works, where it performs well, and which inputs give you the best output. The key is applying that knowledge consistently: start with clean source audio, review transcripts before regenerating, and keep your source scripts tight to avoid timing drift on longer clips.

If you want to run dubbing alongside your broader ad production workflow, Starpop integrates ElevenLabs' voice tools directly into an all-in-one platform built for performance marketing. Rather than switching between separate tools for video creation, image generation, and localization, you handle everything in one place. That matters when you're producing at volume and need your creative pipeline to stay fast and organized. Try AI dubbing and video creation with Starpop and see how much faster your localization workflow can move.