
ElevenLabs produces some of the most natural-sounding AI voices available right now, but voice alone doesn't make a video. To create talking-head content that actually looks convincing, you need ElevenLabs lip sync, a way to match that audio to realistic facial movements. Whether you're producing UGC-style ads, product demos, or multilingual social content, getting the lip sync right is the difference between a scroll-stopper and something viewers immediately skip.

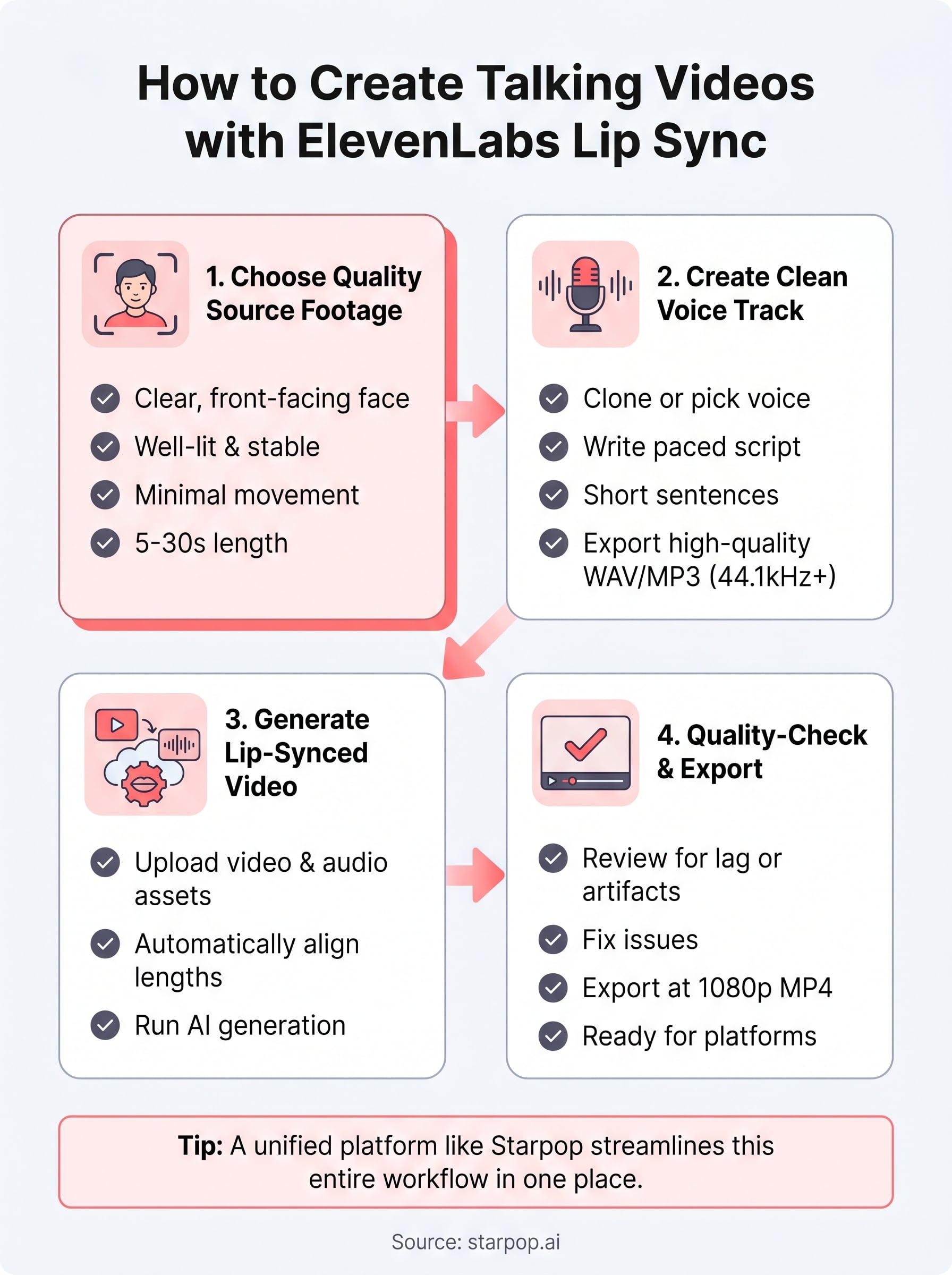

The challenge is that building a full talking video involves multiple steps: generating or cloning a voice, syncing it to a character's mouth movements, and producing the final visual asset. Doing this across separate tools gets messy fast, especially when you're testing dozens of ad variations or localizing content across markets. That's exactly why platforms like Starpop exist: we bundle ElevenLabs alongside other frontier AI models in a single workflow, so you can go from voice generation to lip-synced video without jumping between tabs.

This guide walks you through how ElevenLabs lip sync works, what it can (and can't) do natively, and how to pair it with video generation tools to produce full talking videos. You'll also see how Starpop's integrated pipeline handles the entire process in one place, so you can ship finished content faster without stitching together a Frankenstein stack of AI tools.

What ElevenLabs lip sync does and when to use it

ElevenLabs is primarily a voice generation platform: you clone a voice, type a script, and get back a studio-quality audio file. Lip sync is a separate layer that takes that audio and maps it onto a video of a human face so the mouth movements match what's being said. ElevenLabs lip sync matters most when you want the final output to look like a real person talking directly to camera, not just a voiceover playing over static footage or a product slideshow.

What the technology actually does

At its core, lip sync AI analyzes the phonemes (individual speech sounds) in the audio track and adjusts the shape of the mouth in the video, frame by frame, to match them. ElevenLabs offers a native lip sync tool, but the quality and flexibility of the output depend heavily on the source material you feed it. Clean source footage with a forward-facing subject at a consistent angle produces far more convincing results than low-resolution or heavily cropped clips.

The more stable and well-lit your source video, the less time you'll spend fixing awkward mouth movements in post.
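To make the phoneme-to-mouth-shape idea concrete, here is a deliberately simplified Python sketch. It assumes you already have phoneme timings, for example from a forced aligner. It also uses a small, hypothetical phoneme-to-viseme table. Production lip sync models learn this mapping from data rather than looking it up. Treat this as an illustration of frame-by-frame timing, not of how ElevenLabs or any other tool works internally.

```python
from dataclasses import dataclass

# Hypothetical, heavily simplified phoneme -> viseme (mouth shape) table.
# Real lip sync models learn this mapping; they don't use a fixed lookup.
VISEMES = {
    "AA": "open", "AE": "open", "AH": "open",
    "B": "closed", "M": "closed", "P": "closed",
    "F": "lip-teeth", "V": "lip-teeth",
    "OW": "rounded", "UW": "rounded", "W": "rounded",
}


@dataclass
class Phoneme:
    symbol: str   # ARPAbet-style symbol, e.g. "AA"
    start: float  # seconds
    end: float    # seconds


def visemes_per_frame(phonemes, fps=30, duration=None):
    """Return one mouth shape per video frame ("rest" where nothing is spoken)."""
    if duration is None:
        duration = max((p.end for p in phonemes), default=0.0)
    n_frames = int(round(duration * fps))
    frames = []
    for i in range(n_frames):
        t = (i + 0.5) / fps  # sample each frame at its midpoint
        shape = "rest"
        for p in phonemes:
            if p.start <= t < p.end:
                # Unknown phonemes fall back to a generic neutral shape.
                shape = VISEMES.get(p.symbol, "neutral")
                break
        frames.append(shape)
    return frames


# "Ma..." spoken over 0.3 s, rendered at 10 fps for readability.
print(visemes_per_frame([Phoneme("M", 0.0, 0.1), Phoneme("AA", 0.1, 0.3)], fps=10))
# → ['closed', 'open', 'open']
```

Sampling each frame at its midpoint keeps a phoneme from claiming a frame it only barely overlaps.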

When to use lip sync for your content

Lip sync adds real value in specific scenarios, not in every project, and knowing where it fits saves you time and credits. Use it when you need to:

- Localize ad creative into multiple languages without re-shooting with a human actor

- Produce UGC-style talking-head ads where direct delivery builds trust with the viewer

- Scale product demos across multiple markets or SKUs without hiring different spokespeople each time

- Test multiple script variations on the same character without additional production costs

When your video doesn't feature a speaking face, lip sync is irrelevant. For performance marketing where direct-to-camera delivery drives conversions, though, it's one of the highest-leverage tools in your stack.

Step 1. Choose the right source footage or image

The quality of your source material determines how convincing the final ElevenLabs lip sync output looks. You can have a perfect voice track, but if your footage is shaky, poorly lit, or shot at an angle, the lip sync will look off and viewers will notice immediately. Start with the right visual input before you do anything else.

What works best as source material

Your source video or image needs to meet a few basic criteria to get clean results. The subject's face should be clearly visible, front-facing, and well-lit with minimal motion blur. Avoid clips where the person's head moves dramatically or where there's heavy background movement, since the sync algorithm needs stable reference points to work from.

A 5-10 second clip of someone looking directly at camera in a neutral position gives you the best baseline to work from.

Use this checklist before uploading:

- Resolution: 1080p or higher

- Face angle: Front-facing within 15 degrees

- Lighting: Even, no harsh shadows across the face

- Background: Clean or blurred to reduce visual noise

- Length: 5 to 30 seconds for most ad formats
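If you're screening many clips, you can automate the measurable items on the checklist. This Python sketch assumes you've already read each clip's width, height, and duration, for example with a media probe. It also assumes a face tracker of your choice gives you a head-turn angle. The `ClipInfo` fields and default thresholds are illustrative. Lighting and background still need a human eye.

```python
from dataclasses import dataclass


@dataclass
class ClipInfo:
    width: int           # pixels
    height: int          # pixels
    duration_s: float    # seconds
    face_yaw_deg: float  # hypothetical: head turn away from camera, from your own face tracker


def checklist_issues(clip, min_side=1080, max_yaw_deg=15.0, min_len_s=5.0, max_len_s=30.0):
    """Return human-readable problems; an empty list means the measurable checks pass."""
    issues = []
    # Use the shorter side so vertical (1080x1920) clips count as 1080p.
    if min(clip.width, clip.height) < min_side:
        issues.append(f"resolution {clip.width}x{clip.height} is below {min_side}p")
    if abs(clip.face_yaw_deg) > max_yaw_deg:
        issues.append(f"face is turned {clip.face_yaw_deg:.0f} degrees (limit {max_yaw_deg:.0f})")
    if not min_len_s <= clip.duration_s <= max_len_s:
        issues.append(f"length {clip.duration_s:.1f}s is outside {min_len_s:.0f}-{max_len_s:.0f}s")
    # Lighting and background are judgment calls and are not checked here.
    return issues


print(checklist_issues(ClipInfo(width=1080, height=1920, duration_s=12.0, face_yaw_deg=4.0)))  # → []
```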

Step 2. Create the voice track in ElevenLabs

Once your footage is ready, open ElevenLabs and set up your voice track. For ElevenLabs lip sync to work well, the audio needs to be clean and properly paced before you export it. Rushing through voice generation can leave audio artifacts that make the sync look worse, even if your footage is perfect.

Pick your voice and write the script

Choose a voice that fits your audience and brand tone. ElevenLabs gives you access to pre-built voices or the option to clone your own from a short sample recording. Keep your script concise: aim for one clear sentence per thought, with natural pauses built in using commas or line breaks in the text input. This gives the lip sync algorithm cleaner audio segments to map against.

Short, punchy sentences with clear pauses produce noticeably more natural lip sync results than long, run-on lines.
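Here is a minimal Python sketch of that advice. It splits a script into one sentence per line, flags sentences that run long, and builds (but does not send) a text-to-speech request. The endpoint, `xi-api-key` header, `model_id`, and `output_format` value follow ElevenLabs' public REST API as we understand it, so check the current API reference before relying on them. The 15-word limit is an arbitrary assumption, and `VOICE_ID` and `API_KEY` are placeholders.

```python
import json
import re
import urllib.request

MAX_WORDS = 15  # assumption: a rough ceiling for a "short, punchy" sentence


def pace_script(script):
    """Split a script into one sentence per line at ., ! or ? followed by whitespace."""
    sentences = re.split(r"(?<=[.!?])\s+", script.strip())
    return [s for s in sentences if s]


def long_sentences(sentences, max_words=MAX_WORDS):
    """Return sentences that probably need to be broken up."""
    return [s for s in sentences if len(s.split()) > max_words]


def build_tts_request(text, voice_id, api_key,
                      model_id="eleven_multilingual_v2",
                      output_format="mp3_44100_128"):
    """Build (but don't send) an ElevenLabs text-to-speech request.

    Endpoint, header and parameter names reflect ElevenLabs' public REST API
    as we understand it; verify them against the current API reference.
    """
    url = (f"https://api.elevenlabs.io/v1/text-to-speech/{voice_id}"
           f"?output_format={output_format}")  # 44.1 kHz MP3 at 128 kbps
    body = json.dumps({"text": text, "model_id": model_id}).encode("utf-8")
    headers = {"xi-api-key": api_key, "Content-Type": "application/json"}
    return urllib.request.Request(url, data=body, headers=headers, method="POST")


script = "Meet the bottle that keeps coffee hot for 12 hours. No leaks. Try it today!"
lines = pace_script(script)
print(long_sentences(lines))  # → []
request = build_tts_request("\n".join(lines), voice_id="VOICE_ID", api_key="API_KEY")
```

Joining the sentences with line breaks mirrors the pacing tip in the text. To actually generate audio, pass the request to `urllib.request.urlopen` with real credentials and write the response bytes to an `.mp3` file.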

Export settings that matter

Before downloading, set the audio format to MP3 or WAV at 44.1kHz or higher. Avoid low-bitrate exports since they introduce compression artifacts that the sync algorithm can misread. Match the audio duration to your source clip length to minimize trimming work later.
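For WAV exports, Python's standard `wave` module is enough to confirm the sample rate and compare the audio length against your source clip before you upload anything. This is a sketch, and the 0.25-second tolerance is an assumption. MP3 files need a different reader because `wave` only handles uncompressed WAV.

```python
import wave


def check_wav(path, clip_seconds, min_rate=44100, tolerance_s=0.25):
    """Return problems with a WAV export; an empty list means it looks fine."""
    with wave.open(path, "rb") as wav:
        rate = wav.getframerate()
        duration = wav.getnframes() / rate
    issues = []
    if rate < min_rate:
        issues.append(f"sample rate {rate} Hz is below {min_rate} Hz")
    # tolerance_s is an assumption: small gaps are easy to trim or pad later.
    if abs(duration - clip_seconds) > tolerance_s:
        issues.append(f"audio runs {duration:.2f}s but the clip runs {clip_seconds:.2f}s")
    return issues
```

For example, `check_wav("voiceover.wav", clip_seconds=12.0)` (the filename is hypothetical) returns an empty list when the file is 44.1 kHz or higher and within a quarter second of the clip.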

Step 3. Generate a lip-synced talking video

With your footage and audio ready, you can now run the ElevenLabs lip sync process. If you're working in Starpop, upload both files directly to the lip sync tool in your workspace. The platform routes your assets through studio-grade lip sync processing without requiring you to export to a third-party app or manage API credentials manually.

Upload and match your assets

Drag your source video into the video slot and your ElevenLabs audio file into the audio slot. Starpop automatically aligns the audio length to your video clip, so if there's a minor duration mismatch, it trims or pads the output rather than leaving you with awkward silence or cutoffs. Double-check that the face in your footage is clearly visible in the first frame before you submit.

Submitting a clear, front-facing first frame helps the sync engine lock onto facial landmarks faster and reduces processing errors.
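Starpop's exact trimming and padding behavior isn't spelled out here, so this Python sketch only illustrates the general idea. It assumes raw PCM samples held in a list. It cuts any extra audio at the end, or appends silence (zero-valued samples) until the audio matches the video's duration.

```python
def fit_audio_to_video(samples, sample_rate, video_seconds):
    """Trim or zero-pad PCM samples so the audio lasts exactly video_seconds."""
    target = round(video_seconds * sample_rate)
    if len(samples) >= target:
        return samples[:target]  # cut the overrun at the end
    return samples + [0] * (target - len(samples))  # pad the tail with silence


print(fit_audio_to_video([3, -2, 5, 1, 4], sample_rate=2, video_seconds=2.0))  # → [3, -2, 5, 1]
print(fit_audio_to_video([3, -2], sample_rate=2, video_seconds=2.0))           # → [3, -2, 0, 0]
```

Working only at the end of the track leaves the speech timing untouched. A real pipeline might fade out or time-stretch instead.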

Run the generation and review the preview

Hit generate and let the model process the clip. Most short-form ad clips under 30 seconds complete in under two minutes. When the preview loads, scrub through the full clip at normal speed rather than frame by frame. Natural-speed review catches unnatural mouth movements that frame-by-frame inspection often misses.

Step 4. Export, quality-check, and fix issues fast

After lip sync processing completes, resist the urge to download and publish immediately. A quick quality-check pass catches the most common problems before your content goes live and can save you a full regeneration later.

Check for common lip sync issues

Watch the full clip at normal playback speed and look for these specific problems before you export:

- Mouth lag: Audio plays ahead of or behind the visible mouth movement by more than one frame

- Teeth artifacts: Unnatural smearing or blurring around the mouth region during fast speech

- Frozen frames: A brief moment where the face goes static while audio continues

- Audio clipping: Sudden cuts or pops in the voice track that disrupt sync accuracy
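You can estimate mouth lag instead of eyeballing it. This Python sketch assumes two hypothetical per-frame signals that you would have to produce yourself: audio loudness per video frame, and mouth openness per frame from a face-landmark tracker. It slides one signal against the other and picks the offset with the highest Pearson correlation. That is a standard cross-correlation approach, not something ElevenLabs or Starpop documents. A second helper counts samples stuck at full scale, a common sign of hard clipping in 16-bit audio.

```python
import math


def pearson(a, b):
    """Pearson correlation of two equal-length sequences (0.0 if either is flat)."""
    n = len(a)
    mean_a, mean_b = sum(a) / n, sum(b) / n
    cov = sum((x - mean_a) * (y - mean_b) for x, y in zip(a, b))
    spread_a = math.sqrt(sum((x - mean_a) ** 2 for x in a))
    spread_b = math.sqrt(sum((y - mean_b) ** 2 for y in b))
    if spread_a == 0 or spread_b == 0:
        return 0.0
    return cov / (spread_a * spread_b)


def estimate_lag_frames(audio_energy, mouth_open, max_lag=10):
    """Offset (in frames) that best aligns mouth movement with the audio.

    Positive: the mouth trails the audio. Negative: the mouth leads it.
    Both inputs hold one value per video frame.
    """
    best_lag, best_score = 0, -math.inf
    for lag in range(-max_lag, max_lag + 1):
        if lag >= 0:
            a, m = audio_energy, mouth_open[lag:]
        else:
            a, m = audio_energy[-lag:], mouth_open
        n = min(len(a), len(m))
        if n < 3:  # too little overlap to trust
            continue
        score = pearson(a[:n], m[:n])
        if score > best_score:
            best_lag, best_score = lag, score
    return best_lag


def clipped_fraction(samples, full_scale=32767):
    """Share of 16-bit PCM samples pinned at full scale (a sign of hard clipping)."""
    if not samples:
        return 0.0
    return sum(1 for s in samples if abs(s) >= full_scale) / len(samples)
```

Multiply the lag by `1000 / fps` to get milliseconds. At 30 fps one frame is about 33 ms, so an absolute lag above 1 corresponds to the "more than one frame" threshold in the list.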

If you spot mouth lag, add 100 ms of silence to the start of your audio in any free audio editor, re-upload it, and regenerate.
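If you'd rather script the fix than open an audio editor, this Python sketch prepends silence to a WAV file using only the standard `wave` module. It assumes uncompressed PCM WAV input, and the 100 ms default comes from the tip above. Silence is zero bytes for 16-bit and wider samples. 8-bit WAV is unsigned, so its silent value is the midpoint byte 0x80.

```python
import wave


def prepend_silence(src, dst, silence_ms=100):
    """Write a copy of the WAV file at src to dst with silence_ms of silence at the start."""
    with wave.open(src, "rb") as wav_in:
        params = wav_in.getparams()
        audio = wav_in.readframes(wav_in.getnframes())
    n_silent = round(params.framerate * silence_ms / 1000)
    # 8-bit WAV is unsigned (silence = 0x80); wider PCM is signed (silence = 0x00).
    fill = b"\x80" if params.sampwidth == 1 else b"\x00"
    silence = fill * (n_silent * params.sampwidth * params.nchannels)
    with wave.open(dst, "wb") as wav_out:
        wav_out.setparams(params)  # the wave module patches the header's frame count as data is written
        wav_out.writeframes(silence + audio)
```

For example, `prepend_silence("voiceover.wav", "voiceover_padded.wav")` writes the padded copy (both filenames are hypothetical) and leaves the original untouched.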

Export the final file

Once the clip passes your review, export at 1080p minimum to preserve the quality of the lip sync rendering. Choose MP4 with H.264 encoding for the broadest compatibility across ad platforms, including Meta, TikTok, and YouTube. Avoid re-compressing the exported file after download, since each compression pass degrades the mouth region noticeably and undoes the work you put into clean source footage.
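To confirm an export meets these targets without re-encoding it, you can read its stream metadata. This Python sketch assumes FFmpeg's `ffprobe` tool is installed and on your PATH. It only reads the file, so nothing is re-compressed. It treats the shorter side as the resolution so that vertical 1080x1920 exports pass. It does not check the MP4 container itself.

```python
import json
import subprocess


def ffprobe_args(path):
    """ffprobe command that prints the first video stream's codec and size as JSON."""
    return ["ffprobe", "-v", "error", "-select_streams", "v:0",
            "-show_entries", "stream=codec_name,width,height",
            "-of", "json", path]


def export_issues(probe_json, min_side=1080):
    """Check ffprobe's JSON output against the H.264 / 1080p export targets."""
    streams = json.loads(probe_json).get("streams", [])
    if not streams:
        return ["no video stream found"]
    video = streams[0]
    issues = []
    if video.get("codec_name") != "h264":
        issues.append(f"codec is {video.get('codec_name')}, expected h264")
    # Shorter side, so vertical 1080x1920 exports pass.
    if min(video.get("width", 0), video.get("height", 0)) < min_side:
        issues.append(f"resolution {video.get('width')}x{video.get('height')} is below {min_side}p")
    return issues


def check_export(path):
    """Run ffprobe (read-only, nothing is re-encoded) and return any issues."""
    result = subprocess.run(ffprobe_args(path), capture_output=True, text=True, check=True)
    return export_issues(result.stdout)
```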

Next steps

You now have a complete picture of how ElevenLabs lip sync works, where it fits into a real production workflow, and what separates a clean final output from one that looks off. The process comes down to three things: quality source footage, a well-paced audio track, and a sync tool that handles the rendering without forcing you to manage a chain of separate apps.

From here, the fastest move is to put this into practice with a real ad or social clip rather than a test project. Pick one piece of content you already planned to produce, apply the steps in this guide, and use the quality-check process before you export. Every pass through the workflow makes the next one faster.

If you want to run the full pipeline in one place, from voice generation to lip sync to final export, try Starpop's AI video and lip sync tools and see how much faster you ship finished content.